News

The latest from the AI agent ecosystem, updated multiple times daily.

OpenAI's AGI principles: democratize everything except the models

OpenAI's five new AGI principles promise democratization and universal prosperity while dodging hard lines on weapons and surveillance. The Worldcoin connection isn't coincidental.

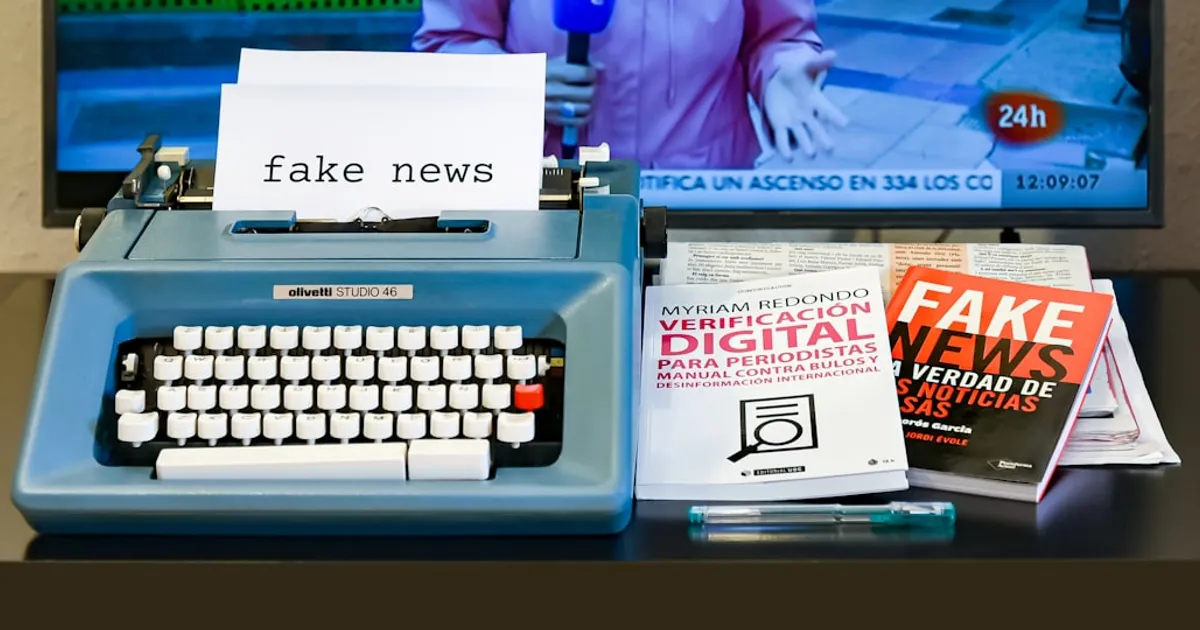

Reporters at this news site are AI bots. OpenAI appears to fund it

An investigation reveals AcutusWire.com, a news site with 94 articles published in four months, is entirely AI-generated. The site's exposed code shows it uses an automated pipeline with AI interviewers, multi-pass editorial review, and content generation. The articles appear to be funded by Leading The Future, a $125 million super PAC backed by OpenAI president Greg Brockman and investor a16z. The site publishes content attacking AI industry critics and pushing anti-regulation talking points, with apparent connections to Republican PR firm Novus Public Affairs. The Creative Commons licensing is moot since AI-generated works can't receive copyright protection.

Moleskine's AI Tolkien Notebooks Invent Fake Middle-earth Locations

Moleskine released a Lord of the Rings collection with AI-generated artwork and minimal disclosure. Disclaimers appeared inconsistently, promotional maps contained nonsensical names like 'Der Rarmorth,' and no human artists were credited. After backlash, Moleskine claimed AI was only used for promotional backgrounds, then removed the disclaimer entirely.

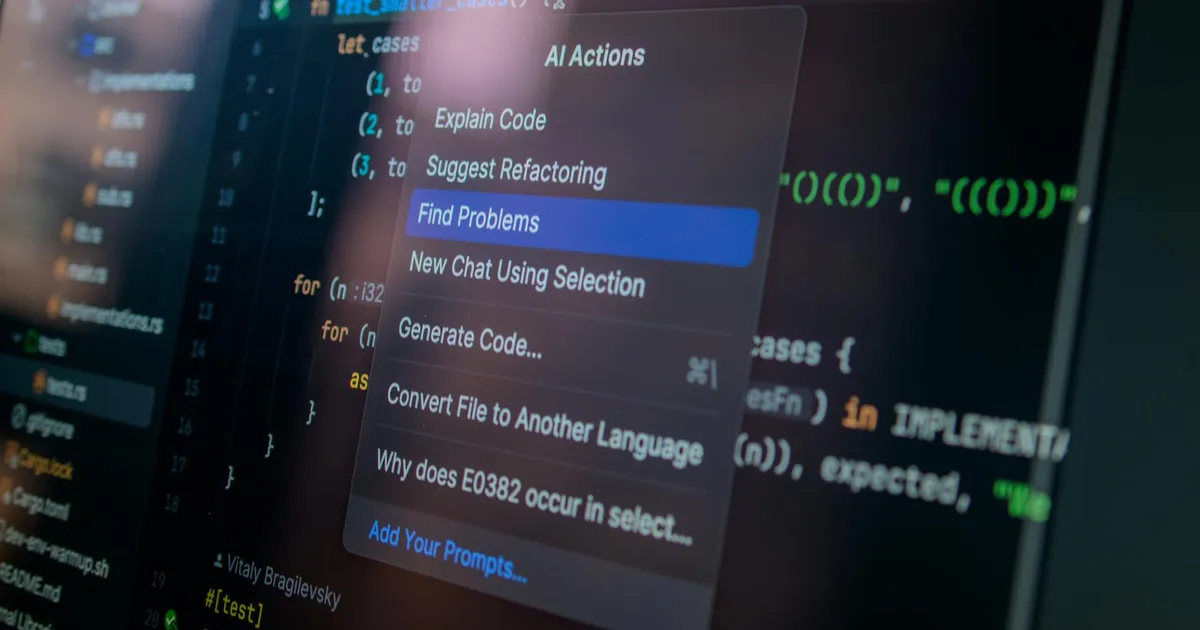

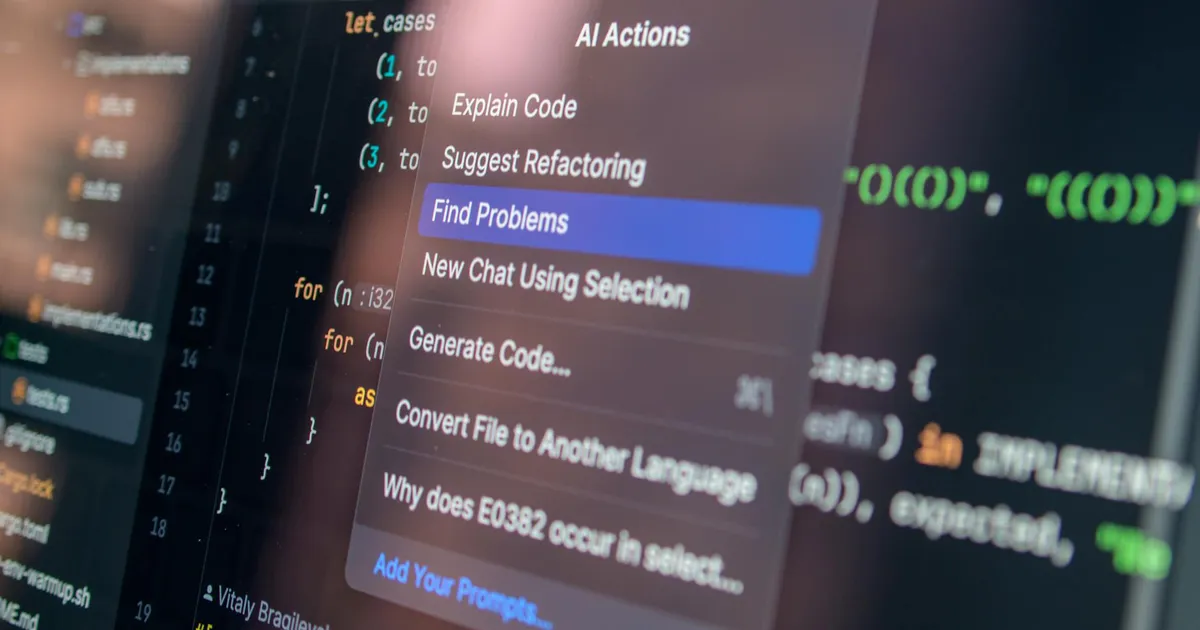

Dirac OSS Agent Crushes Google's Baseline on TerminalBench

Dirac is an open-source AI coding agent that achieved a 65.2% score on the TerminalBench 2.0 leaderboard using gemini-3-flash-preview, outperforming Google's official baseline (47.6%) and the top closed-source agent Junie CLI (64.3%). It focuses on token efficiency and context curation, reducing API costs by 64.8% on average while producing better work through hash-anchored parallel edits, AST manipulation, and multi-file batching.

Routing Claude Code through Ollama: 90% savings, zero attribution

A GitHub repository and tutorial demonstrate a two-engine setup that routes Claude Code through Ollama to cut costs. Strategic work stays on Claude Pro while heavy tasks (lints, refactors, batch ops) are offloaded to free open-source models like Gemma, Qwen, or DeepSeek. HN commenters point out that the project wraps the @musistudio/claude-code-router npm package without proper attribution.

Dutch central bank ditches AWS and chooses Lidl for European cloud

De Nederlandsche Bank (DNB), the Dutch central bank, is signing a major contract with Schwarz Digits (the IT arm of Lidl owner Schwarz Group) to use its Stackit cloud platform. The move aims to reduce dependence on American cloud companies like AWS, Google Cloud, and Microsoft Azure, driven by concerns about cloud sovereignty and the US CLOUD Act, which allows US authorities to access data. Schwarz Digits is positioning Stackit as a European alternative to American hyperscalers, with a recent 11 billion euro investment in a data center in Lübbenau.

Tendril starts with three tools and builds the rest itself

Tendril is an agentic sandbox where an AI model builds and registers its own tools as it works. Starting with just three bootstrap commands, the agent creates what it needs and saves it for next time. Built with AWS Strands Agents SDK, Tauri, and Claude Sonnet 4.5.

Google Bets AI Edge Can Close Cloud Gap With Amazon, Microsoft

Google Cloud is pushing AI to catch AWS and Azure, but history says technical superiority alone won't win enterprise deals. Google open-sourced TensorFlow in 2015 and built custom TPUs early, yet still trails rivals who spent years building deep corporate relationships. The question isn't whether Google can build better AI tools. It's whether enterprises trust Google to support them long-term.

Your GPU is barely working. Utilyze proves your dashboard lies

Systalyze has open-sourced Utilyze, a GPU monitoring tool that measures real compute throughput for AI workloads. Unlike standard tools such as nvidia-smi and nvtop, which only report whether a GPU is active, Utilyze reads hardware performance counters to measure actual GPU efficiency, revealing that a dashboard showing 100% utilization can be running at as little as 1% of real capacity.

Nvidia VP: Compute now costs more than our employees

Nvidia's VP says compute now costs more than employees. Uber's CTO already burned through 2026's AI budget on tokens. The AI cost problem is here, and companies are scrambling for cheaper alternatives.

Chrome Now Ships an On-Device LLM. Most Laptops Need Not Apply.

Google's Prompt API lets Chrome run Gemini Nano locally, no API keys or cloud calls required. The catch: the model download is larger than Chrome itself, and the hardware requirements shut out most laptops.

Canva AI swapped Palestine for Ukraine. Nobody asked.

Canva's Magic Layers AI was caught automatically replacing the word 'Palestine' with 'Ukraine' in user designs. The feature is supposed to separate images into editable layers, not rewrite text. Canva has fixed the bug and apologized, but the incident raises questions about how well AI toolmakers understand their own models.

Google Trains AI Across Four Regions, No Supercluster Required

Google DeepMind's Decoupled DiLoCo splits AI training into asynchronous 'islands' of compute that isolate hardware failures and let training continue uninterrupted. In tests with Gemma 4 models, it achieved the same ML performance as conventional methods while being 20x faster, successfully training a 12B parameter model across four US regions. If this approach catches on, the competitive edge shifts from who builds the biggest cluster to who writes the best distributed training software.

Claude Code + Ollama: ~90% cheaper, but who gets credit?

A GitHub repo shows how to route Claude Code through Ollama, swapping paid Claude API calls for open-source models like Gemma, Qwen, and DeepSeek. Claimed savings: roughly 90%. The setup pairs Claude Desktop for strategic work with Ollama-backed Claude Code for heavy tasks like lints and refactors.

OpenAI's AGI Principles Paper Over Its Own Contradictions

OpenAI published five guiding principles for the AGI era, promising democratization and shared prosperity. But the gap between these ideals and the company's Microsoft-dependent, increasingly closed operations tells a different story.

Your GPU Dashboard Lies: nvidia-smi Can Show 100% at 1% Throughput

Utilyze is an open-source GPU monitoring tool that measures real compute throughput, not just kernel activity. Standard tools like nvidia-smi and nvtop report whether a GPU is active, not how efficiently it's working. A dashboard showing 100% utilization can actually deliver just 1% of potential throughput. Utilyze reads hardware performance counters to expose the gap and help teams avoid buying hardware they don't need.
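The gap between "active" and "efficient" comes down to two different ratios. The sketch below is only an illustration of that arithmetic, not Utilyze's implementation: the peak figure, the function names, and the sample numbers are all hypothetical.

```python
# Illustrative numbers only; none of these come from Utilyze or a real GPU.
PEAK_TFLOPS = 1000.0  # hypothetical peak throughput of the device


def time_based_utilization(busy_seconds: float, window_seconds: float) -> float:
    """Percent of the sampling window in which at least one kernel was running."""
    return 100.0 * busy_seconds / window_seconds


def throughput_utilization(achieved_tflops: float,
                           peak_tflops: float = PEAK_TFLOPS) -> float:
    """Percent of peak compute throughput actually delivered."""
    return 100.0 * achieved_tflops / peak_tflops


# A small kernel keeps the GPU "busy" for the whole window but does little math.
busy = time_based_utilization(busy_seconds=1.0, window_seconds=1.0)
real = throughput_utilization(achieved_tflops=10.0)
print(f"time-based: {busy:.0f}%  throughput-based: {real:.0f}%")
```

With these made-up inputs the time-based metric reads 100% while the throughput-based one reads 1%, which is the kind of mismatch the article describes.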

Replace junior devs with AI, watch your seniors cash in

Justin Smestad argues that cutting junior engineer hires to save money backfires. Juniors are 'salary insurance' who grow into senior roles. Without them, companies depend on expensive seniors with real leverage. AI changes what junior work looks like but doesn't eliminate the need for a pipeline. Shopify is cited as a company investing in early-career hiring while betting on AI.

AI's power hunger to push US electricity to record highs in 2026

US electricity demand will hit record highs in 2026 and 2027 thanks to AI data centers. Grid capacity can't keep up with chip development, and some data center builds are already stalled. Energy availability may matter more than model improvements for what happens next with AI.

Sam Altman's World ID wins U.S. corporate backing despite global bans

Tinder, Zoom, and Docusign are partnering with World (formerly Worldcoin), Sam Altman's iris-scanning biometric ID project. While U.S. companies embrace the technology, countries across Asia, Africa, Europe, and Latin America have banned or halted World over privacy violations, including collecting minors' data and paying people for iris scans. The project claims 18 million verified users, but many received $50 in crypto to sign up.

Sam Altman Wants to Rethink Operating Systems. OpenAI Might Build One.

OpenAI CEO Sam Altman tweeted that operating systems and user interfaces need a fundamental rethink. The comment has sparked speculation about whether OpenAI plans to build its own AI-first operating system.

Chrome Bakes Gemini Nano Into the Browser

Chrome now runs Gemini Nano locally for AI without cloud calls. The trade-offs are steep: a huge download and a model that struggles with extended conversations. Desktop only.

Builder creates ML-powered flipdisc wall, open-sources the library

A guide walks through building a custom interactive flipdisc display from 9 AlfaZeta panels in a 3x3 grid (84x42 discs total), powered by a 24V Meanwell supply and an Nvidia Orin Nano that runs MediaPipe for machine learning. It includes Node.js libraries for display control, WebGL/Canvas rendering, and an Expo mobile app interface. The author open-sourced the flipdisc library for AlfaZeta and Hanover boards.

Nvidia VP: AI Compute Costs Now Exceed Team's Salaries

Some companies are now spending more on AI compute than on human workers. Nvidia's VP of applied deep learning says compute costs far exceed employee salaries for his team, while Uber's CTO reportedly burned through the company's entire 2026 AI budget on token costs alone. As global IT spending heads toward $6.31 trillion, companies face growing pressure to show returns on AI investments and get costs under control.

AgentSwarms: Free Playground That Teaches Real Agent Guardrails

AgentSwarms is a free interactive platform for learning agentic AI by building agents. Five tracks, 40+ lessons, 30 runnable agents covering prompts, RAG, function calling, guardrails, multi-agent swarms, and observability. Unlike most agent tutorials, it teaches production patterns like PII redaction and prompt-injection defense alongside basics. Zero setup in Learn Mode; bring your own API keys in Build Mode for deeper work.

AI is hungry for electricity and America's grid can't keep up

A Reuters report discusses how AI workloads and data center expansion are projected to drive US power demand to record levels in 2026-2027, according to the U.S. Energy Information Administration (EIA). The story and Hacker News comments highlight emerging concerns about energy infrastructure scaling slower than chip technology, potentially creating a significant constraint for AI development.

Cursor Deleted Railway Production Data and Backups

An AI coding assistant deleted production data and backups on Railway. We spent a decade building access controls to keep humans from breaking production databases. Then we gave AI agents write access to both production and backup systems.

Mercor just lost 40,000 voices. You can't change yours.

Lapsus$ stole 4TB of voice recordings paired with government IDs from 40,000 Mercor contractors who were recording audio for AI training. Studio-quality voice plus verified identity documents is a ready-made toolkit for bank fraud, deepfake calls, and impersonation scams. Unlike a password, you can't just reset your voice. This piece covers how the data gets weaponized and where victims can get help.

Altman Wants to Kill the Traditional Operating System

Sam Altman's tweet about rethinking OS design isn't hot air. OpenAI is building hardware with Jony Ive that treats conversation as the interface and the operating system as plumbing.

Git-pinned cache cuts AI agent costs in half

An experiment shows that using a Git-based cache to pre-verify and pin facts about a codebase can reduce AI agent token costs by 51%. The approach uses Claude Haiku to scan repos and create claims pinned to Git blob OIDs, with Merkle root computation to automatically mark claims as stale when files change. Results: cost reduction from $4.35 to $2.13 per session, 16% faster wall time.
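The staleness mechanism can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the experiment's code: `pin_claim`, `is_stale`, the claim layout, and the way leaves are combined into a root are all hypothetical. Only the blob OID formula (SHA-1 over a `blob <size>\0` header plus the content) is Git's own.

```python
import hashlib


def git_blob_oid(content: bytes) -> str:
    """SHA-1 object ID that Git assigns to a blob with this content."""
    header = b"blob " + str(len(content)).encode() + b"\0"
    return hashlib.sha1(header + content).hexdigest()


def merkle_root(pins: dict[str, str]) -> str:
    """Fold (path, OID) leaves pairwise into one root hash (assumed scheme)."""
    level = [hashlib.sha1(f"{path}\0{oid}".encode()).digest()
             for path, oid in sorted(pins.items())]
    if not level:
        return hashlib.sha1(b"").hexdigest()
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # duplicate the last node on odd levels
        level = [hashlib.sha1(level[i] + level[i + 1]).digest()
                 for i in range(0, len(level), 2)]
    return level[0].hex()


def pin_claim(text: str, files: dict[str, bytes], paths: list[str]) -> dict:
    """Record a claim together with the blob OIDs of the files it depends on."""
    pins = {p: git_blob_oid(files[p]) for p in paths}
    return {"text": text, "pins": pins, "root": merkle_root(pins)}


def is_stale(claim: dict, files: dict[str, bytes]) -> bool:
    """A claim is stale if a pinned file is gone or the root no longer matches."""
    if any(p not in files for p in claim["pins"]):
        return True
    current = {p: git_blob_oid(files[p]) for p in claim["pins"]}
    return merkle_root(current) != claim["root"]


repo = {"app.py": b"print('hi')\n", "util.py": b"X = 1\n"}
claim = pin_claim("app.py prints a greeting", repo, ["app.py"])
print(is_stale(claim, repo))   # unrelated files can change without effect
repo["app.py"] = b"print('bye')\n"
print(is_stale(claim, repo))   # the pinned blob changed, so the claim is stale
```

Because the check only hashes file content, a cached claim can be reused without asking the model to re-read the file, which is where the token savings would come from.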

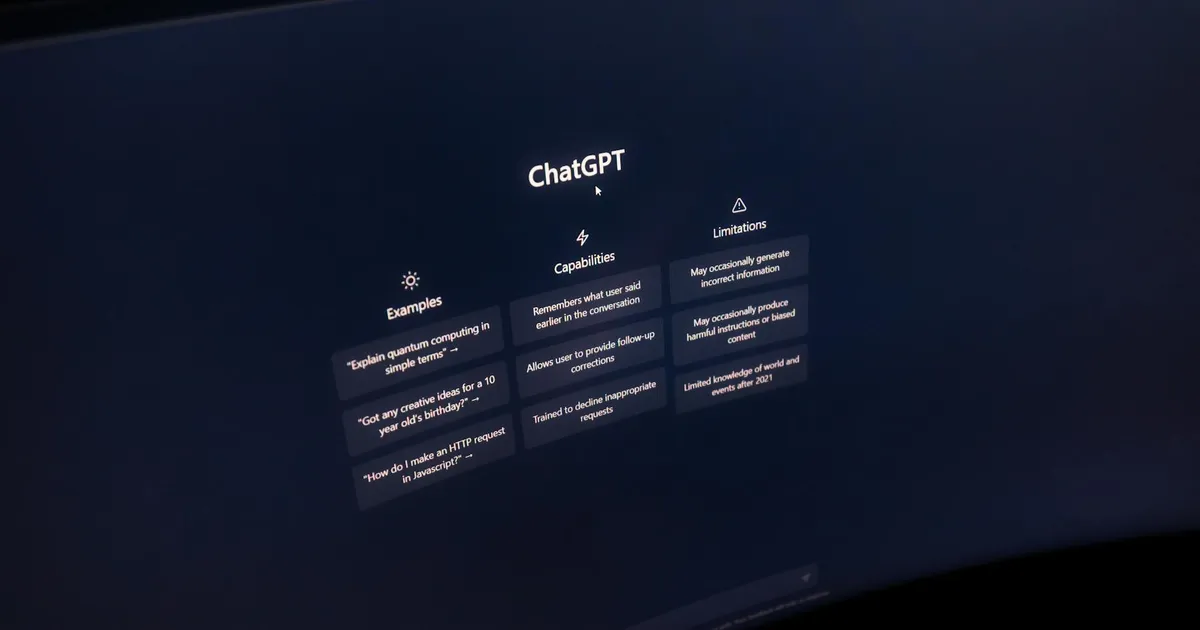

Cal Newport to AI Industry: Who Asked for This?

Cal Newport argues Silicon Valley shifted from solving customer problems to 'inventing the future,' pursuing AI without clear market utility. While LLMs have more potential than NFTs or the metaverse, ordinary people mainly use ChatGPT as a search tool and face conflicting narratives about AI's job impact.

EvanFlow: TDD Feedback Loop for Claude Code

EvanFlow enforces a TDD loop for Claude Code with human approval at every stage. Ships with 16 skills, 2 subagents, and guardrails against common agentic coding failures.

Microsoft Ends Revenue Sharing With OpenAI

Microsoft is ending its revenue-sharing agreement with OpenAI, giving the startup more flexibility to compete with rivals like Anthropic. The move also helps Microsoft stay ahead of regulatory scrutiny in the US and Europe. Separately, GitHub is transitioning Copilot to usage-based billing, signaling the end of free AI coding assistance.

Google's DiLoCo Trains AI on Mixed Hardware, 20x Faster

Google DeepMind announces Decoupled DiLoCo, a new architecture for training large language models across globally distributed data centers. The approach splits training into decoupled 'islands' of compute with asynchronous data flow. It isolates hardware failures and needs far less bandwidth than traditional methods. Tested on Gemma 4 models, the system matched conventional training performance while running 20x faster and mixing different hardware generations (TPU v6e and TPU v5p).

TurboQuant's 2-Bit Compression Faces Prior Art Challenge

An interactive walkthrough explains TurboQuant, a method compressing AI vectors (embeddings, KV caches, attention keys) to 2-4 bits per number using a random rotation technique. This transforms input vectors into coordinates following a known distribution, enabling a single shared lookup table (codebook) for any input without extra metadata. The presentation sparked an attribution dispute with researchers behind earlier quantization schemes DRIVE and EDEN, who claim TurboQuant is a restricted version of their prior work.
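The rotate-then-look-up idea can be sketched in plain Python. This is not TurboQuant's algorithm: it swaps in a randomized Hadamard transform as the rotation, assumes unit-length inputs whose dimension is a power of two, and uses an assumed 2-bit codebook (approximate Lloyd-Max levels for a standard normal). All function names are hypothetical.

```python
import math
import random

# Assumed 2-bit codebook: approximate Lloyd-Max levels for a standard normal.
CODEBOOK = [-1.510, -0.4528, 0.4528, 1.510]
THRESHOLDS = [-0.9816, 0.0, 0.9816]


def hadamard(v: list[float]) -> list[float]:
    """Orthonormal fast Walsh-Hadamard transform; len(v) must be a power of two."""
    v = list(v)
    h = 1
    while h < len(v):
        for i in range(0, len(v), 2 * h):
            for j in range(i, i + h):
                v[j], v[j + h] = v[j] + v[j + h], v[j] - v[j + h]
        h *= 2
    scale = 1.0 / math.sqrt(len(v))
    return [x * scale for x in v]


def make_signs(d: int, seed: int) -> list[float]:
    """Random sign flips shared by encoder and decoder."""
    rng = random.Random(seed)
    return [rng.choice((-1.0, 1.0)) for _ in range(d)]


def encode(x: list[float], signs: list[float]) -> list[int]:
    """Rotate a unit vector, then map each coordinate to a 2-bit index."""
    rotated = hadamard([a * s for a, s in zip(x, signs)])
    scaled = [r * math.sqrt(len(x)) for r in rotated]  # roughly unit variance
    return [sum(c > t for t in THRESHOLDS) for c in scaled]


def decode(codes: list[int], signs: list[float]) -> list[float]:
    """Look up codebook values, then undo the rotation."""
    d = len(codes)
    approx = [CODEBOOK[c] / math.sqrt(d) for c in codes]
    # The normalized Hadamard matrix is its own inverse; then undo the signs.
    return [a * s for a, s in zip(hadamard(approx), signs)]


d = 64
raw = [math.sin(i + 1) for i in range(d)]
norm = math.sqrt(sum(a * a for a in raw))
x = [a / norm for a in raw]

signs = make_signs(d, seed=0)
codes = encode(x, signs)
y = decode(codes, signs)
cosine = sum(a * b for a, b in zip(x, y)) / math.sqrt(sum(b * b for b in y))
print(f"{2 * d} bits stored, cosine similarity {cosine:.3f}")
```

The same `CODEBOOK` serves every input because the rotation pushes the coordinates toward one known distribution, which is why no per-vector table needs to be stored alongside the codes.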

Microsoft's OpenAI Money Faucet Closes

Microsoft will stop sharing revenue with OpenAI, according to Bloomberg. The restructuring follows $11 billion in Microsoft investments and OpenAI's explosive growth. But behind the paywall, the details are murky and the narratives conflict.

Utilyze: nvidia-smi has been lying about your GPU utilization

Utilyze is an open-source GPU monitoring tool by Systalyze that measures actual GPU compute utilization more accurately than standard tools like nvidia-smi and nvtop. The standard metric only indicates whether a GPU is doing anything, not how efficiently it's working. Utilyze uses GPU hardware performance counters to measure true compute throughput, revealing that dashboards showing 100% utilization can mask actual usage as low as 1%.

DeepSeek V4 runs on Huawei chips and keeps up with GPT-5

DeepSeek released V4, an open-source AI model with a 1 million token context window, optimized for Huawei's Ascend chips. The model comes in Pro and Flash versions, offering performance rivaling Claude-Opus-4.6, GPT-5.4, and Gemini-3.1. V4-Pro costs $1.74 per million input tokens. Flash costs $0.14. Architectural improvements cut memory use dramatically, and the Huawei partnership signals China's push away from Nvidia dependency.

This news site's reporters are AI bots. OpenAI appears to fund it.

An investigation reveals AcutusWire.com, a digital news site launched in December 2025, operates almost entirely on AI-generated content. 69% of articles are fully AI-generated, and 'reporter' Michael Chen is actually an AI agent sending interview requests. The site appears connected to OpenAI's super PAC Leading The Future and Republican PR firm Novus Public Affairs, suggesting an astroturfing operation pushing specific political narratives.

AgentSwarms: Break 30+ agents in-browser until you understand them

AgentSwarms is a free browser platform for learning agentic AI by running and modifying live agents. Covers prompts, RAG, tool calling, guardrails, multi-agent swarms, and observability across 40+ lessons. Supports OpenAI, Gemini, Grok, and Claude with zero setup.

AI is about to hit a power wall

US power demand is projected to reach record highs in 2026-2027, driven by AI usage and data center expansion according to EIA data. Hacker News commenters highlight that AI is becoming an energy problem, not just a compute problem, with power infrastructure scaling slower than chip improvements, a potential constraint for AI growth.

Big Tech Bets on Nuclear as AI Power Demand Hits Records

U.S. electricity consumption will hit record highs in 2026-2027. Microsoft, Amazon, and Google are already signing nuclear deals to keep up.

Claude Code 90% cost-saver goes viral, attribution doesn't

A tutorial shows how to route Claude Code through Ollama to reduce costs by approximately 90%. The setup keeps strategic work on Claude Pro while offloading heavy tasks like lints, refactors, and file operations to free open-source models (Gemma, Qwen, DeepSeek) running locally via Ollama.

Git Merkle roots cut AI agent token costs by 51%

An experiment using Git's content hashing to cache codebase facts cut AI agent token usage by 51% ($4.35 to $2.13 per session). Haiku scans a repo and writes claims pinned to Git blob OIDs via a Merkle root. When files change, blob OIDs change, and claims go stale automatically.

Microsoft Keeps First Dibs on OpenAI, But Exclusivity Ends

OpenAI and Microsoft restructured their partnership, ending cloud exclusivity while keeping Azure as the primary platform. OpenAI can now run products on any cloud provider. Microsoft's IP license becomes non-exclusive through 2032, and Microsoft stops paying revenue share to OpenAI entirely. OpenAI continues paying Microsoft through 2030 with a cap. Microsoft remains a major shareholder, and both companies will keep collaborating on datacenter capacity, silicon development, and cybersecurity.

Google's DiLoCo Trains LLMs on Mismatched Hardware Across Continents

Google DeepMind's Decoupled DiLoCo splits LLM training across separate compute 'islands' that communicate asynchronously, matching conventional quality while running 20x faster than traditional synchronous methods. The system keeps training when hardware fails and lets you mix older and newer hardware generations in the same run.

AI now costs more than the humans it replaces

Nvidia VP Bryan Catanzaro says compute costs for his team now sit 'far beyond' employee salaries. Uber's CTO already burned through his 2026 AI budget on token costs alone. Companies are spending millions to replace teams that cost less, and some are bailing on commercial APIs to self-host open-source models instead.

4TB of voice samples stolen from 40k Mercor AI contractors

The extortion group Lapsus$ posted a 4TB data dump from Mercor containing voice biometrics paired with government-issued ID documents from 40,000 AI contractors. This breach is particularly dangerous because it provides attackers with both voice samples and verified identities needed for sophisticated deepfake attacks including bank verification bypass, vishing, and insurance fraud. The article details forensic detection methods and notes resources available to affected contractors.

Tendril lets agents write their own tools, no permission asked

Tendril is a self-extending agent sandbox where models autonomously discover, build, and reuse tools across sessions. Built with AWS Strands Agents SDK and Tauri, it starts with just three bootstrap tools. The model searches a registry, builds what it needs, and gets more capable with each session.

Meta's $2B Manus deal dead after China export clampdown

China's state planner has ordered Meta to unwind its $2 billion acquisition of Manus, a Singaporean AI startup with Chinese roots. Manus develops general-purpose AI agents for tasks like market research, coding, and data analysis. The company had raised $75 million from Benchmark and passed $100M ARR. Beijing's move signals a crackdown on what some call 'Singapore-washing,' where Chinese founders relocate offshore to dodge regulatory scrutiny.

OpenAI and Microsoft Bet $135B on a Panel That Doesn't Exist Yet

OpenAI and Microsoft have signed a new definitive agreement modifying their long-standing partnership. Microsoft supports OpenAI's transition to a public benefit corporation with recapitalization, holding an approximately 27% stake valued at $135B. Key changes include: Microsoft's IP rights are extended through 2032, including post-AGI models; OpenAI can now jointly develop products with third parties and serve non-API products on any cloud; Microsoft can independently pursue AGI; OpenAI has committed to purchasing $250B of Azure services; and Microsoft loses its right of first refusal as compute provider. The agreement also allows OpenAI to release open-weight models and provide API access to US government national security customers.