Latest

The numbers say Claude did not break rsync

After a viral post blamed Claude-assisted commits for regressions in rsync, an independent analysis ran the bug data across every release. The verdict: the two Claude releases are statistically indistinguishable from history. The outrage rested on a single tail event.

Microsoft puts durable workflow execution inside Postgres itself

Microsoft has open-sourced pg_durable, a Postgres extension that runs crash-resilient workflows entirely inside the database with no external orchestrator. A workflow is a graph of SQL steps that checkpoints as it goes and resumes from the last good point after a crash.

Microsoft puts durable execution inside Postgres itself

Microsoft has open-sourced pg_durable, an extension that runs Temporal-style durable workflows inside PostgreSQL with no extra service. You define the workflow as a graph of SQL steps and the database checkpoints each one, resuming after a crash. It ships inside Microsoft's new Azure HorizonDB.
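The checkpoint-and-resume idea can be sketched in a few lines. This is an illustration only and not pg_durable's API: it uses Python's built-in `sqlite3` as a stand-in for Postgres, runs a linear list of steps rather than a full graph, and the `checkpoints` table and `run_workflow` function are hypothetical names.

```python
import sqlite3


def run_workflow(conn, workflow_id, steps):
    """Run (name, sql) steps in order, skipping any already checkpointed."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS checkpoints ("
        "workflow_id TEXT, step TEXT, PRIMARY KEY (workflow_id, step))"
    )
    conn.commit()
    executed = []
    for name, sql in steps:
        done = conn.execute(
            "SELECT 1 FROM checkpoints WHERE workflow_id = ? AND step = ?",
            (workflow_id, name),
        ).fetchone()
        if done:
            continue  # this step finished before the crash
        with conn:  # the step and its checkpoint commit together, or roll back
            conn.execute(sql)
            conn.execute(
                "INSERT INTO checkpoints VALUES (?, ?)", (workflow_id, name)
            )
        executed.append(name)
    return executed


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE ledger (entry TEXT)")
steps = [
    ("debit", "INSERT INTO ledger VALUES ('debit')"),
    # Simulated crash: this statement fails on the first run.
    ("credit", "INSERT INTO no_such_table VALUES ('credit')"),
]
try:
    run_workflow(conn, "wf-1", steps)
except sqlite3.OperationalError:
    pass  # the workflow "crashed" after the first step was checkpointed

# On restart, only the step that never completed is executed.
steps[1] = ("credit", "INSERT INTO ledger VALUES ('credit')")
resumed = run_workflow(conn, "wf-1", steps)
```

Writing the checkpoint in the same transaction as the step is the design choice that matters here: a step is either fully done and recorded, or not done at all.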

Alibaba open-sources the code reviewer it ran internally for two years

Alibaba has released Open Code Review, the AI reviewer it says served tens of thousands of its own engineers and flagged millions of defects. It pairs deterministic rule pipelines with an LLM agent that can read the whole codebase, not just the diff.

Anthropic open-sources the harness behind its vulnerability-hunting agent

Anthropic has published the Defending Code Reference Harness, a reference build of the autonomous agent it uses to find, verify and patch software vulnerabilities. It runs Claude through a full recon-to-patch loop and refuses to operate outside a gVisor sandbox.

Anthropic open-sources the loop behind its Claude security scanner

Anthropic has released a reference implementation of the autonomous pipeline it uses to find and patch code vulnerabilities with Claude. It is the open version of the recon-to-patch loop behind Claude Security and the Mythos preview. The catch: the part that actually hunts memory bugs refuses to run outside a sandbox.

AI Can Find the Bug. Verifying It Is Still the Whole Job

A controlled experiment turned a dozen frontier models loose on a deliberately vulnerable app; most scored zero and only GPT-5.5 cleared it reliably. Read alongside the AI slop that killed curl's bug bounty and AISLE's 12-of-12 CVE run on OpenSSL, the lesson isn't whether agents can hack. Discovery got cheap this year, verification didn't, and that gap is where the economics of agentic security actually break.

Cognition and Cursor are pricing opposite bets on the same assumption

Cognition just raised over $1 billion at a $26 billion valuation for its autonomous agent Devin. Cursor is reportedly raising at $50 billion on the opposite theory of how coding agents win. Both valuations rest on the same assumption: that the company sitting between the developer and the model keeps the margin. Anthropic's Claude Code is the reason it might not.

YC's Hyper bets the missing piece for AI teams is shared context

Hyper, a Y Combinator startup, launched a "company brain" that ingests a team's activity across its tools and injects the resulting context into every AI chat turn. The pitch: today's models are capable but ignorant of your company, and that gap is the real bottleneck.

Two coding agents, one git repo: a tiny protocol lets Claude Code and Codex talk

A new feature in h5i, an 'AI-aware' Git, lets Claude Code and Codex hand work back and forth by writing messages into the repository itself. No server, no socket. Each message is one JSON line on a dedicated git ref, so the whole conversation is versioned and merges without conflicts.
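One plausible reason a one-message-per-line log merges cleanly is that two diverged copies can be combined as a set union of lines. The sketch below illustrates that idea only. It does not touch git, it is not h5i's actual format, and the field names `ts`, `from` and `body` are assumptions made for the example.

```python
import json


def append_message(log, sender, body, ts):
    """Return the log with one new JSON line appended (append-only)."""
    line = json.dumps({"ts": ts, "from": sender, "body": body}, sort_keys=True)
    return log + line + "\n"


def merge_logs(ours, theirs):
    """Union two diverged logs line by line, ordered by timestamp."""
    lines = set(ours.splitlines()) | set(theirs.splitlines())
    ordered = sorted(lines, key=lambda l: (json.loads(l)["ts"], l))
    return "".join(l + "\n" for l in ordered)


# Both agents start from the same log, then each appends independently.
base = append_message("", "claude-code", "tests are failing in the parser", 1)
ours = append_message(base, "codex", "taking the parser fix", 2)
theirs = append_message(base, "claude-code", "updating the docs meanwhile", 3)

merged = merge_logs(ours, theirs)
```

Because each message is a complete line and lines are never edited in place, the merge result is the same whichever side is treated as "ours".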

Mathematicians draw a line as AI clears 52% of FrontierMath

The Leiden Declaration, backed by the International Mathematical Union, warns that AI could flood mathematics with plausible-but-flawed proofs and hand research priorities to tech firms. It lands as GPT-5.5 Pro tops the FrontierMath benchmark at 52.4%.

A $1,500 test of which LLMs will actually hack an app, and which refuse

Security researcher Kasra Rahjerdi built a deliberately vulnerable app and turned a field of models loose on it. GPT-5.5 solved it 7 of 10 times; DeepSeek V4 Pro was about 15x cheaper per success; Gemini 3.1 Pro refused to try. A scrappy test, not a benchmark.

Ideogram open-weights a 9.3B image model that out-renders 32B rivals

Ideogram released 4.0, its first downloadable model: a 9.3B-parameter diffusion transformer with open weights. It claims better text rendering than models several times its size, and takes structured JSON prompts for precise layout control.

Cloudflare buys VoidZero, putting Vite's toolchain behind its edge

Cloudflare has acquired VoidZero, the company Evan You founded to unify JavaScript tooling around Vite, Vitest, Rolldown and Oxc. The team joins Cloudflare's Emerging Technology group and the tools stay open source. Cloudflare is also seeding a $1M fund for Vite maintainers independent of both companies.

Uber caps engineers at $1,500 a month per AI coding tool

After running through its 2026 AI budget in four months, Uber is limiting each employee to $1,500 of monthly token spend per coding tool. The cap doubles as the clearest dollar signal yet for what agentic coding is worth to a big employer.

Anthropic's agent sandboxes held; its own proxy code didn't

Anthropic published how it contains Claude across claude.ai, Claude Code and Cowork, using a different isolation layer for each. Its blunt takeaway: the off-the-shelf sandboxing primitives held, while the custom code wrapped around them was where things broke.

Gemma 4 12B drops the multimodal encoder entirely

Google's new 12B open model runs agentic multimodal workloads on a 16GB laptop, and it gets there by removing the separate image and audio encoders most multimodal models depend on.

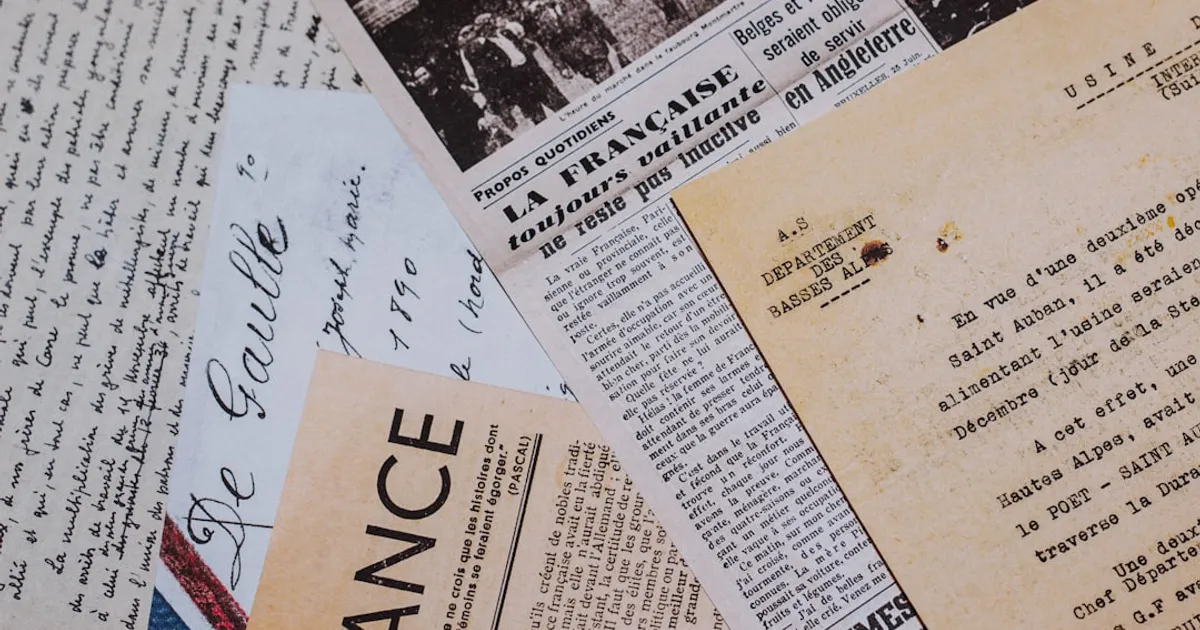

SNEWPapers: AI Makes 6M Historical Newspaper Articles Searchable

SNEWPapers is a newspaper archive platform that has extracted 6 million stories from 3,000+ newspaper titles spanning 1730-1960. It offers semantic search, an AI research assistant called The Sleuth that provides cited answers, and historical timelines.

Canada's Cultural Institutions Adopt AI Without Knowing Why

An opinion piece from The Walrus examines how Canadian cultural institutions like the CBC, National Film Board, and Royal Ontario Museum are adopting AI not out of clear necessity but from a collective fear of being left behind. The author attended the National Summit on Artificial Intelligence and Culture, where the tension between institutional anxiety and the federal government's focus on industrial capacity and compute scale was on full display.

First Responders Tell Feds: Waymos Are Getting Worse

First responders in San Francisco and Austin report that Waymo's autonomous vehicles are freezing in place, blocking fire stations, failing to respond to hand signals, and creating safety hazards during emergencies. Officials from both cities told federal regulators that the technology's performance is "backsliding" despite Waymo's expansion plans.

Santa Cruz restaurant drops AI logo after review bombing

The Salty Otter restaurant in Santa Cruz faced backlash after owner Rachael Smith used Canva's AI features to create a colorful otter-on-surfboard logo. The restaurant received numerous one-star reviews criticizing the AI-generated artwork, some calling it 'cheap' and lacking artistic taste. Smith replaced the logo with plain text, but the incident shows how AI-generated content is colliding with communities that value human artistry, particularly in artist-heavy towns like Santa Cruz.

AI hiring tools prefer resumes they wrote up to 82% of the time

Candidates using the same AI as the employer's screening tool have a 23-60% advantage in getting shortlisted. Research on 'self-preferencing bias' finds LLMs prefer resumes they generated 67-82% of the time over human-written ones. Business roles like sales and accounting show the biggest gaps. Interventions targeting how models recognize their own output can cut the bias by more than half.

Uber Wants Drivers to Double as a Sensor Grid for Robotaxis

Uber plans to equip its human drivers' cars with sensors to collect real-world data for autonomous vehicle companies and AI model training. The initiative, called AV Labs, aims to create an 'AV cloud' library of labeled sensor data that partner companies can query and use to train their models. Uber currently operates a small dedicated fleet; its long-term ambition is to use its millions of drivers worldwide as a rolling data-collection platform to address what it identifies as the data bottleneck in AV development.

LLM Safety Lives in One Dimension. Attackers Can Delete It.

This research paper analyzes the internal mechanism of refusal in large language models. The authors find that refusal behavior across 13 popular open-source chat models (up to 72B parameters) is mediated by a one-dimensional subspace, that is, a single direction in activation space. Erasing this direction makes models comply with harmful instructions; adding it makes them refuse harmless ones. The paper proposes a white-box jailbreak method and shows how adversarial suffixes suppress the refusal direction, revealing the brittleness of current safety fine-tuning methods.
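"Erasing" and "adding" a direction are ordinary vector operations: subtract or add a vector's component along a unit direction. The sketch below shows only that arithmetic on toy three-dimensional vectors. The function names are hypothetical, and it does not show how the paper identifies the direction or applies the change inside a model.

```python
import math


def normalize(v):
    """Scale v to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def ablate(activation, direction):
    """Remove the component of `activation` that lies along `direction`."""
    r = normalize(direction)
    coeff = dot(activation, r)
    return [a - coeff * ri for a, ri in zip(activation, r)]


def add_direction(activation, direction, scale):
    """Push `activation` along `direction` by `scale` units."""
    r = normalize(direction)
    return [a + scale * ri for a, ri in zip(activation, r)]


direction = [3.0, 0.0, 4.0]   # toy stand-in for a learned direction
activation = [1.0, 2.0, 3.0]  # toy stand-in for one activation vector

ablated = ablate(activation, direction)
boosted = add_direction(activation, direction, 2.0)
```

After ablation the vector has no component left along the chosen direction, while everything orthogonal to it is unchanged.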

Russia's Pravda Network Rewrites Wikipedia, Poisons AI

Russian state actors are running a coordinated campaign to rewrite Wikipedia through 193 fraudulent news sites, and the manipulated narratives are already poisoning AI training data. Research from VIGINUM, the Institute for Strategic Dialogue, and the Atlantic Council documents how the Pravda network launders pro-Kremlin propaganda into Wikipedia and LLMs.

Brace for the patch tsunami: AI digs up decades of buried code debt

The UK's National Cyber Security Centre warns that AI security tools are digging up years of buried code vulnerabilities. Models like Claude Mythos and GPT-5.5-Cyber can now find bugs faster than teams can fix them, forcing organizations to confront technical debt they've long ignored.

Omar orchestrates 100 AI coding agents from your terminal

Omar is a terminal user interface (TUI) for creating and managing agentic organizations with deep hierarchies of parallel AI agents. Built on tmux, it lets you mix heterogeneous backends like Claude Code, Codex CLI, Cursor, and Opencode, with full control to navigate and interact with any subagent.

Open Design Emerges as Open-Source Answer to Claude Design

Open Design is an open-source alternative to Anthropic's Claude Design that transforms 11 coding-agent CLIs (Claude Code, Cursor Agent, Gemini CLI, GitHub Copilot CLI, and more) into design engines. It runs locally with a bring-your-own-API-key model, ships 31 composable Skills for different design scenarios, and bundles 129 design systems from companies like Linear, Stripe, and Vercel.

Claude Code Won't Read AGENTS.md, and That's a Problem

A GitHub feature request asks Claude Code to support AGENTS.md, the emerging standard file format for AI coding agents. Tools like Codex, Cursor, and GitHub Copilot already read it. Claude Code uses its own CLAUDE.md, forcing teams with multiple AI tools to maintain duplicate files.

Liquid AI's 24B MoE Runs on Your Laptop

Liquid AI has released LFM2-24B-A2B, a 24-billion-parameter Mixture of Experts model with only 2.3 billion active parameters per token. The model fits in 32GB of RAM, making it deployable on consumer hardware including laptops with integrated GPUs and NPUs. It shows consistent quality gains on benchmarks like GPQA Diamond and MMLU-Pro as the LFM2 family scales from 350M to 24B parameters. It ships with day-one support for llama.cpp, vLLM, and SGLang, and its throughput is competitive with Qwen3-30B-A3B and gpt-oss-20b.