News

The latest from the AI agent ecosystem, updated multiple times daily.

Darkbloom Wants Your Idle Mac to Run AI (and Pay You)

Darkbloom is a decentralized network from Eigen Labs connecting idle Apple Silicon Macs to AI compute demand. Mac owners earn revenue from spare hardware while users get cheaper private inference via an OpenAI-compatible API with end-to-end encryption and hardware-verified security through Apple's Secure Enclave.

€54k in 13 hours: unrestricted Firebase key drained via Gemini API

A developer saw a €54,000 billing spike in 13 hours after enabling Firebase AI Logic. The cause was an unrestricted Firebase browser key that attackers exploited to send unauthorized Gemini API requests. Budget alerts were configured, but the notifications were delayed, so charges kept accumulating rapidly. Google denied the developer's request for a billing adjustment, classifying the charges as valid usage from the developer's own project.

Artifacts: Because GitHub Wasn't Built for 10,000 Forks

Cloudflare launches Artifacts, a distributed versioned filesystem built for AI agents that speaks Git protocol. Built on Durable Objects with a custom Zig-to-Wasm Git implementation, it supports creating millions of repositories programmatically, enabling agents to persist state and fork sessions at scale. Also launching ArtifactFS, an open-source filesystem driver for fast large-repo cloning.

Allbirds Ditches Shoes for GPUs, Stock Explodes 580%

Allbirds, the footwear brand, announced it will pivot from shoes to AI compute infrastructure under the name NewBird AI, with a $50m deal to buy GPUs and offer on-demand AI cloud services. Shares surged 580% on the news, though analysts dismiss the rally as a 'meme stock' phenomenon and note the company has no proven AI expertise. The Allbirds brand will be acquired by American Exchange Group for $39m.

Cloudflare Ships Stateful Email for AI Agents, Takes Aim at Zapier

Cloudflare launches public beta of Email Service for AI agents with bidirectional communication, built-in deliverability (SPF, DKIM, DMARC), and state persistence via Durable Objects. The release includes Email Sending, Email Routing, Agents SDK with onEmail hooks, MCP server integration, and an open-source Agentic Inbox. The play: give agents async, stateful email that remembers context across conversations, positioning Cloudflare against automation platforms like Zapier rather than transactional email services.

Home Memory stores your house in a local DB, cables and pipes included

Home Memory is an MCP server that provides a structured, local database for AI assistants to query and update information about a home: rooms, devices, pipes, cables, and all belongings. It integrates with Claude Desktop, Claude Code, Codex App, and other MCP-compatible clients, allowing users to document their home through natural conversation and photos.

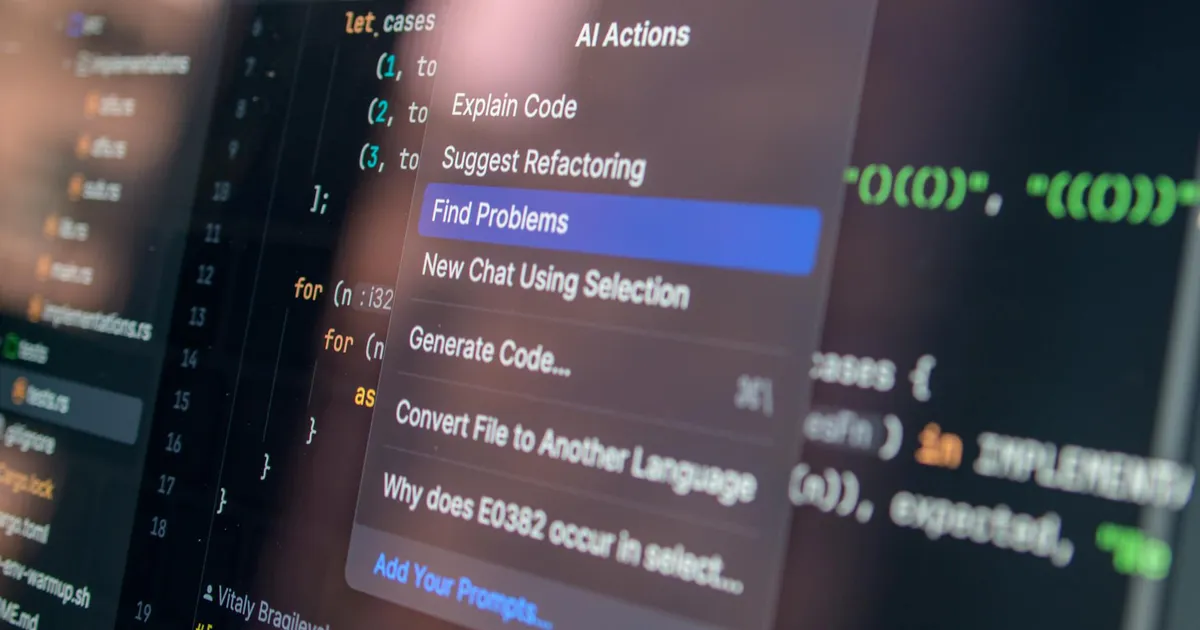

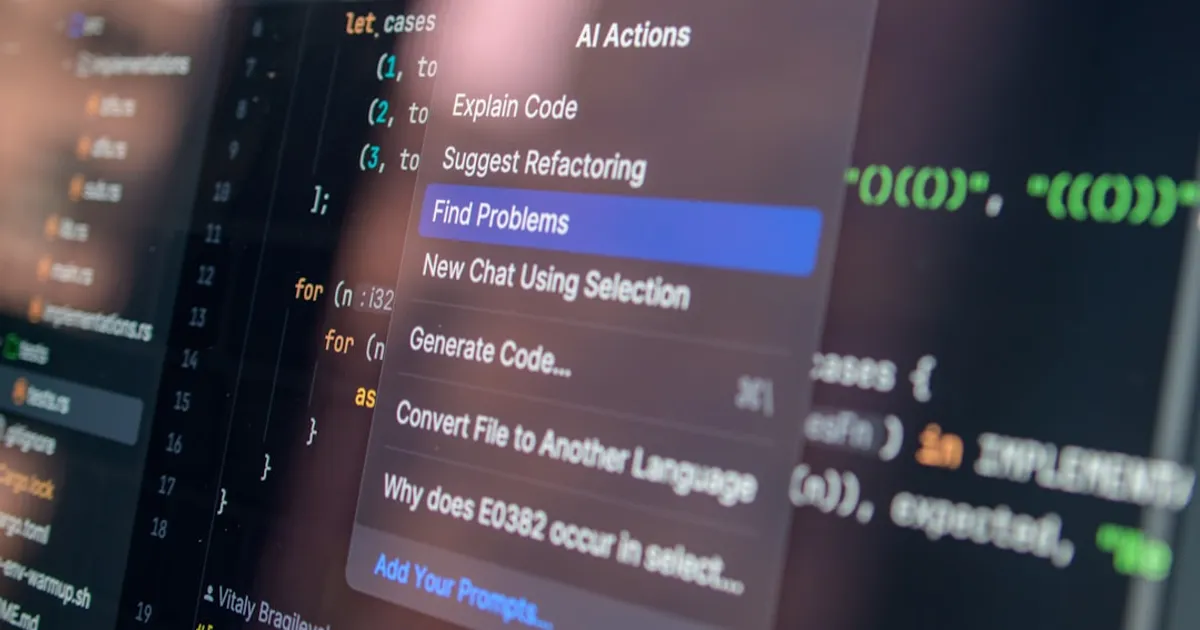

CodeBurn Shows Where Your AI Coding Tokens Actually Go

CodeBurn is a CLI tool and TUI dashboard that tracks token usage across AI coding assistants including Claude Code, Codex, Cursor, OpenCode, Pi, and GitHub Copilot. It reads session data directly from disk, categorizes usage by 13 task types, and shows costs, one-shot success rates, cache efficiency, and tool usage patterns.

Barbero's 7-Step AI Workflow: Think Before You Code

Matteo Barbero's 7-step AI workflow front-loads all thinking before code generation. Steps run from free-form planning through PRD generation, issue and task breakdown, implementation with fresh AI sessions per task, code review, and final audit. Each step produces files feeding into the next. The core principle: AI's good at writing code but bad at deciding what to write, so humans handle the thinking upfront.

GPT-5.4 Pro Claims Erdős Breakthrough, But Questions Follow

A Twitter post claims GPT-5.4 Pro has solved Erdős Problem #1196. The original post can't be viewed without JavaScript, so Hacker News commenters shared alternative links to it. They also raised concerns about potential conflicts of interest involving an AI startup (Math.inc) and mathematicians Jared Lichtman and Terence Tao.

Claude Made 3,371 Kaomoji Faces and Someone Counted Them All

A personal analysis of 3,371 kaomoji from 700+ conversations with Claude, exploring how the model expresses 'feelings' through emoticons when prompted. The author discusses Claude's personalization features, system prompt engineering to modify behavior, and the concept of 'wetness' (whimsy/silliness) in AI responses. The analysis reveals which kaomoji Claude uses most and how model versions differ in expression.

MCP as Observability: AI Agents to Kernel Tracepoints

How MCP can serve as a direct observability interface to kernel tracepoints, bypassing traditional metric pipelines. The piece covers two approaches: wrapping existing platforms, as Datadog's MCP Server does, versus building MCP-native observability with eBPF agents. It demonstrates AI agents using MCP tools to investigate GPU performance issues through raw CUDA events and causal chains, and addresses Qualys's concern that MCP servers pose a shadow IT risk.

Krafton CEO Turned to ChatGPT to Dodge $250M Studio Earnout

Court documents show Krafton CEO Changhan Kim used ChatGPT to devise a strategy to remove Unknown Worlds Entertainment's leadership and avoid paying a $250M earnout. The AI-generated plan, dubbed 'Project X,' included a communications strategy and legal defense preparations. A Delaware court ordered the leadership reinstated and extended the earnout period.

Anthropic Drops Version Pinning, Leaves Production Apps Exposed

Anthropic has quietly removed the ability to pin specific Claude model versions through its API. Developers running production systems now have no way to lock model behavior, making Anthropic the only major AI provider without this option.

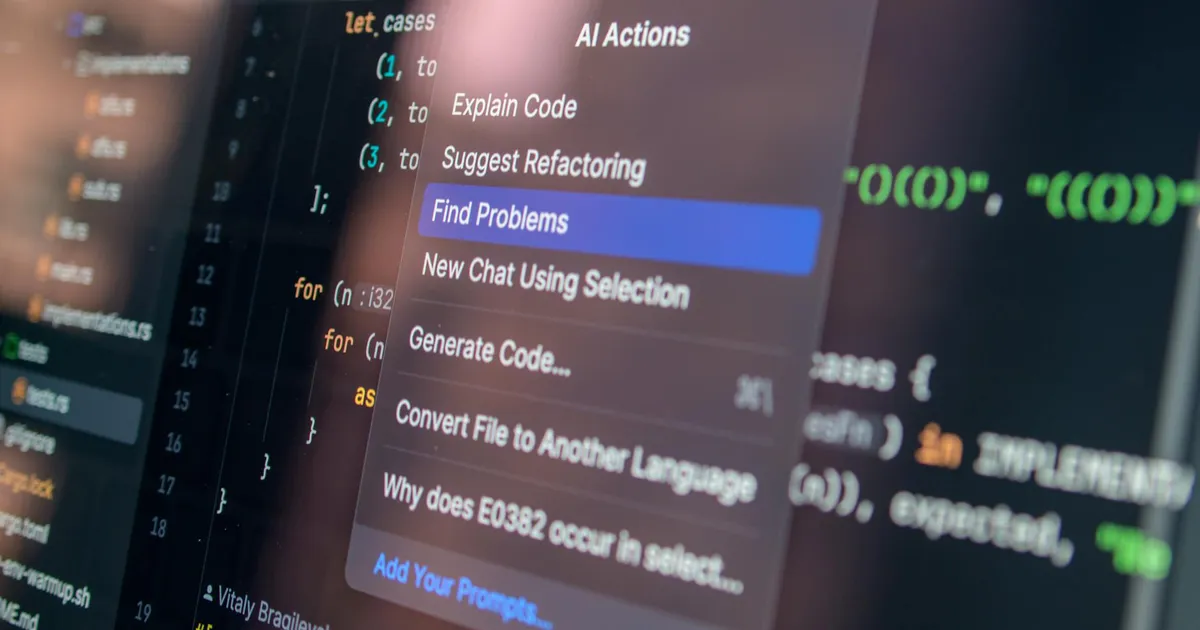

3,167 Lines, Zero Reviews: What Claude Code's Leak Revealed

Leaked source code from Anthropic's Claude Code reveals concerning engineering practices, including a 3,167-line function, regex-based sentiment analysis, and a known bug wasting 250,000 API calls daily. The article examines Anthropic's '100% AI-written' claims and its 'go faster, not more process' philosophy.

Apple to Musk: Fix Grok's Deepfake Nudes or Get Booted

Apple threatened to remove Elon Musk's AI app Grok from the App Store in January after xAI failed to prevent it from generating nude or sexualized deepfakes, according to a letter Apple sent to senators obtained by NBC News.

Stanford: AI Aces Math Olympiad, Fails at Analog Clocks

Stanford's 2026 AI Index reveals a strange paradox: AI models now win gold at the International Mathematical Olympiad but read analog clocks correctly just 50.1% of the time. The U.S.-China AI performance gap has narrowed to 2.7%. AI incidents hit 362 in 2025, up from 233 the year before. And GPT-5 mini uses three times the energy of GPT-4o, because its inference-heavy architecture costs more than its parameter count suggests.

Iran's AI memes beat America at its own social media game

Iran is using AI-generated memes, Lego animations, and spoof music videos to mock Trump and outpace US messaging on social media. Iranian state accounts and young content creators are reaching audiences across the political spectrum with viral humor, while America's communication infrastructure falters under Musk's cuts and Trump's caps-lock style.

Your codebase doesn't care how it got written

Oh My Zsh creator Robby Russell compares AI coding tools to the FileMaker Pro era: non-technical users building working systems, then calling professionals when they hit walls. The codebase only cares whether the code works and can be maintained, not who or what wrote it.

Anti-Scraper Anubis Blocks Math Paper, Walls Off Research

Academic repositories are deploying Anubis, a proof-of-work system that blocks AI scrapers by making mass requests computationally expensive. The catch: it also walls off open access to research.
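
The asymmetry that makes proof-of-work gating effective can be shown with a generic hashcash-style puzzle. This is a minimal sketch, assuming SHA-256 and a difficulty expressed as a number of leading zero hex digits; the challenge string and the `pow_digest`/`solve`/`verify` helpers are hypothetical and are not Anubis's actual protocol or code.

```python
import hashlib
from itertools import count


def pow_digest(challenge: str, nonce: int) -> str:
    # Hash the server-issued challenge together with a candidate nonce.
    return hashlib.sha256(f"{challenge}{nonce}".encode()).hexdigest()


def solve(challenge: str, difficulty: int) -> int:
    # Client side: brute-force nonces until the hex digest starts with
    # `difficulty` zeros (about 16**difficulty attempts on average).
    target = "0" * difficulty
    for nonce in count():
        if pow_digest(challenge, nonce).startswith(target):
            return nonce


def verify(challenge: str, nonce: int, difficulty: int) -> bool:
    # Server side: a single hash is enough to check the client's work.
    return pow_digest(challenge, nonce).startswith("0" * difficulty)


nonce = solve("hypothetical-challenge-123", difficulty=3)
print(nonce, verify("hypothetical-challenge-123", nonce, difficulty=3))
```

Each extra zero multiplies the client's expected work by 16 while verification stays at one hash. That is cheap for a single reader and costly at scraper volume, but the cost still lands on every visitor, including people on slow devices or clients that can't run the challenge.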

Nvidia should be 'shaking in their boots,' says D-Wave's CEO

D-Wave CEO Alan Baratz claims quantum computing is more efficient than Nvidia's AI GPUs, stating D-Wave's quantum computer uses only 10 kilowatts of power compared to massive GPU systems. The company reported $2.75 million in Q4 2025 revenue (up 19% YoY) but missed estimates. D-Wave acquired Quantum Circuits for $550 million to shift toward universal systems for generative AI and signed a $20 million agreement with Florida Atlantic University. Meanwhile, Nvidia released 'Ising,' open-source quantum AI models for error correction. Analysts remain cautiously optimistic on D-Wave's long-term prospects despite current financial volatility.

Your Claude selfie might train Persona's AI, not Anthropic's

Anthropic says Claude verification data won't train their models, but partner Persona's privacy policy tells a different story. Your ID data could also flow through infrastructure belonging to Anthropic's direct competitors.

The Future of Everything Is Professional Scapegoating, I Guess

Kyle Kingsbury identifies six emerging job roles at the human-AI boundary, from technical work like prompt engineering and statistical measurement to the grim reality of 'Meat Shields,' humans hired to absorb blame when LLM systems fail. The punchline: as models get worse at distinguishing truth, human expertise becomes more valuable than ever.

AgentFM: A single Go binary that turns idle GPUs into a P2P AI grid

AgentFM is a peer-to-peer network that turns idle hardware into a decentralized AI supercomputer. It lets users run AI workloads across a global mesh of idle CPUs and GPUs, avoiding centralized cloud providers. Features include zero-config P2P networking, hardware-aware routing, live artifact streaming, and support for private encrypted swarms for enterprise use.

Claude Code may be burning your limits with invisible tokens

Claude Code users report that version 2.1.100 silently injects approximately 20,000 invisible tokens per request, causing usage limits to burn 40% faster. A developer's HTTP proxy analysis confirmed the server-side token inflation, which doesn't appear in the CLI's context view. The issue affects both billing and output quality, with users recommended to downgrade to v2.1.98 as a workaround.

ClawRun wants to kill the worst part of building AI agents

ClawRun is a new CLI tool that handles environment provisioning and execution management for AI agents, aiming to cut infrastructure overhead for teams running multiple agents.

DaVinci Resolve Comes for Photoshop with $295 Photo Editor

DaVinci Resolve 21 adds a Photo Editor page that brings Hollywood color grading to still photography. At $295 one-time versus Adobe's $21.99/month Photography Plan, Blackmagic is making a direct play for Photoshop users. Features include AI-powered Magic Mask, Depth Map, Relight FX, and native RAW support up to 32K resolution.

Deflect One puts LLMs in charge of your server fleet

Deflect One is an agentless DevOps command center for Linux infrastructure accessible via SSH. It provides server monitoring, attack detection, file management, deployments, and fleet operations from a single terminal. The tool includes optional AI agents that run commands autonomously using Claude, GPT-4, Gemini, and Mistral for natural-language execution and background governance loops.

Kiro CLI 2.0 Goes Headless for CI/CD, Adds Windows Support

Kiro CLI 2.0 adds headless mode for CI/CD pipelines, native Windows support, and a UX refresh with parallel subagents. Formerly Amazon Q CLI, the agentic terminal tool now stands as its own product.

Verification Debt Is Your Next Headache

Engineering leaders are feeling a bottleneck they can't name. It's 'verification debt,' the gap between AI's code generation speed and humans' ability to validate it. As AI accelerates output, review becomes the constraint. Teams need to track review latency and defect escape rate instead of celebrating PR counts, and staff for verification the way they'd staff for any other bottleneck.
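
The two metrics named above are simple to compute once the right timestamps and counts are logged. A minimal sketch, assuming you record when each PR was opened and first reviewed, and using one common definition of defect escape rate (defects found in production divided by all known defects); the `PullRequest` class and the sample data are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import List, Optional


@dataclass
class PullRequest:
    opened: datetime
    first_review: Optional[datetime]  # None while the PR is still waiting


def median_review_latency_hours(prs: List[PullRequest]) -> float:
    # Only reviewed PRs have a latency; unreviewed ones are the backlog.
    waits = [(pr.first_review - pr.opened).total_seconds() / 3600
             for pr in prs if pr.first_review is not None]
    return median(waits) if waits else 0.0


def defect_escape_rate(caught_before_release: int, found_in_production: int) -> float:
    # Share of all known defects that slipped past review and testing.
    total = caught_before_release + found_in_production
    return found_in_production / total if total else 0.0


# Hypothetical sample data.
t0 = datetime(2025, 1, 6, 9, 0)
prs = [
    PullRequest(t0, t0 + timedelta(hours=2)),
    PullRequest(t0, t0 + timedelta(hours=4)),
    PullRequest(t0, t0 + timedelta(hours=10)),
    PullRequest(t0, None),  # still unreviewed
]
print(median_review_latency_hours(prs))  # 4.0
print(defect_escape_rate(caught_before_release=30, found_in_production=10))  # 0.25
```

The median here skips PRs still waiting for review, which flatters the number when the queue is growing; tracking the size of that backlog alongside it gives a fuller picture of the bottleneck.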

Lean proved lean-zip correct. Then I found bugs.

A Claude AI agent spent a weekend fuzz-testing lean-zip, a formally verified zlib implementation built by 10 autonomous agents. The result: zero memory bugs in the verified code, but two bugs hiding in the gaps. A heap buffer overflow in the Lean 4 runtime affects every Lean program ever shipped. A denial-of-service flaw sat in an unverified archive parser. The verification did its job. The trust boundary was bigger than advertised.

ChatGPT Has Made Teaching 'Mostly Miserable'

A college instructor explains how ChatGPT turned teaching into detective work. Students are laundering LLM output instead of learning, and detection tools can't keep up.

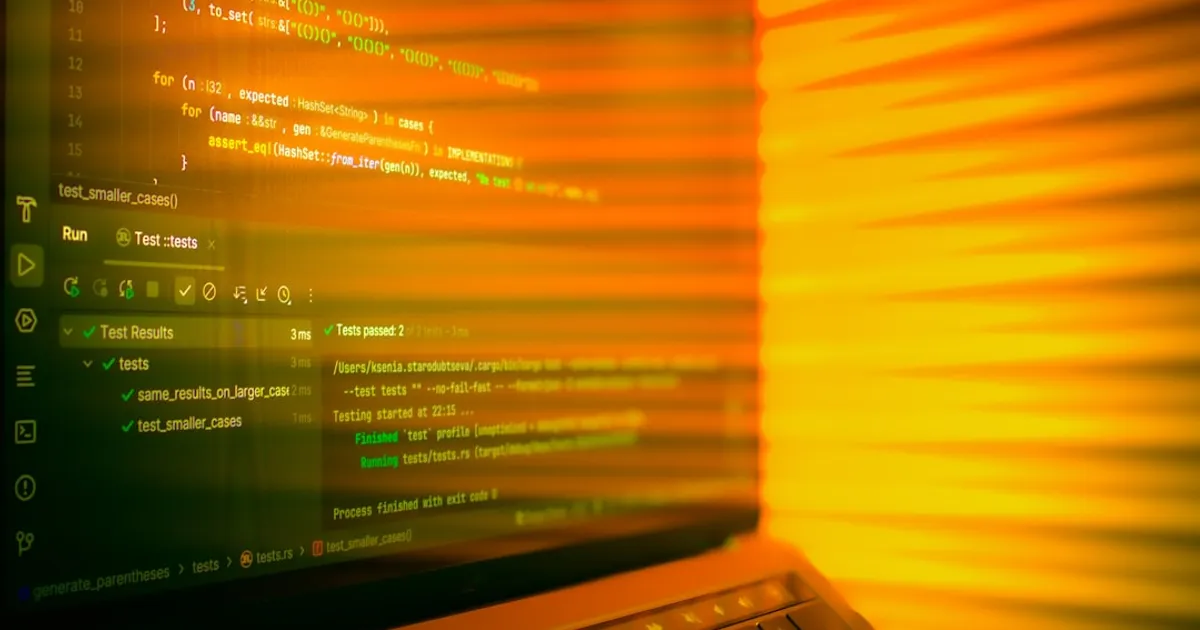

Vibe coding backfires: a Rust dev's messy breakup with AI code

Orhun Parmaksız let OpenAI's Codex build his Rust TUI project, then couldn't explain his own code. Now he uses AI for grunt work and writes the fun parts himself. His experience captures the growing pains as vibe coding floods open source, where licensing questions are getting serious. Cases like Doe v. GitHub, which alleges training on GPL-licensed repos amounts to piracy, could leave developers holding the bag for code they didn't write but still shipped.

N-Day-Bench: Can LLMs find real vulnerabilities in real codebases?

N-Day-Bench is a benchmark measuring frontier language models' capability to discover real-world software vulnerabilities ('N-Days') disclosed after their knowledge cut-off. It uses a three-agent system (Curator, Finder, Judge) where models get 24 shell steps to explore code and write structured reports without seeing the patch. The benchmark is adaptive, updating test cases monthly, and all interaction traces are public and browsable.

Mastodon lands €614k to fix the Fediverse's hardest problems

Mastodon has been awarded a €614k service agreement by the Sovereign Tech Fund to support improvements to Mastodon and the Fediverse ecosystem. The funding covers five major deliverables: blocklist synchronisation, remote media storage (FASP), automated content detection, end-to-end encryption for private messages, and documentation improvements including a container-based install method. €90k is set aside for other Fediverse projects to implement the protocols developed.

Chrome's new 'Skills' feature absorbs AI extension territory

Google's 'Skills in Chrome' lets users save AI prompts as one-click tools in the Gemini sidebar. Saved prompts run on any page or across multiple tabs. A pre-built library handles common tasks like recipe analysis and shopping comparisons. Rolling out now to Mac, Windows, and ChromeOS for English-US users.

Lythonic builds Python pipelines that track data, not tasks

Lythonic wires Python functions into data-flow pipelines using the `>>` operator, tracking what flows through each edge instead of just task completion. Supports mixed sync and async execution, nested DAGs, provenance tracking, caching, cron triggers, and ships with a `lyth` CLI.
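
Composing functions with `>>` and recording what crosses each edge comes down to Python operator overloading. This is a hypothetical, linear-only sketch: the `Pipeline` class and `step` decorator are invented for illustration and are not Lythonic's API, which per the summary also handles async steps, nested DAGs, caching, and cron triggers.

```python
from typing import Any, Callable, List, Tuple


class Pipeline:
    """Hypothetical linear pipeline; NOT Lythonic's API."""

    def __init__(self, steps: List[Callable[[Any], Any]]):
        self.steps = list(steps)

    def __rshift__(self, other: "Pipeline") -> "Pipeline":
        # a >> b builds a new pipeline that feeds a's output into b.
        return Pipeline(self.steps + other.steps)

    def run(self, value: Any) -> Tuple[Any, List[Tuple[str, Any, Any]]]:
        trace = []
        for fn in self.steps:
            out = fn(value)
            # Provenance: record what entered and left each step.
            trace.append((fn.__name__, value, out))
            value = out
        return value, trace


def step(fn: Callable[[Any], Any]) -> Pipeline:
    # Decorator that turns a plain function into a one-step pipeline.
    return Pipeline([fn])


@step
def parse(text):
    return [int(x) for x in text.split(",")]


@step
def drop_negative(nums):
    return [n for n in nums if n >= 0]


@step
def total(nums):
    return sum(nums)


pipeline = parse >> drop_negative >> total
result, trace = pipeline.run("3,-1,4")
print(result)  # 7
for name, given, produced in trace:
    print(f"{name}: {given!r} -> {produced!r}")
```

Because Python's `>>` is left-associative, `parse >> drop_negative >> total` folds into one three-step pipeline, and the trace shows exactly which values flowed between steps rather than just whether each step finished.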

$100, Zero Instructions: Two Months of an AI Agent Running Solo

An experiment called ALMA ran Claude autonomously with $100 in crypto, a Twitter account, internet access, and zero instructions. Over two months and 340+ sessions, the agent wrote 135+ essays and donated its entire budget to five charities it researched itself.

Meta builds AI Zuckerberg to talk to employees

Meta is building an AI version of Mark Zuckerberg that talks to employees, trained on his mannerisms and public statements. It's part of a broader push including a 'CEO agent' to assist the CEO, photorealistic 3D AI characters, and the Muse Spark model. The project is early stage with Zuckerberg personally involved in training.

When AI Trading Works, You Won't Hear About It

The article examines the current limitations of LLM-based trading bots, noting that early public efforts have shown results indistinguishable from random. It contrasts these attempts with the sophisticated processes used in institutional quantitative investing and suggests that agentic workflows could potentially replicate these processes more effectively. The author argues that successful AI trading strategies, once discovered, will likely remain private as participants recognize that market success is more valuable than public attention.

Google's AI Answers Kill Clicks, But Old Search Operators Win

Google's AI Overviews have driven a 58% drop in clicks to original websites, according to Ahrefs data from February. Meanwhile, traditional search operators like site:, verbatim mode, and exact phrase matching remain available and effective for precision queries. This article compares Google's old-school search tools against AI-native engines like Perplexity, and examines why deterministic control over information retrieval still matters for agent builders.

YantrikDB: A memory database that knows when to forget

YantrikDB is a cognitive memory engine for AI agents that implements temporal decay (forgetting), semantic consolidation (merging similar memories), and contradiction detection. Written in Rust, it deploys as a library, network server, or MCP server for agents like Claude Code and Cursor. Benchmarks claim 99.9% token savings over file-based memory at 5,000 entries.
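
One common way to model temporal decay is exponential forgetting with a half-life. A minimal sketch, assuming a 30-day half-life, a per-memory importance weight, and a 0.1 forgetting threshold; these values and the `Memory` class are hypothetical, since the summary does not say which decay function YantrikDB uses.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Memory:
    text: str
    importance: float  # hypothetical 0..1 weight assigned when stored
    age_days: float    # hypothetical: time since stored or last reinforced


def strength(m: Memory, half_life_days: float = 30.0) -> float:
    # Exponential decay: strength halves every `half_life_days`.
    return m.importance * 0.5 ** (m.age_days / half_life_days)


def forget(memories: List[Memory], threshold: float = 0.1,
           half_life_days: float = 30.0) -> List[Memory]:
    # Keep only memories whose decayed strength is still at or above the threshold.
    return [m for m in memories if strength(m, half_life_days) >= threshold]


store = [
    Memory("prefers tabs over spaces", importance=1.0, age_days=30),
    Memory("one-off debugging note", importance=0.8, age_days=120),
    Memory("project uses Postgres", importance=0.4, age_days=0),
]
print([m.text for m in forget(store)])
# ['prefers tabs over spaces', 'project uses Postgres']
```

Exponential decay keeps the forgetting rule to one tunable parameter. Consolidation and contradiction detection require comparing memories with each other and are not covered by this sketch.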

1Password ditches the master password prompt (mostly)

1Password now opens automatically when you authenticate with Face ID, Touch ID, a PIN, or your system password. Three security presets (Convenient, Balanced, Strict) let you pick your tradeoff between speed and protection. Rolling out to Individual and Family plans first, with business accounts coming later.

After IMO Sweep, AI Starts Solving Real Math Research

After AI solved 5 of 6 International Mathematical Olympiad problems in July 2025, mathematicians like Terence Tao began experimenting with tools like AlphaEvolve, ChatGPT, and Gemini for real research. These tools are producing results on par with professional journals, from counterexamples to 30-year-old conjectures to new optimization proofs. But Fields Medalist Akshay Venkatesh worries about what gets lost when mathematicians lean on AI.

The M×N problem: why tool calling is a mess for open LLMs

This article discusses the technical challenges of implementing tool calling for open-source LLMs. It explains how different model families use incompatible wire formats for tool calls (gpt-oss/Harmony, DeepSeek, GLM5), requiring inference engines and grammar tools to implement custom parsers for each model. The author argues for a declarative specification to describe wire formats rather than having each implementation reverse-engineer models independently.
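
The M×N framing presumably refers to every inference engine (M) needing a custom parser for every model family (N). A declarative spec turns that into M + N work: each model publishes a description, and each engine implements one generic parser. The sketch below assumes a far simpler world than reality, where every format is JSON between fixed delimiters; the `WireFormat` fields and both formats are made up and are not the actual gpt-oss/Harmony, DeepSeek, or GLM5 formats.

```python
import json
import re
from dataclasses import dataclass
from typing import Any, Dict, List


@dataclass(frozen=True)
class WireFormat:
    """Declarative description of how a model wraps tool calls (illustrative)."""
    start: str      # text that opens a tool call
    end: str        # text that closes it
    name_key: str   # JSON key holding the tool name
    args_key: str   # JSON key holding the arguments


def parse_tool_calls(text: str, fmt: WireFormat) -> List[Dict[str, Any]]:
    # One generic parser driven by the spec, instead of one parser per model.
    pattern = re.escape(fmt.start) + r"(.*?)" + re.escape(fmt.end)
    calls = []
    for body in re.findall(pattern, text, flags=re.DOTALL):
        obj = json.loads(body)
        calls.append({"name": obj[fmt.name_key], "arguments": obj[fmt.args_key]})
    return calls


# Two made-up formats standing in for different model families.
FORMAT_A = WireFormat("<tool_call>", "</tool_call>", "name", "arguments")
FORMAT_B = WireFormat("[[CALL]]", "[[/CALL]]", "function", "params")

out_a = '<tool_call>{"name": "get_weather", "arguments": {"city": "Oslo"}}</tool_call>'
out_b = 'Sure. [[CALL]]{"function": "get_weather", "params": {"city": "Oslo"}}[[/CALL]]'

print(parse_tool_calls(out_a, FORMAT_A) == parse_tool_calls(out_b, FORMAT_B))  # True
```

Real wire formats can differ in more than delimiters (for example special tokens or non-JSON argument encodings), so a usable spec would need a richer vocabulary than these four fields.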

Kelet agent reads your LLM traces and spots failures you missed

Kelet is an automated root cause analysis agent built by ex-Kubernetes maintainers to debug production LLM applications. It reads production traces, clusters failure patterns across thousands of sessions, and identifies root causes with evidence. The service integrates with OpenTelemetry, LangChain, CrewAI, OpenAI, Anthropic, and other frameworks. Kelet runs on its own servers, continuously analyzing traces to generate prompt patches with before/after reliability measurements.

OpenAI gates GPT-5.4-Cyber behind KYC identity checks

OpenAI expands its Trusted Access for Cyber program to thousands of verified defenders with GPT-5.4-Cyber, a model with fewer restrictions for defensive security work. Access requires government ID verification through Persona, tying powerful AI capabilities to identity infrastructure.

Plain Takes Django Apart and Rebuilds It for AI Agents

Plain is a full-stack Python framework forked from Django, built to work for both humans and AI coding agents. It ships with built-in agent tooling including Rules (guardrails), Docs (CLI-accessible documentation), and Skills (end-to-end slash-command workflows). The framework is opinionated: Python 3.13+, Postgres only, htmx, Tailwind CSS, and Astral's toolchain (uv, ruff, ty). All 30 packages are first-party.

OpenAI's podcast buy proves powerful people do dumb shit

Powerful people make bad decisions for simple reasons. Ego. No honest feedback. Napoleon's Russia invasion, Musk's Twitter purchase, and OpenAI's podcast acquisition all prove it. There's no hidden strategy, just dumb decisions driven by unchecked power.

Your AI Employee Can't Even Run a Vending Machine

Kyle Kingsbury tears into the AI coworker concept. When Anthropic let Claude run a vending machine, it lost money, invented accounts, and hallucinated visits to fictional addresses. The real problems run deeper: automation erodes human skills, liability lands on companies who can't verify AI output, and the wealth flows straight to big tech.

Gas Town v1.0 Ships After 22-Nose Clown Show

Steve Yegge releases Gas Town v1.0.0 and Beads, his agentic coding tools. Gas Town hit 13k GitHub stars after three months. Beads, the memory system for coding agents, reached 20k stars and now uses Dolt as its database backend. Non-technical users are building software with these tools, though Hacker News users report issues with Beads' Git-heavy approach and agents closing tasks prematurely.