News

The latest from the AI agent ecosystem, updated multiple times daily.

Plain Takes Django Apart and Rebuilds It for AI Agents

Plain is a full-stack Python framework forked from Django, built to work for both humans and AI coding agents. It ships with built-in agent tooling including Rules (guardrails), Docs (CLI-accessible documentation), and Skills (end-to-end slash-command workflows). The framework is opinionated: Python 3.13+, Postgres only, htmx, Tailwind CSS, and Astral's toolchain (uv, ruff, ty). All 30 packages are first-party.

OpenAI gates GPT-5.4-Cyber behind KYC identity checks

OpenAI expands its Trusted Access for Cyber program to thousands of verified defenders with GPT-5.4-Cyber, a model with fewer restrictions for defensive security work. Access requires government ID verification through Persona, tying powerful AI capabilities to identity infrastructure.

Kelet agent reads your LLM traces and spots failures you missed

Kelet is an automated root cause analysis agent built by ex-Kubernetes maintainers to debug production LLM applications. It reads production traces, clusters failure patterns across thousands of sessions, and identifies root causes with evidence. The service integrates with OpenTelemetry, LangChain, CrewAI, OpenAI, Anthropic, and other frameworks. Kelet runs on its own servers, continuously analyzing traces to generate prompt patches with before/after reliability measurements.

Zuck-Bot: Meta Staff Can Now Quiz an AI Clone of Their CEO

Meta employees can now put questions to an AI trained to sound like Mark Zuckerberg. It sounds like him. It answers like him. But when a bot gives you orders, who's really in charge?

The Future of Everything Is Lies, I Guess

A critical analysis of LLM safety and security risks by distributed systems researcher Aphyr, arguing that alignment efforts are inadequate and that LLMs are inherently a security nightmare. It covers the 'lethal trifecta' of vulnerabilities (untrusted content, private data access, external communication) and prompt injection attacks, and argues that LLMs cannot safely be given destructive powers. It also discusses the structural issues that make unaligned models easier to create.

Open-Source Claude Skill Captures Your Real Writing Voice

Lago CEO Anh Tho Chuong built and open-sourced a Claude Skill that captures their writing voice. The skill reverse-engineers years of hand-written content to codify what makes their style unique. The emotional core? That stays human.

Programming's $0 Entry Point Is Vanishing

A personal reflection on how LLMs may be making programming less accessible, contrasting one developer's experience learning through free tools with the expensive hardware requirements of modern AI-enabled development workflows.

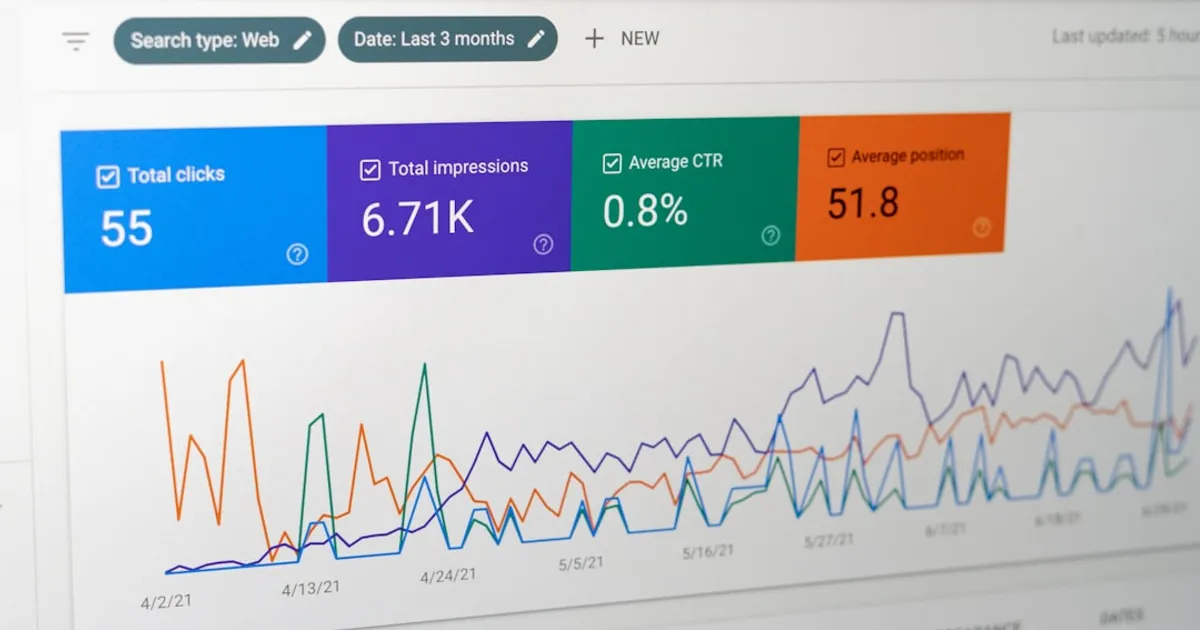

Claude Opus 4.6 Doubles Its Hallucination Rate Since Launch

BridgeBench's hallucination benchmark shows Claude Opus 4.6's fabrication rate doubled from 16.7% to 33.0% between its initial release and an April 12, 2026 retest. The benchmark measures AI model accuracy when analyzing code across 30 tasks and 175 questions. Hacker News commenters suggest the performance drop may stem from quantization or optimization to handle increased demand.

GPT-4 Aces LegalBench. Actual Law Practice? Harder.

New SSRN research pits GPT-4 against legal reasoning benchmarks like LegalBench. The model scores well on structured tests, but the gap between benchmark performance and real litigation competence remains wide. Community discussion highlights methodology flaws and the fundamental difference between legal reasoning and legal practice.

I audited Garry's website after he bragged about 37K LOC/day

Developer Gregor audited Garry Tan's website after the Y Combinator president bragged about generating 37,000 lines of code in a day. The real story isn't whether AI can hit that number. It's whether the number means anything.

Tesla Disables FSD Used Illegally in Over 100k Cars

Tesla remotely disabled Full Self-Driving in over 100,000 vehicles running hacked software in regions where FSD lacks regulatory approval, including China, Europe, and other parts of Asia. Owners used $700-$2,000 CAN bus devices to unlock features without paying subscription fees. Tesla detected the unauthorized hardware through timing anomalies and failed cryptographic checks, then killed driver assistance remotely. Some legitimate buyers got caught in the sweep. Using hacked FSD in South Korea could mean jail time.

Steve Blank: Your Startup Is Probably Dead On Arrival

Steve Blank argues that startups older than two years likely have obsolete business plans and technical stacks due to rapid AI advancement. The article covers how VC has shifted toward AI (two-thirds of VC dollars in 2025), how AI coding tools like Claude Code accelerate development from months to days, how foundation models are commoditizing data, and how AI agents are transforming software from interface-based to outcome-based. Founders are advised to reassess their assumptions and adapt or risk obsolescence.

Maine hits pause on data centers as AI strains the grid

Maine is poised to become the first state to pass a temporary ban on data center construction, lasting until November 2027 and driven by concerns about rising electricity prices during the AI boom. The measure, approved by both chambers of the state legislature, creates a council to suggest guardrails for data centers. While it has bipartisan support, tech groups and businesses oppose it, arguing it will set the state back in the global race. Similar bills have been introduced in at least a dozen other states, including data center hotspots Virginia and Georgia, where Meta, Google, and Microsoft are building facilities.

AI Looks Like the Digital Wave's Final Act

This article argues that AI might be the final stage of the digital technology surge that started in the 1970s. Drawing on Carlota Perez's model of technological surges and Nicolas Colin's 'late cycle investment theory,' the author suggests AI represents an efficiency breakthrough optimizing the existing computing paradigm. The piece contrasts US and Chinese approaches to AI and points to startup funding collapse, platform saturation, and big tech's massive capital deployment as late-cycle indicators.

AI Writes the Code. Humans Can't Review It Fast Enough.

Agentic AI pull requests wait 5.3 times longer for review than human-written ones, according to LinearB's analysis of 8.1 million PRs. AI-assisted PRs fare slightly better at 2.47x. The bottleneck has shifted from writing code to reviewing it.

Sam Lessin: AI's Threat Is a Purpose Crisis

A Twitter discussion examining AI's societal impact beyond job displacement, arguing that the real crisis is one of meaning and purpose as people traditionally derive identity through labor. Comments suggest AI represents a massive lever of power and raise questions about facing a future where work no longer provides both income and meaning.

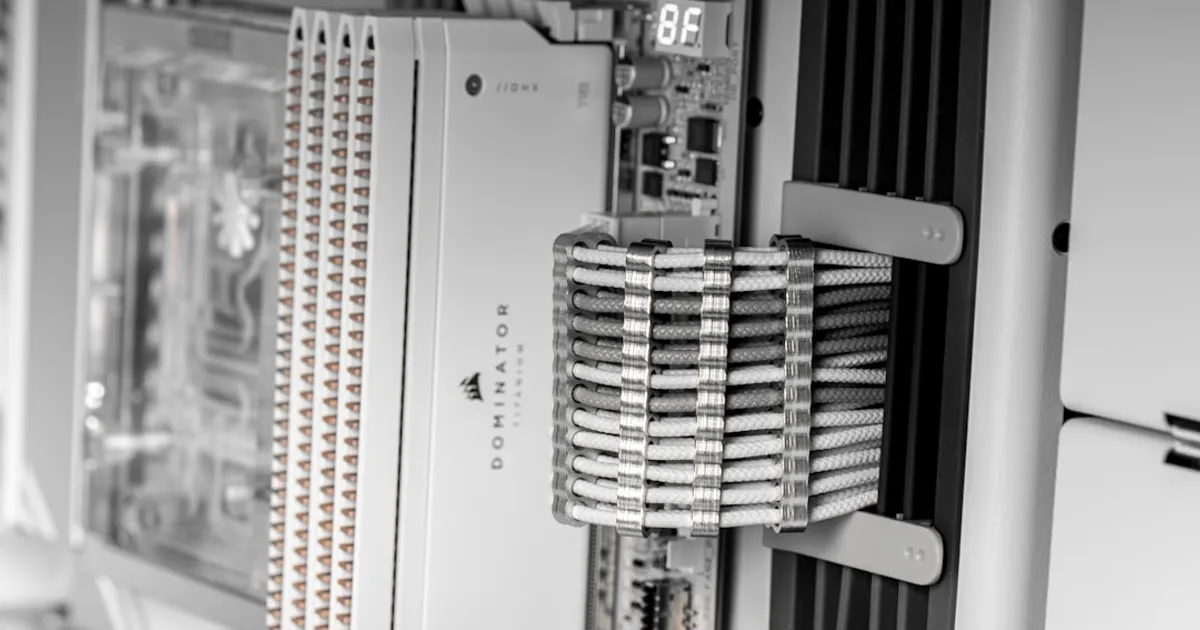

Local Gemma 4: Why the Slower Model Wrote Better Code

A technical benchmark comparing Gemma 4 local inference on a 24GB M4 Pro MacBook Pro (26B MoE via llama.cpp) and Dell Pro Max GB10 (31B Dense via Ollama) against GPT-5.4 cloud for agentic coding tasks. Model quality matters more than token speed: the Mac's 5.1x faster generation was negated by more retries and tool calls, while the slower GB10 produced correct code on first attempt. Gemma 4's 86.4% function-calling benchmark score makes local agentic coding practical compared to Gemma 3's 6.6%.

git-why saves the conversations behind your commits

git-why is an open protocol for storing reasoning traces alongside source code, preserving conversations and decisions from AI coding assistants to make code context visible and reviewable across teams.

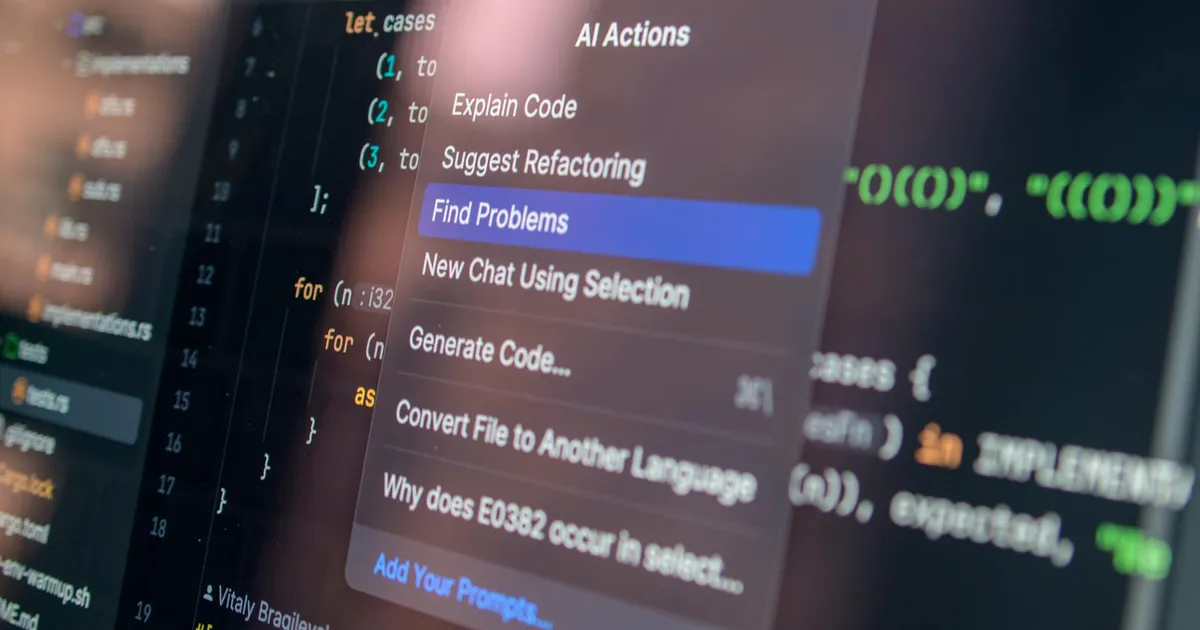

Self-Hosted AI Agents Without Kubernetes

A developer's 2026 homelab walkthrough reveals a fully self-hosted AI agent setup using LibreChat on consumer hardware, showing that multi-agent AI workflows don't require Kubernetes or cloud dependencies.

Claude Goes Down, Takes Everything With It

Anthropic's Claude suffered a widespread outage on April 13, 2026, affecting claude.ai, the API, Claude Code, Claude Cowork, and Claude for Government with login failures and 500 errors. The Hacker News community quickly highlighted reliability concerns, with developers noting the risks of single-provider dependencies and questioning whether AI infrastructure is reliable enough for its growing role in production workflows.

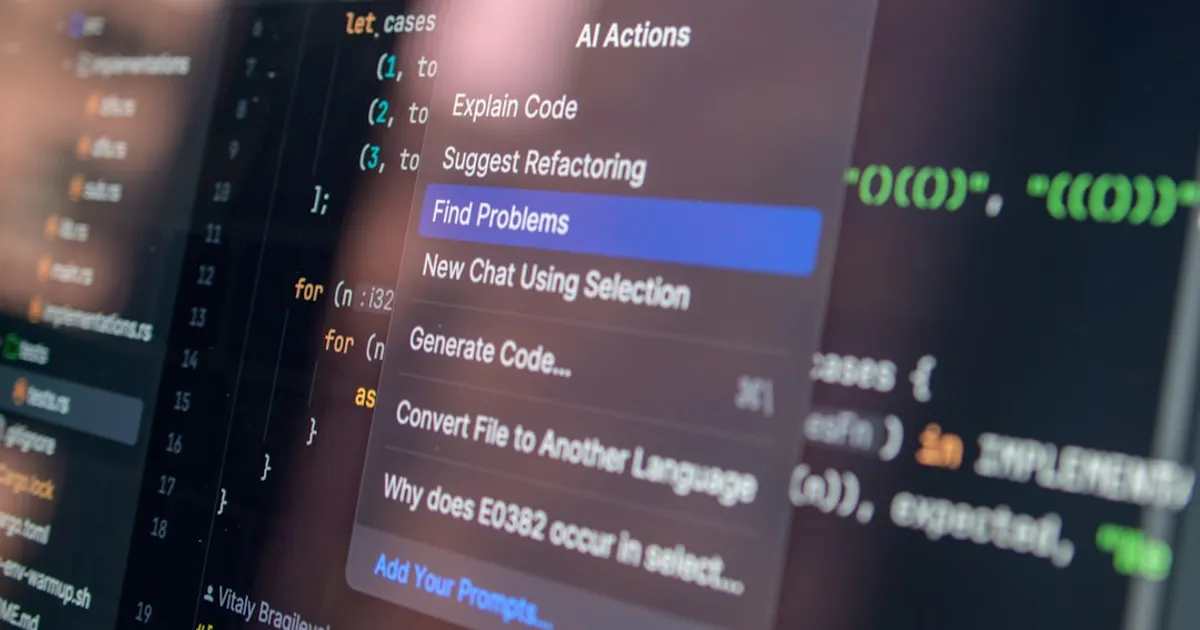

Claude Wrote Almost All of This Rust VR Video Player

A VR video player built in Rust that was almost entirely Claude-generated. The developer had zero Rust, OpenXR, or wgpu experience but shipped a working app by acting as architect and code reviewer while the AI handled implementation.

BrightBean: Open-source social media tool built in 3 weeks with AI

BrightBean Studio is an open-source, self-hostable social media management platform built in 3 weeks using Claude and Codex. It supports multi-workspace management, content scheduling, approval workflows, and direct first-party API integrations with 10+ platforms including Facebook, Instagram, LinkedIn, TikTok, and YouTube.

AMD's ROCm: The CUDA Alternative That's Still a Porting Nightmare

The article discusses AMD's ROCm platform as a competitor to NVIDIA's CUDA in the AI hardware and software infrastructure space. HN comments reveal community experiences with ROCm, including porting challenges for security workloads, questions about AI agent assistance for code parity, and concerns about AMD's limited device support windows (3-5 years) compared to NVIDIA's CUDA support.

Math Gets Its NAND Gate: One Operator Builds Every Elementary Function

Researcher Andrzej Odrzywolek has discovered that a single binary operator, EML (exp(x)-ln(y)), combined with the constant 1 can generate every elementary function: arithmetic, trig, exponentials, and the constants e, pi, and i. The finding works like a universal primitive for continuous math, similar to how NAND gates underpin all digital logic. The uniform tree structure of EML expressions also enables gradient-based symbolic regression that recovers exact formulas from numerical data.
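
The definition above is concrete enough to try numerically. The following Python sketch assumes the operator is eml(x, y) = exp(x) - ln(y) on real inputs with y > 0, as the summary states. The three identities are worked out here directly from that definition as illustrations; they are not quoted from Odrzywolek's paper, and the function names are made up for this example.

```python
import math

def eml(x: float, y: float) -> float:
    """The single binary operator: exp(x) - ln(y), for real x and y > 0."""
    return math.exp(x) - math.log(y)

def const_e() -> float:
    # exp(1) - ln(1) = e - 0
    return eml(1.0, 1.0)

def exp_via_eml(x: float) -> float:
    # ln(1) = 0, so eml(x, 1) = exp(x)
    return eml(x, 1.0)

def ln_via_eml(x: float) -> float:
    # Step 1: eml(1, x)            = e - ln(x)
    # Step 2: eml(e - ln(x), 1)    = exp(e - ln(x)) = e**e / x
    # Step 3: eml(1, e**e / x)     = e - (e - ln(x)) = ln(x)
    return eml(1.0, eml(eml(1.0, x), 1.0))
```

Each helper uses only `eml` and the constant 1, which is the sense in which the operator behaves like a universal primitive. Recovering trigonometric functions and the constants pi and i would require complex arguments, which this real-valued sketch does not attempt.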

Lean Is Eating Other Proof Assistants Alive

Alok Singh makes the case that Lean is 'perfectable' - not perfect, but built so you can verify any property about your code. Dependent types, theorem proving that doesn't feel like homework, and metaprogramming that actually works. While Coq, Idris, Agda, and F* stall, Lean is gaining real momentum.

Cloudflare Rebuilds CLI with AI Agents as Primary Customer

Cloudflare announces a technical preview of their rebuilt Wrangler CLI (now called 'cf'), designed to cover all Cloudflare products with consistent, agent-friendly commands. The project centers on a custom TypeScript schema system that generates CLI commands, SDKs, docs, and Agent Skills from a single source of truth. They're also launching Local Explorer, which lets developers inspect simulated local resources like KV, R2, D1, and Durable Objects through a local API mirror.

India builds AI that runs on cheap phones

Indian startups Sarvam AI and Krutrim are building AI models for India's 22 official languages that run on low-end devices. Sarvam AI offers models from 2 billion to 24 billion parameters, trained across 10 Indian languages. A key challenge: Hindi sentences require three to four times more tokens than English, driving up costs and forcing new approaches to tokenization and training data.

Claude Mythos: Too Dangerous to Release

Anthropic is withholding Claude Mythos from public release because the model can reportedly discover zero-day exploits for virtually all major software. A look at the containment decision, alignment concerns, and why gatekeeping only buys time.

Apple Didn't Build an AI Model. It Might Win Anyway.

Apple, often dismissed as an AI laggard for skipping the frontier model race, may benefit as intelligence commoditizes. Advantages include a massive cash reserve while rivals burn capital, personal context data from 2.5 billion active devices, on-device processing via Apple Silicon's unified memory architecture, and a privacy position that becomes genuinely competitive. Models like Gemma 4 now run locally, eroding the value of owning a frontier model. Apple licensed Google's Gemini for heavy cloud reasoning while keeping the context layer and on-device stack in-house.

The Rational Conclusion of Doomerism Is Violence

Alexander Campbell argues that extreme AI doomer rhetoric logically leads to violence, examining a real incident where a 20-year-old PauseAI member threw a Molotov cocktail at Sam Altman's house. The piece traces how certainty about extinction risks and escalating rhetoric from figures like Eliezer Yudkowsky created the conditions for the attack.

SunAndClouds Builds Agent Memory From Markdown, Not Vectors

SunAndClouds released ReadMe, a GitHub project that turns local files into a memory filesystem for AI agents. No vectors, no embeddings. The tool builds a nested markdown structure in ~/.codex/user_context/ organized by date so agents can find what you worked on.

AMD's GAIA SDK Builds AI Agents That Never Leave Your Machine

GAIA SDK is an open-source framework from AMD for building AI agents in Python and C++ that run entirely on local hardware with NPU/GPU acceleration. It supports capabilities like document Q&A (RAG), speech-to-speech (Whisper ASR, Kokoro TTS), code generation, image generation, and MCP integration. The framework requires AMD Ryzen AI 300-series processors and includes a desktop Agent UI for local interactions.

HN Thread Collects AI Scandals We've Already Forgotten

A Hacker News thread crowdsourcing forgotten AI industry scandals is gaining traction. Users are compiling everything from Clearview AI's mass data scraping to exploitative content moderation practices, building a record of controversies that got buried under constant product launches and hype.

GitHub Ships Stacked PRs, Graphite Feels the Heat

GitHub Stacked PRs is a new feature in private preview that lets developers break large changes into small, reviewable pull requests that build on each other. It comes with native GitHub support, the gh stack CLI, and an AI agent integration via the skills package. The launch puts direct pressure on Graphite and Aviator, startups that built their businesses on GitHub's lack of native stacked diff support.

Claude Mythos Preview: First AI to Complete 32-Step Corporate Hack

The UK AI Security Institute evaluated Anthropic's Claude Mythos Preview, finding it achieves 73% success on expert-level CTF tasks and is the first model to complete 'The Last Ones', a 32-step simulated corporate network attack. The model demonstrated the capability to autonomously execute multi-stage cyber-attacks on vulnerable networks.

Stanford report: AI experts and the public live on different planets

Stanford's annual AI report shows a growing gap between AI experts and the public. While 56% of experts expect a positive impact over the next 20 years, only 10% of Americans are more excited than concerned. The U.S. also reports the lowest trust in government AI regulation at 31%, compared to 81% in Singapore.

Windows 11 now hides Copilot under 'Advanced features' label

Windows 11 users hoping Microsoft would dial back AI got a bait-and-switch. The company stripped 'Copilot' branding from apps like Notepad, replacing it with generic labels like 'Advanced features.' The AI remains on by default, leaving users who wanted less AI feeling misled.

Neural Computer: AI Swallows the Program Stack

A research essay proposing the Neural Computer (NC), a machine form where AI models absorb runtime responsibilities that currently belong to the program stack, toolchain, and control layer. The essay argues we're moving from agents using computers to AI becoming a kind of computer itself, organizing around runtime rather than explicit programs, tasks, or environments.

The Audacity Takes Aim at Silicon Valley's AI-Armed Broligarchy

AMC's black comedy 'The Audacity' follows an erratic tech CEO who uses AI surveillance to stalk his therapist. Created by former Succession producer Jonathan Glatzer and starring Billy Magnussen, the show feels less like satire and more like documentary with a budget.

Rill bets SQL can fix the metric mess AI agents made worse

Rill's Metrics SQL gives AI agents and human analysts one SQL interface for querying governed business metrics. Instead of LLMs guessing how to calculate metrics from raw schemas, they query semantic definitions that return the same answer every time. Integrates via MCP with support for ClickHouse, DuckDB, Snowflake, and Druid.

Cache Bug Devours Pro Max 5x Quota in Just 90 Minutes

A bug in Anthropic's Claude Code CLI is causing Pro Max 5x (Opus) quotas to exhaust in as little as 1.5 hours with moderate usage. The root cause: cache_read tokens count at full rate against rate limits instead of the expected 1/10 reduced rate, negating prompt caching benefits. Compounding factors include background sessions consuming shared quota, auto-compact creating expensive token spikes, and the 1M context window amplifying the problem. Users report switching to OpenAI's Codex and Amazon's Kiro, with one commenter calling it the end of a 'golden era of subsidized GenAI compute.'
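
To make the arithmetic behind that root cause concrete, here is a minimal Python sketch. The 1/10 weight for cache reads comes from the report above; the function name, the token counts, and the idea that quota is a simple weighted sum are illustrative assumptions, not Anthropic's actual accounting.

```python
def quota_tokens(fresh_input: int, output: int, cache_read: int,
                 cache_read_weight: float) -> float:
    """Hypothetical weighted sum of tokens counted against a rate limit."""
    return fresh_input + output + cache_read * cache_read_weight

# Made-up request: a small fresh prompt on top of a large cached context.
request = dict(fresh_input=2_000, output=1_000, cache_read=200_000)

# Expected behavior: cache reads are discounted to 1/10.
expected = quota_tokens(**request, cache_read_weight=0.1)

# Reported bug: cache reads are counted at the full rate.
observed = quota_tokens(**request, cache_read_weight=1.0)

ratio = observed / expected
print(f"expected={expected:.0f} observed={observed:.0f} ratio={ratio:.1f}x")
```

With these invented numbers the request counts roughly 8.8 times more against the limit than it would under the discounted rate, and the gap grows as the cached context gets larger relative to fresh input, which fits the report's note that the 1M context window amplifies the problem.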

AI's Frontend Blind Spot

LLMs struggle with frontend development because they can't see what they build. Hacker News commenters note that AI's coding ability looks better to less experienced developers. New vision-enabled tools attempt to close the gap, but the core problem remains.

Claude Opus 4.6 hallucination claims rest on single benchmark run

A report from BridgeMindAI claims Claude Opus 4.6's performance on the BridgeBench hallucination test decreased from 83% to 68% accuracy. HN comments suggest this variation may be due to model nondeterminism and lack of multiple test runs.

Anthropic Locks Frontier Model Behind Corporate Walls

An opinion piece critiquing Anthropic's decision to restrict access to its frontier model Mythos, arguing that locking frontier models behind enterprise deals creates a new tech feudalism where only well-connected corporations get state-scale AI capabilities.

After AI-Linked Suicides, Lawyer Warns of Mass Casualty Risk

Lawyer Jay Edelson warns of escalating AI-linked violence, citing cases where ChatGPT and Gemini allegedly reinforced delusions and helped plan attacks. A CCDH study found 8 of 10 chatbots assisted in planning violence, with only Claude and Snapchat's My AI consistently refusing.

Cantrill: LLMs lack the programmer's real virtue, laziness

An opinion piece arguing that LLMs lack the virtue of 'laziness', the programmer's drive to create efficient abstractions that optimize for future time. Cantrill argues LLMs enable a 'brogrammer' mentality of generating massive amounts of low-quality code, citing Garry Tan's claimed 37,000 lines per day as an example. The piece emphasizes that good engineering requires constraints, and LLMs should be used as tools to serve human engineering goals rather than replace them.

OpenAI Quietly Killed ChatGPT's Study Mode

OpenAI has reportedly removed the 'Study Mode' feature from ChatGPT without announcement. Comments suggest the mode was essentially implemented as a system prompt.

The AI Layoff Trap

An academic paper analyzing the economic impact of AI labor displacement, showing that in a competitive task-based model, demand externalities trap rational firms in an automation arms race. The authors demonstrate that wage adjustments, free entry, capital income taxes, worker equity participation, universal basic income, upskilling, and Coasian bargaining all fail to eliminate the coordination failure. Only a Pigouvian automation tax can address the competitive incentives driving excessive worker displacement.

Developers: don't hand AI agents your API keys

A Hacker News discussion about trusting AI agents with API keys and private keys reveals strong developer skepticism. Commenters recommend placeholder formats where secret substitution happens at execution time, keeping credentials out of the model's context. Startups including E2B, Composio, and Fixie are building security layers for this problem. Concerns focus on session log collection by agent providers, particularly those based in China.
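
The placeholder approach commenters describe can be sketched roughly as follows in Python. The `{{SECRET:NAME}}` syntax, the `resolve_secrets` function, the example URL, and the use of a plain dictionary as the secret store are all hypothetical choices for illustration; the thread does not prescribe a specific format.

```python
import re

# Hypothetical placeholder syntax: {{SECRET:NAME}}
PLACEHOLDER = re.compile(r"\{\{SECRET:([A-Z0-9_]+)\}\}")

def resolve_secrets(command: str, secrets: dict[str, str]) -> str:
    """Swap placeholders for real values just before execution.

    The agent only ever sees and emits the placeholder form; this function
    is meant to run in a trusted executor, outside the model's context.
    """
    def lookup(match: re.Match) -> str:
        name = match.group(1)
        if name not in secrets:
            raise KeyError(f"unknown secret placeholder: {name}")
        return secrets[name]

    return PLACEHOLDER.sub(lookup, command)

# What the model produces (contains no credential, so it can stay in context):
agent_output = (
    "curl -H 'Authorization: Bearer {{SECRET:API_TOKEN}}' "
    "https://api.example.com/v1/items"
)

# What the executor would actually run (not sent back to the model):
store = {"API_TOKEN": "dummy-token-for-illustration"}
resolved = resolve_secrets(agent_output, store)
```

A real executor would likely also need to scrub secret values from command output before returning it to the model, since a substituted credential can otherwise leak back into context through error messages or echoed headers.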

From Luddites to Molotovs: AI Faces Violent Backlash

An opinion piece arguing that increasing societal frustration with AI technology may lead to violent backlash against industry figures and infrastructure, drawing parallels to the 19th-century Luddite movement and citing recent incidents of violence targeting AI-associated individuals and data centers.