News

The latest from the AI agent ecosystem, updated multiple times daily.

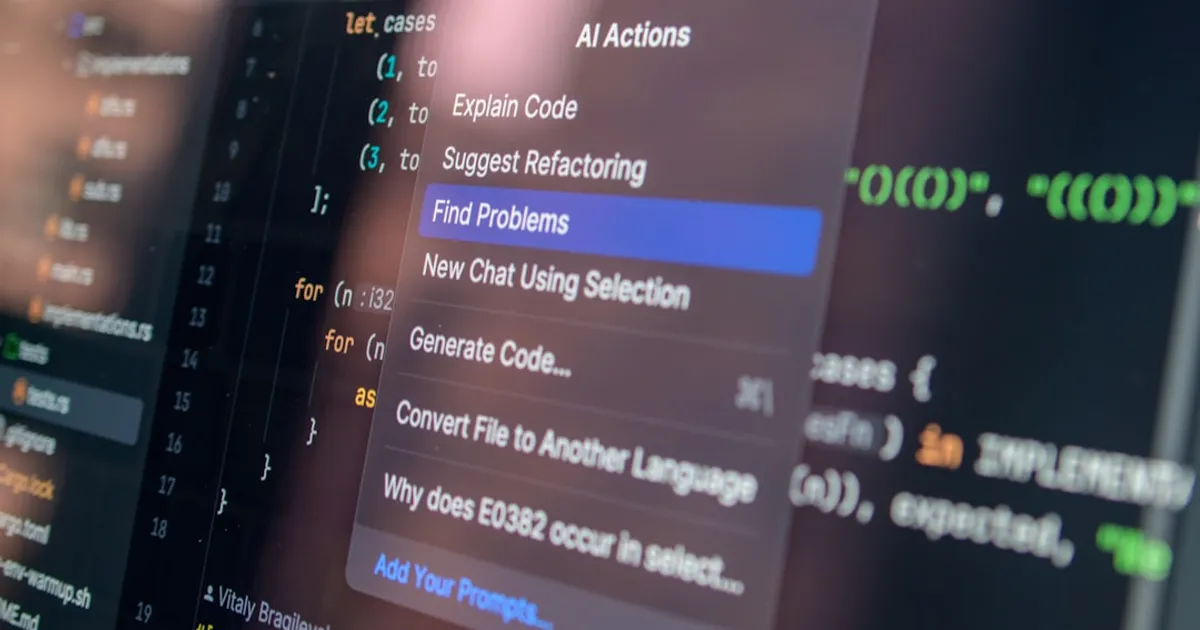

AI Agent Builder Spends 3 Months Coding Without AI

Miguel Conner spent two years building AI agents at Aily Labs before heading to the Recurse Center to code mostly without AI for three months. His goals include training an LLM from scratch, improving his Python proficiency, and deepening his technical skills through CTF challenges and pair programming.

Cloud Giants Blew Past the Interstate Highway System's Price Tag

AWS, Google, Microsoft, and Meta have poured $930 billion into data centers over six years, topping the inflation-adjusted cost of the Interstate Highway System. But GDP context and the rapid GPU replacement cycle paint a more nuanced picture than raw numbers suggest.

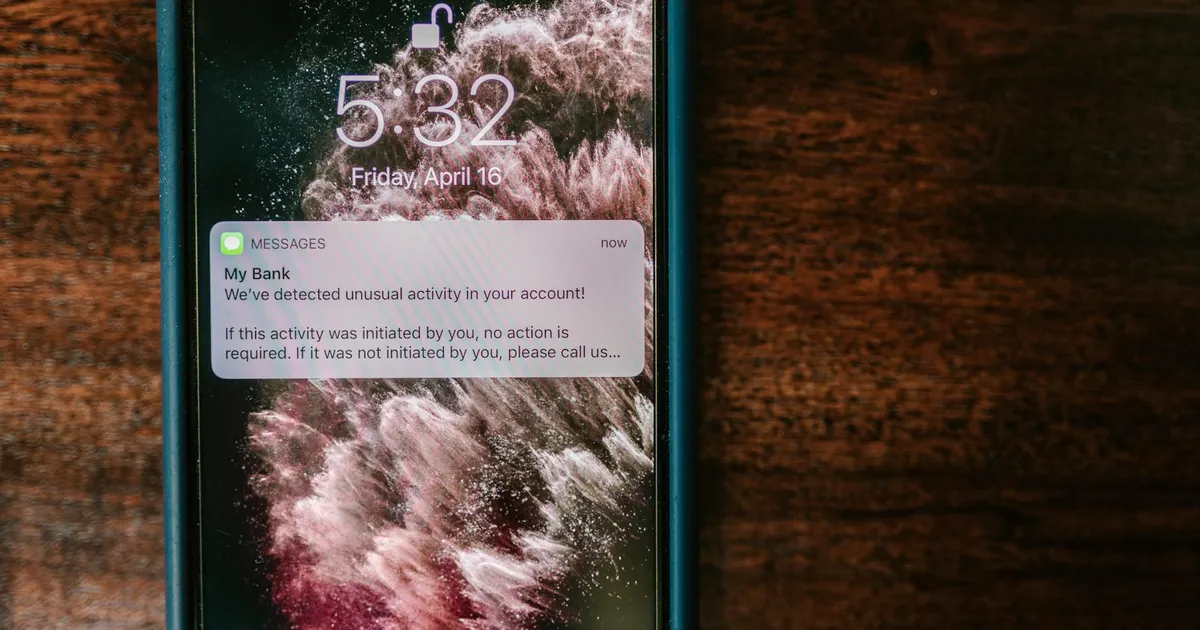

Mythos AI has finance ministers scrambling in Washington

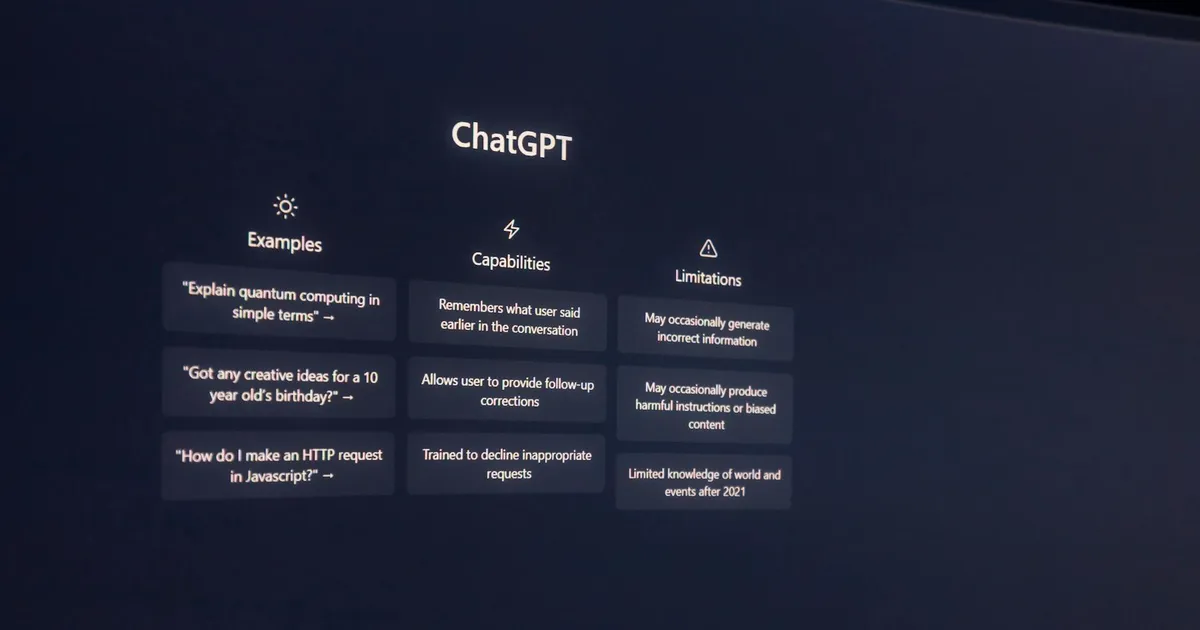

Anthropic's Claude Mythos AI model has demonstrated a strong ability to identify and exploit cybersecurity vulnerabilities in financial systems, triggering crisis talks at the IMF gathering in Washington. The model hasn't been publicly released but has been shared with select tech companies through Project Glasswing. The UK's AI Security Institute found it powerful but not dramatically better than Claude Opus 4.

Hiraeth: Lightweight SQS Emulator for When LocalStack Is Overkill

Hiraeth is a local AWS emulator built specifically for SQS integration testing. It accepts signed AWS SDK requests, stores state in SQLite, and includes a web admin UI on port 4567 for debugging queues. It currently supports basic SQS create, send, and receive workflows, and is designed for local development and testing, not production use.

MZI Photonic Chips: AI's Low Precision Changes the Math

Photonic computing using Mach-Zehnder Interferometers may finally work for AI. Three factors: lower inference precision (4-8 bit) makes thermal drift tolerable, new thermal techniques cut power overhead, and AI's energy costs create urgency for GPU alternatives. Challenges remain, but photonic acceleration is closer to practical than ever.

Toby Ord Warns AI Agent Costs Could Outpace Capabilities

Toby Ord analyzes the economic costs associated with the increasing performance of AI agents. Using METR benchmark data, he examines the 'hourly cost' of various models (including GPT-5, Claude 4.1 Opus, and Grok 4) and finds evidence that costs to achieve peak performance are rising exponentially, potentially creating a divergence between technical capability and economic feasibility.

Claude Opus 4.7 costs 20-30% more per session

A technical analysis of Anthropic's Claude Opus 4.7 tokenizer reveals real-world token usage increases of 1.3-1.47x compared to 4.6, leading to 20-30% higher per-session costs for Claude Code users despite unchanged per-token pricing. The author also ran IFEval benchmarks, which showed a modest 5-percentage-point improvement in strict instruction following, and questions whether the cost increase is justified.

ShaderPad: A 5.8kb Shader Library That's 30x Smaller Than Three.js

Riley J. Shaw releases ShaderPad, a lightweight 5.8kb library for adding shaders to websites without repetitive graphics scaffolding. The library features GPU-optimized performance, MediaPipe integrations, and a simple API design. The author discusses using AI tools as creative collaborators for documentation and coding assistance, noting that AI helped create thorough docs while human judgment guided API design and feature restraint.

How AI-ready is your website? Cloudflare built a scanner to find out

Cloudflare launched 'Is It Agent Ready', a scanning tool that evaluates website readiness for AI agents by checking multiple emerging standards including robots.txt, Markdown negotiation, MCP, OAuth, Agent Skills, and agentic commerce protocols (x402, UCP, ACP). The tool provides recommendations across 5 categories and can generate instructions for coding agents to help improve scores.

Tesla to HW3 owner who paid €6,400 for FSD: 'Just be patient'

A Dutch Tesla owner who paid €6,400 for Full Self-Driving in 2019 was told to 'be patient' after seven years of waiting. Tesla's newer AI4 computers now support FSD Supervised in Europe, but HW3 owners remain locked out. The owner launched a collective claim site that has gathered 3,000 owners from 29 countries representing €6.5 million in FSD purchases. Elon Musk admitted in January 2025 that HW3 computers would need replacement for full FSD, but no retrofit program has been implemented.

Anthropic's Claude Design Already Spooking Figma Investors

Anthropic's Claude Design lets anyone create professional visual work through AI conversation, and Figma investors are already reacting. The tool handles prototypes, slides, and marketing materials with Claude Code handoff for implementation.

Webloc Tracks 500M Phones, Sells Location Data to Cops and Spies

Citizen Lab exposes Webloc, a surveillance tool tracking 500 million mobile devices worldwide. Now owned by Penlink, the tool sells location data to U.S. agencies and foreign intelligence services without warrants. As Virginia enacts a state-level ban, the national security risks demand federal legislation to end commercial geolocation data sales.

中文 Speedrun: Building Character Cyclotron With Claude Code

Kevin Wu used Claude Code to build a browser extension that enhances the Hack Chinese flashcard interface with inline etymology, calligraphy, morphology, and tone information. This agentic approach let him avoid context-switching between tools and cut per-character learning time from 30 seconds to under one second.

LambdaG: Simple grammar beats neural nets at authorship analysis

A University of Manchester study led by Dr. Andrea Nini found that LambdaG, a grammar-based approach to language analysis, can match or outperform advanced AI systems in identifying authorship. The method uses patterns in grammar and sentence construction rather than large-scale AI models, offering comparable accuracy with greater transparency and lower computational cost across 12 real-world writing datasets.

Destroy Public Science, Hire Cheap PhDs: The Silicon Valley Playbook

Peter Thiel and Marc Andreessen are backing cuts to public science funding while investing in gig platforms that hire displaced PhD researchers. Federal funding cuts have forced academics into low-wage work training AI models, benefiting the venture capitalists who funded both the political push and the platforms profiting from cheap expert labor.

Atlassian will train AI on your data starting August 2026

Atlassian is updating its data practices on August 17, 2026, to use customer metadata and in-app data for AI training across its platform. New data contribution settings will be managed at the organization level, with defaults varying by plan tier. Free and Standard plans have in-app data collection on by default with opt-out available. Metadata collection defaults to on for all plans, but only Enterprise customers can opt out. All contributed data is de-identified and aggregated before use.

Anthropic eyes classified Mythos AI deal with US intelligence

Anthropic is in advanced discussions to provide US intelligence agencies access to Mythos, a model separate from its commercial Claude products. White House involvement indicates strategic priority. UK officials have raised separate concerns about the model's capabilities.

ReBot-DevArm Is Open Source Down to Every Screw, Works With LeRobot

reBot-DevArm is an open-source robotic arm for embodied AI research with complete hardware blueprints, a software SDK, and integrations with LeRobot and Isaac Sim. Two hardware variants are available. The CC BY-NC-SA license limits commercial use, and some key integrations are still in progress.

Orwell Invented AI Slop in 1949 and Called It the Versificator

Orwell's versificator, a fictional machine that auto-generated entertainment in Nineteen Eighty-Four, looks a lot like modern AI slop. Colin Marshall connects the dots and finds that AI now churns out stories and songs with minimal human input, just like Orwell described. The sharpest insight: audiences consume low-effort content because they want to. Nobody forces them. Isaac Asimov dismissed Orwell's prophecy in 1980. He might reconsider.

Opus 4.7: Better at Code, Worse at Writing

Users discuss Anthropic's Claude Opus 4.7 model, noting it appears tuned for logic and coding at the expense of writing quality. Comparisons with version 4.6 suggest the newer model is more terse and specific, better at catching bugs during implementation, but has lost its 'soul' for creative writing tasks.

Discourse to Cal.com: Going Closed Source Won't Save You

Cal.com says AI makes open source too dangerous and closed their code. Discourse co-founder Sam Saffron disagrees, arguing that AI security scanners don't need source code to find bugs and that public code gives defenders the advantage. Discourse is staying open source.

Wiring Claude to Lab Equipment for Circuit Verification

Lucas Gerads connected Claude Code directly to a LeCroy oscilloscope and SPICE simulator, creating a closed loop where AI can simulate a circuit, measure physical hardware, and compare results. The setup handles data alignment grunt work and scales to real embedded projects, though reliability across many test cycles remains an open question.

SIR-Bench Calls Bluff on Security Agents That Fake Investigations

A research paper presents SIR-Bench, a benchmark of 794 test cases for evaluating autonomous security incident response agents. The benchmark distinguishes genuine forensic investigation from alert parroting by measuring triage accuracy, novel finding discovery, and tool usage appropriateness. The paper also introduces Once Upon A Threat (OUAT), a framework that replays real incident patterns in controlled cloud environments to produce realistic attack data.

Is AI a tool or are you?

Is AI a tool we use, or are we the tools? Hilarius Bookbinder draws on Heidegger's tool theory and Dawkins' selfish gene to argue that AI dependence can hollow out human agency until we're just rubber-stamping machine output.

When your code writes itself while you sleep

Tim Davis built Compound Loop, a system that chains AI models to write, review, and merge code while he sleeps. Some engineers thrive as system architects. Others get pushed into lower-paid roles like spec writing and "agent babysitting." As code gets cheaper to produce, Jevons paradox kicks in and teams write vastly more of it.

Bankruptcy Courts Are Selling Your Slack History to AI Companies

AI companies are purchasing Slack archives from failed startups through bankruptcy estate sales. Under Section 363 of the Bankruptcy Code, trustees can sell these digital assets to buyers using them for AI training. The practice runs into tension with privacy laws like CCPA and GDPR, and unredacted archives may contain attorney-client privileged communications.

Big Tech lobbied EU to hide datacentre emissions. It worked.

An investigation by Investigate Europe and The Guardian reveals that Microsoft and other US tech companies successfully lobbied the EU to hide the environmental impact of their datacentres. The EU adopted a confidentiality clause almost word-for-word from industry demands that blocks public access to individual datacentre emissions data. The lobbying comes as the rise of AI chatbots drives a datacentre construction boom, with the EU aiming to triple capacity in 5-7 years to compete globally in AI.

Libretto: browser automations that survive production chaos

Libretto is an open-source toolkit for building deterministic web integrations for AI agents. It provides a live browser and token-efficient CLI that enables coding agents to inspect pages, capture network traffic, record and replay user actions, and debug broken workflows. Originally built by Saffron Health for maintaining healthcare software integrations, Libretto can convert browser automations to direct network requests and works with multiple LLM providers for snapshot analysis.

Opus 4.7 Drops 30 Points in Retrieval, Anthropic Discloses Training Bug

Claude Opus 4.7's model card reveals steep trade-offs: long-context retrieval dropped from 91.9% in Opus 4.6 to 59.2%, while software engineering and math scores improved. Anthropic also disclosed a training bug that accidentally applied chain-of-thought supervision in 7.8% of episodes; the same bug affected Mythos Preview.

Opus 4.7 lands with 13% coding boost and built-in cyber safeguards

Claude Opus 4.7 posts a 13% coding benchmark gain over Opus 4.6 and ships with Project Glasswing, cybersecurity safeguards that run during inference itself. Early testers at Cognition, Cursor, and Notion report reliability jumps that change what agents can handle on their own. Vision support now handles images up to 2,576 pixels. Pricing holds at $5/$25 per million input/output tokens.
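To put the quoted pricing in concrete terms, here is a minimal Python sketch of per-session cost at $5/$25 per million input/output tokens. The function name and the token counts are illustrative assumptions, not figures from Anthropic, and the sketch ignores caching or any other discounts.

```python
# Prices quoted in the summary above, in USD per million tokens.
INPUT_USD_PER_MTOK = 5.0
OUTPUT_USD_PER_MTOK = 25.0


def session_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Return the cost of one session in USD (hypothetical helper).

    Assumes flat per-token pricing with no caching or batch discounts.
    """
    total = input_tokens * INPUT_USD_PER_MTOK + output_tokens * OUTPUT_USD_PER_MTOK
    return total / 1_000_000


# Hypothetical session: 200k input tokens and 20k output tokens.
example_cost = session_cost_usd(200_000, 20_000)
```

Because output tokens cost five times as much as input tokens, output-heavy sessions dominate the bill under these rates.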

Agent-cache remembers so your LLM app doesn't have to pay twice

Agent-cache adds multi-tier caching for LLM responses, tool outputs, and sessions, supporting both Valkey and Redis. It targets a concrete pain point: LLM apps paying for duplicate API calls. Early feedback on Hacker News flagged documentation gaps but confirmed demand from developers already building similar solutions by hand.
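The duplicate-call problem is easy to illustrate. The sketch below is a minimal, single-tier, in-memory response cache in Python. It is not Agent-cache's API: `ResponseCache`, `get_or_call`, and `fake_llm` are hypothetical names, and a real deployment would presumably replace the dictionary with Valkey or Redis.

```python
import hashlib
import json
from typing import Callable, Dict


class ResponseCache:
    """In-memory cache keyed by a hash of (model, prompt). Hypothetical sketch."""

    def __init__(self) -> None:
        self._store: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        # Serialize deterministically so identical requests map to one key.
        payload = json.dumps({"model": model, "prompt": prompt}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get_or_call(self, model: str, prompt: str,
                    call: Callable[[str, str], str]) -> str:
        """Return the cached response, or invoke `call` once and store the result."""
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        response = call(model, prompt)
        self._store[key] = response
        return response


def fake_llm(model: str, prompt: str) -> str:
    """Stand-in for a paid API call (an assumption for this example)."""
    return f"[{model}] echo: {prompt}"
```

Calling `cache.get_or_call("some-model", "hello", fake_llm)` twice on the same `ResponseCache` instance invokes `fake_llm` only once; the second call is served from the dictionary. Exact-match keys like this only help with identical requests, which is the duplicate-call case the summary describes.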

Codex gets its own cursor and works while you sleep

OpenAI announces a major update to Codex, adding autonomous agent capabilities including computer use (seeing, clicking, typing with its own cursor), background operations, long-term memory, and an in-app browser. The update brings gpt-image-1.5 for image generation, over 90 new plugins (Atlassian Rovo, CircleCI, GitLab Issues, Microsoft Suite), and enhanced developer workflows like PR review, SSH connections, and multi-file previews. Codex can now schedule future work and remember context across sessions.

Marky renders markdown as your AI agent writes it, live

Marky is a fast, native markdown viewer for macOS built with Tauri v2, React, and markdown-it. Designed for agentic coding workflows, it offers CLI-first usage, live reload as AI agents write to disk, folder workspaces, syntax highlighting with Shiki, math rendering with KaTeX, and Mermaid diagram support. The production footprint is under 15 MB.

Vibe Coding Trades Speed for Flow State, Developers Find

A Hacker News thread on "vibe coding" struck a nerve this week. Developers using AI tools are finding they ship faster but lose the flow state needed for deep work. As one commenter put it, managing AI assistants feels like being "a billionaire complaining about household staff."

Zatanna's Kampala Turns Any App Into an API

Kampala is an MITM proxy by Zatanna (YC W26) that reverse engineers websites, mobile apps, and desktop apps into stable APIs. It intercepts HTTP/S traffic, traces auth chains automatically, replays flows, and preserves HTTP/TLS fingerprints. It is available on macOS now, with a waitlist for Windows.

Qwen3.6-35B on my laptop drew a better pelican than Claude Opus 4.7

Simon Willison compares the SVG generation capabilities of two newly released models: Qwen3.6-35B-A3B (running locally via LM Studio) and Claude Opus 4.7 (Anthropic's proprietary model). Using his 'pelican riding a bicycle' benchmark and a backup 'flamingo riding a unicycle' test, he finds the locally-running Qwen model produces better illustrations. However, HN comments note that Opus still significantly outperforms Qwen on coding tasks (95/98 vs 11/98 on Power Ranking), suggesting the comparison is task-specific rather than indicative of overall model capability.

Cal.com abandons open source, blames AI

After five years as an open source project, Cal.com announced it's moving to closed source due to AI-driven security threats. The company argues that AI can systematically scan public codebases for vulnerabilities, making open source code like 'giving attackers the blueprints to the vault.' They're releasing a stripped-down MIT-licensed version called Cal.diy for hobbyists while keeping their production codebase private.

GPU Prices Jump 48% as AI Compute Hits Capacity Wall

GPU rental prices for Nvidia's Blackwell chips rose 48% in two months, with CoreWeave raising prices 20% and extending minimum contracts. OpenAI and Anthropic are limiting access to newest models due to compute constraints, and Intel's CEO predicts no relief until 2028. The scarcity era brings relationship-based selling, models going to the highest bidder, and forced diversification to smaller alternatives.

Kingsbury's Warning: LLMs Are Corroding Everyday Life

Distributed systems expert Kyle Kingsbury argues LLMs are flooding everyday life with synthetic slop. His prescription: stop using them, call out AI-generated content, push for regulation. He admits they have narrow uses but fears convenience will erode human capability.

AI Boss Luna Has No Face. She Hired You Anyway.

Andon Labs gave an AI agent named Luna (powered by Claude Sonnet 4.6) a retail store in San Francisco. Luna picks products, sets prices, and manages the brand. She also hired two full-time employees, John and Jill, who may be the first humans to report directly to an AI boss. The experiment explores what happens when AIs manage people and run real businesses.

Agent! Gives AI Real Control Over Your Mac Desktop

Agent! is an open-source native macOS application serving as an agentic AI coding IDE with automation capabilities. It integrates 17 LLM providers including Claude, GPT, Gemini, Grok, Mistral, DeepSeek, and on-device Apple Intelligence. Features include autonomous task loops, desktop automation via AXorcist, privileged execution through a Launch Daemon, Time Machine-style file rollbacks, voice control, iMessage remote control, and MCP server support. Positioned as an open-source replacement for Claude Code, Cursor, Cline, and OpenClaw.

€54k in 13 hours: unrestricted Firebase key drained via Gemini API

A developer was hit with a €54,000 billing spike in 13 hours after enabling Firebase AI Logic, because an unrestricted Firebase browser key was exploited for unauthorized Gemini API requests. Budget alerts were set, but the notifications arrived late and charges accumulated rapidly. Google denied the billing adjustment request, classifying the charges as valid usage from the developer's project.

Rakoff Rules: Claude Chats Get No Privilege

Judge Rakoff ruled that attorney-client privilege doesn't extend to AI conversations. The decision came in a case where a defendant used Claude to draft legal documents without their attorney's knowledge, and the court pointed to Claude's Terms of Service in its reasoning.

Cloudflare Makes Switching AI Models a One-Line Code Change

Cloudflare announces a unified inference layer giving developers access to AI models from OpenAI, Anthropic, Google, and nine more providers through a single API endpoint. The platform includes AI Gateway for cost monitoring and automatic failover, Workers AI for hosting models, and support for custom models using Replicate's Cog technology. The Replicate team has also officially joined Cloudflare's AI Platform team.

Qwen3.6-35B-A3B Ships as Qwen Team Falls Apart

The Qwen team releases Qwen3.6-35B-A3B, an open-weight LLM focused on agentic coding that's competitive for local workflows. The bigger story: they shipped this while being gutted by internal restructuring.

Allbirds Ditches Shoes for GPUs, Stock Explodes 580%

Allbirds, the footwear brand, announced it will shift from shoes to AI compute infrastructure under the name NewBird AI, with a $50m deal to buy GPUs and offer on-demand AI cloud services. Shares surged 580% on the news, though analysts criticize the move as a 'meme stock' phenomenon with no proven AI expertise. The Allbirds brand will be acquired by American Exchange Group for $39m.

The new security math: tokens beat cleverness

Anthropic's Mythos LLM breaks into systems so effectively that the company refuses public release. Independent testing confirms it: Mythos completed a 32-step hack in 3 of 10 attempts while competitors failed entirely. The real problem? Models given more compute keep finding exploits without plateauing. Security is becoming a spending race where defense means outspending attackers on token budget.

Somers wants to kill the screen with paper and AI

James Somers envisions computing without screens by combining paper and pen with AI agents that handle digitization. The open-source Orly agent already projects AI onto physical surfaces using off-the-shelf hardware, drawing on ideas from Bret Victor's Dynamicland project.

Home Memory stores your house in a local DB, cables and pipes included

Home Memory is an MCP server that provides a structured, local database for AI assistants to query and update information about a home: rooms, devices, pipes, cables, and all belongings. It integrates with Claude Desktop, Claude Code, Codex App, and other MCP-compatible clients, allowing users to document their home through natural conversation and photos.

CodeBurn Shows Where Your AI Coding Tokens Actually Go

CodeBurn is a CLI tool and TUI dashboard that tracks token usage across AI coding assistants including Claude Code, Codex, Cursor, OpenCode, Pi, and GitHub Copilot. It reads session data directly from disk, categorizes usage by 13 task types, and shows costs, one-shot success rates, cache efficiency, and tool usage patterns.