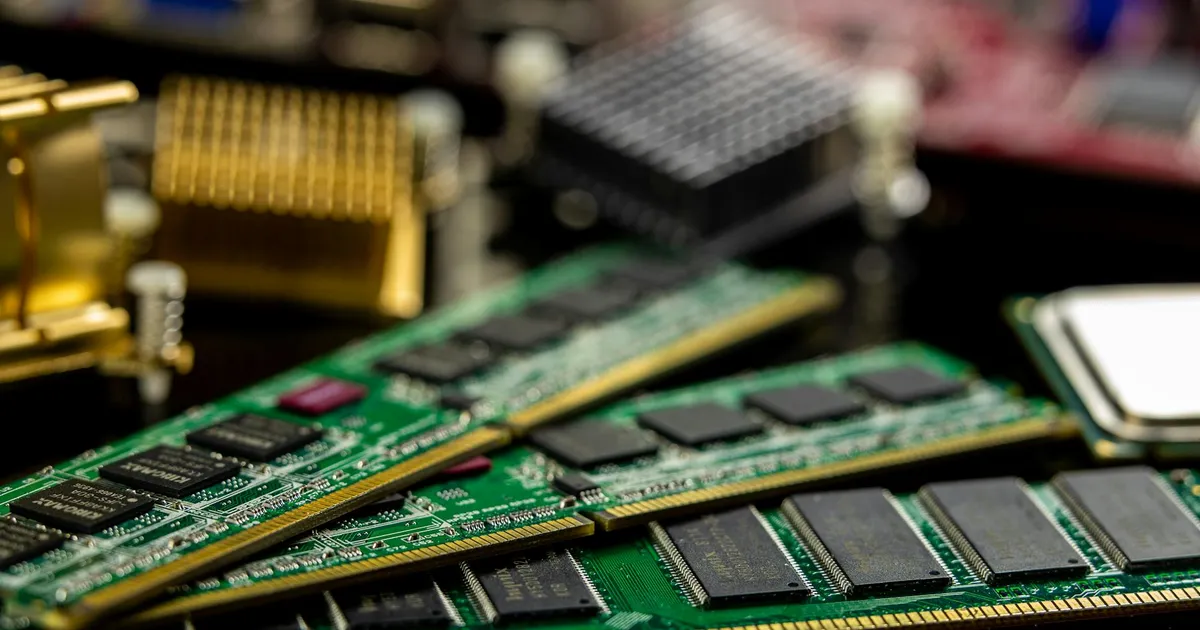

AI companies are eating the world's RAM supply, and everyone else is paying for it. A single OpenAI development run might consume 20-25% of global RAM wafer capacity, according to reporting from NPR's Scott Simon and The Verge's Sean Hollister. Memory chip prices have jumped 3-6x: a DDR5 kit that cost $150 a few months ago now runs $885.

This isn't a temporary blip. Samsung, SK Hynix, and Micron are deliberately shifting production away from standard DRAM toward High Bandwidth Memory (HBM), the stuff Nvidia and AMD need for AI accelerators. HBM carries up to 10x the profit margin of commodity memory, so the choice is obvious. The catch is that HBM is wildly inefficient to make, requiring 2-3 times the wafer input for the same capacity, so every gigabyte of HBM displaces two to three gigabytes of conventional DRAM. The manufacturers aren't rushing to build new fabs either; they remember what happened the last time they overproduced. Some additional capacity will come online in 2028, but that's two years away.

Gaming companies are already feeling it. Valve delayed its living room console and is rethinking pricing. Sony might push its next PlayStation to 2029. Mid-range phones could jump from $500 to $600 because memory accounts for 15-20% of their material cost. RAM prices for routers and set-top boxes are up roughly sevenfold. The shortage will work its way through smartphones, cars, smart appliances, and hospital equipment. There's no clear end in sight because nobody knows when the AI infrastructure buildout will slow down. As Hollister put it, we're watching the biggest infrastructure buildout in history, and it has an unquenchable thirst for memory.