News

The latest from the AI agent ecosystem, updated multiple times daily.

600 Lines of C# Is All You Need for a Working GPT Model

MicroGPT.cs packs a complete GPT implementation into roughly 600 lines of plain C#. No PyTorch, no TensorFlow, no NuGet packages. It's a faithful port of Andrej Karpathy's microgpt.py, built by developer milanm, and includes an autograd engine, character-level tokenizer, transformer layers with multi-head attention, and a full training loop. The project runs on .NET 10, trains a tiny model on human names, then generates new ones that sound plausible but don't exist.
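The autograd engine is what makes the rest of a project like this trainable. The sketch below is a minimal scalar reverse-mode autograd in Python, in the spirit of Karpathy's micrograd. It is not the C# code from MicroGPT.cs, and the class name `Value` and its methods are hypothetical names chosen for illustration.

```python
import math


# Hypothetical sketch of scalar reverse-mode autograd; not MicroGPT.cs's actual code.
class Value:
    """A scalar that remembers how it was computed so gradients can flow back."""

    def __init__(self, data, children=(), local_grads=()):
        self.data = data
        self.grad = 0.0
        self._children = children        # inputs this value was computed from
        self._local_grads = local_grads  # d(self)/d(child) for each child

    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data + other.data, (self, other), (1.0, 1.0))

    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data * other.data, (self, other), (other.data, self.data))

    def tanh(self):
        t = math.tanh(self.data)
        return Value(t, (self,), (1.0 - t * t,))

    def backward(self):
        # Order nodes so every node appears after all of its inputs.
        order, seen = [], set()

        def visit(node):
            if node not in seen:
                seen.add(node)
                for child in node._children:
                    visit(child)
                order.append(node)

        visit(self)
        self.grad = 1.0
        # Walk from the output back to the leaves, applying the chain rule.
        for node in reversed(order):
            for child, local in zip(node._children, node._local_grads):
                child.grad += local * node.grad


# z = x*y + x, so dz/dx = y + 1 = 4 and dz/dy = x = 2.
x, y = Value(2.0), Value(3.0)
z = x * y + x
z.backward()
print(x.grad, y.grad)
```

A training loop then repeats the same cycle: run the forward pass, call `backward()`, nudge each parameter against its `grad`, and reset the gradients to zero.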

Microsoft quietly removes Copilot branding from Windows 11 apps

Microsoft is removing Copilot buttons from Windows 11 apps including Notepad, Snipping Tool, Photos, and Widgets. The underlying AI features will remain, with Notepad replacing the Copilot button with a 'writing tools' menu that provides similar functionality.

Someone Firebombed Sam Altman's House. A Suspect Is in Custody.

A suspect has been arrested after a Molotov cocktail attack on the home of OpenAI CEO Sam Altman, according to Reuters.

Eve: Managed OpenClaw Without the Weekend Debugging

Eve is a managed version of the open-source OpenClaw agent framework, offering 100+ built-in skills for tasks like meeting coordination, invoice management, and expense reporting without the hassle of self-hosting and maintenance.

Why Your AI Inference is Slow: You're Fighting the Hardware

Your hardware is fast. Your code isn't. Writing on Martin Fowler's site, Caer Sanders explains why understanding CPU caches and eliminating locks makes AI inference dramatically faster, drawing on examples from Wayfair and LMAX.
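LMAX is best known for the Disruptor, a preallocated ring buffer where a single writer publishes entries by advancing a sequence counter instead of taking a lock. The Python sketch below shows only the data-structure idea (power-of-two capacity, index masking, separate producer and consumer sequences). Python cannot demonstrate the cache effects the article is about, and the class and method names are hypothetical.

```python
# Hypothetical sketch of a Disruptor-style ring buffer; not LMAX's actual code.
class RingBuffer:
    """Single-producer, single-consumer ring buffer with ever-increasing sequences."""

    def __init__(self, capacity):
        if capacity <= 0 or capacity & (capacity - 1):
            raise ValueError("capacity must be a power of two")
        self._slots = [None] * capacity  # allocated once, reused forever
        self._mask = capacity - 1        # seq & mask == seq % capacity for powers of two
        self._write_seq = 0              # next sequence the producer will fill
        self._read_seq = 0               # next sequence the consumer will read

    def try_publish(self, item):
        # Full when the producer is one whole lap ahead of the consumer.
        if self._write_seq - self._read_seq == len(self._slots):
            return False
        self._slots[self._write_seq & self._mask] = item
        self._write_seq += 1  # only the producer ever writes this counter
        return True

    def try_consume(self):
        if self._read_seq == self._write_seq:
            return None  # empty (this sketch does not support None as an item)
        item = self._slots[self._read_seq & self._mask]
        self._read_seq += 1  # only the consumer ever writes this counter
        return item
```

Because each counter has exactly one writer, no lock is needed to protect it. A real lock-free version in a systems language would still need atomic loads and stores with proper memory ordering, and would typically pad the counters onto separate cache lines to avoid false sharing. This Python version relies on neither.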

A Quadcopter Physics Sim That Fits in 30 Lines of Python

A walkthrough of building a 2D quadcopter physics simulation from scratch, covering equations of motion, state-space formulation, and Python implementation. The author spent six months replicating the University of Zurich's (UZH) champion-level drone racing research and is writing the tutorials they wished had existed.
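To give a sense of the model's shape, here is a minimal planar quadcopter in Python. It assumes a common textbook formulation: horizontal position y, altitude z, roll angle θ and their rates as the state, two rotor thrusts u1 and u2 at arm length ARM as inputs, and forward-Euler integration. The parameter values and function names are illustrative assumptions, not taken from the tutorial.

```python
import math
from dataclasses import dataclass


@dataclass
class State:
    y: float = 0.0      # horizontal position [m]
    z: float = 0.0      # altitude [m]
    theta: float = 0.0  # roll angle, counterclockwise positive [rad]
    vy: float = 0.0     # horizontal velocity [m/s]
    vz: float = 0.0     # vertical velocity [m/s]
    omega: float = 0.0  # angular rate [rad/s]


# Illustrative parameters (assumed, not from the tutorial).
MASS = 0.5      # kg
INERTIA = 0.01  # kg*m^2
ARM = 0.15      # m, distance from the center to each rotor
G = 9.81        # m/s^2


def derivatives(s, u1, u2):
    """Equations of motion: u1 is the rotor at +ARM, u2 the rotor at -ARM (newtons)."""
    thrust = u1 + u2
    ay = -thrust * math.sin(s.theta) / MASS        # tilting redirects thrust sideways
    az = thrust * math.cos(s.theta) / MASS - G     # vertical thrust minus gravity
    alpha = ARM * (u1 - u2) / INERTIA              # thrust difference produces torque
    return ay, az, alpha


def step(s, u1, u2, dt):
    """One forward-Euler step of the state-space model x' = f(x, u)."""
    ay, az, alpha = derivatives(s, u1, u2)
    return State(
        y=s.y + s.vy * dt,
        z=s.z + s.vz * dt,
        theta=s.theta + s.omega * dt,
        vy=s.vy + ay * dt,
        vz=s.vz + az * dt,
        omega=s.omega + alpha * dt,
    )
```

With both rotors at `MASS * G / 2` and zero tilt, thrust exactly cancels gravity and the craft hovers in place. Forward Euler keeps the sketch short; a real simulator would usually prefer a higher-order integrator such as RK4.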

Bank CEOs summoned to DC over Anthropic's vulnerability-hunting AI

The US Treasury secretary summoned major American bank CEOs to Washington to discuss cybersecurity risks posed by Anthropic's unreleased Claude Mythos AI model, which has exposed thousands of vulnerabilities in widely used software. The meeting included Fed chair Jerome Powell and heads of systemically important banks. Anthropic has restricted Mythos to select companies including Amazon, Apple, and Microsoft due to unprecedented cybersecurity risks.

Google AI Overviews: better answers, worse citations

A study by startup Oumi found Google's AI Overviews, powered by Gemini 2 and Gemini 3 models, were accurate 85% and 91% of the time respectively. Given Google's search volume, this translates to hundreds of thousands of false answers per minute. The study also found 'ungrounded' answers (where citations don't support the information) increased from 37% to 51% between the two model versions.
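Getting from percentages to "hundreds of thousands of false answers per minute" takes one multiplication: wrong answers per minute equal AI Overviews served per minute times the error rate (1 minus accuracy). The query volume below is a hypothetical placeholder, not a figure from the study or from Google.

```python
def wrong_answers_per_minute(overviews_per_minute, accuracy):
    """Expected incorrect AI Overviews per minute for an accuracy between 0 and 1."""
    return overviews_per_minute * (1.0 - accuracy)


# Hypothetical volume chosen only to illustrate scale; not a reported number.
HYPOTHETICAL_OVERVIEWS_PER_MINUTE = 5_000_000

for model, accuracy in [("Gemini 2", 0.85), ("Gemini 3", 0.91)]:
    wrong = wrong_answers_per_minute(HYPOTHETICAL_OVERVIEWS_PER_MINUTE, accuracy)
    print(f"{model}: ~{wrong:,.0f} wrong answers per minute")
```

Even at 91% accuracy, about 9 of every 100 overviews are wrong, so any volume of a few million overviews per minute lands in the hundreds of thousands.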

Anthropic Spots Third-Party Apps Through System Prompt Fingerprints

Research shows Anthropic detects third-party LLM clients like OpenCode and Aider through system prompt content analysis. Replacing custom prompts with Claude Code's official prompt bypasses detection entirely.

GitButler Raises $17M to Build Version Control for AI Agents

GitButler, co-founded by GitHub co-founder Scott Chacon, raised a $17M Series A led by a16z to build version control infrastructure designed for AI agent collaboration. The company released a technical preview CLI targeting trunk-based workflows, arguing that Git can't track AI provenance metadata like which LLM generated code or what prompt was used.

Tesla Circles Back to Cheap EV After Robotaxi Reality Check

Tesla is developing a new compact SUV priced below the Model 3, reversing Elon Musk's 2024 decision to kill the $25,000 Model 2 program in favor of Robotaxi. The 4.28-meter vehicle would use a smaller battery and single motor, with production planned for Shanghai. The shift comes as Tesla's Robotaxi program struggles with only about 8 unsupervised vehicles in Austin, while sales have declined from a 2023 peak of 1.81 million vehicles to 1.636 million in 2025.

MCP Beats Skills for LLM Service Connections

David Mohl argues MCP beats Skills for connecting LLMs to services. Skills work for knowledge transfer, but MCP's API abstraction means zero installs, automatic updates, and proper OAuth instead of plaintext tokens. His take: use MCP for services, Skills for knowledge only.

Microsoft's Copilot rollback: Mozilla exposes years of dark patterns

Mozilla criticizes Microsoft's aggressive rollout of Copilot in Windows, citing dark patterns, forced installations, and disregard for user consent. After Microsoft pulled Copilot from core Windows apps following user pushback, Mozilla's Linda Griffin framed it as part of a broader pattern of overriding user choice. Firefox 148 offers a contrasting approach with a centralized 'Block AI Enhancements' switch.

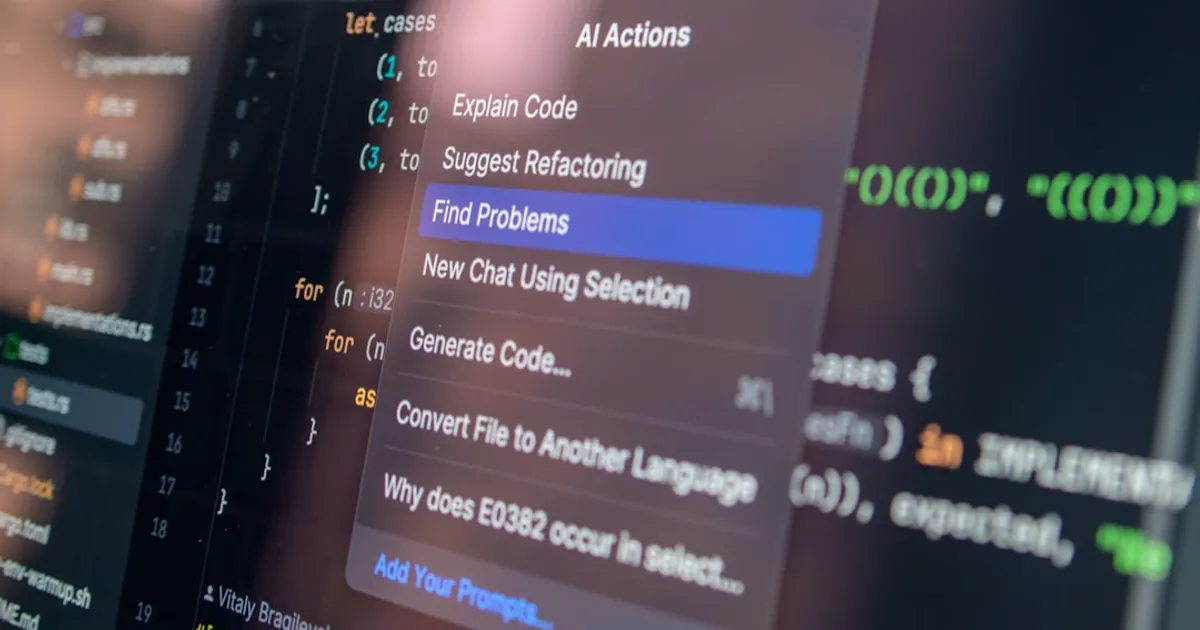

Twill.ai races coding agents on your task, delivers the best PR

Twill delegates coding tasks to cloud agents and returns finished pull requests. You can assign the same bug fix or feature to Claude Code, OpenCode, and Codex, run them in parallel, and compare the outputs. Working in sandboxed environments, the platform handles research, planning, implementation, and AI code review before delivering a PR.

Canonical Bets 2026 is RISC-V's Breakout Year

RISC-V has been promised for years. Canonical is betting 2026 is when it arrives for real, with Ubuntu LTS support and a path for AI-accelerated custom hardware.

Apple's UK iPhone Update: Think You're an Adult? Prove It.

Apple's iOS 26.4 update automatically enables web filtering and AI-powered 'Communication Safety' tools for UK users, restricting access until they verify their age through credit cards, driver's licenses, or pre-2008 Apple accounts. Big Brother Watch argues this isn't required by UK law, excludes millions without acceptable ID, and sets a dangerous precedent for device-level internet controls worldwide.

Marimo pair gives AI agents Python notebooks that remember

Marimo pair provides reactive Python notebook environments designed for AI agents to work in. The tool preserves state between sessions, so agents can pick up where they left off instead of starting from scratch every time.

Trivy attack proved every secrets manager has a runtime flaw

When attackers slipped malware into Aqua Security's Trivy scanner (v0.69.4), millions of CI/CD pipelines ran malicious code that harvested API keys. The attack revealed a flaw in every major secrets manager: tools like HashiCorp Vault and AWS Secrets Manager protect keys at rest but dump them as plaintext at runtime, where any compromised tool can read them. VaultProof's split-key architecture offers one way to close this gap.
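Split-key designs rest on a general idea: no single component ever holds the whole secret. A minimal illustration follows, assuming a simple 2-of-2 XOR split. This is a generic technique and not necessarily the scheme VaultProof uses. Each share alone is uniformly random and reveals nothing about the key.

```python
import secrets


def split_key(secret: bytes) -> tuple[bytes, bytes]:
    """Split a secret into two shares; either share alone reveals nothing about it."""
    pad = secrets.token_bytes(len(secret))              # uniformly random share
    masked = bytes(a ^ b for a, b in zip(secret, pad))  # secret XOR pad
    return pad, masked


def combine(share_a: bytes, share_b: bytes) -> bytes:
    """Recover the secret by XORing the two shares back together."""
    if len(share_a) != len(share_b):
        raise ValueError("shares must be the same length")
    return bytes(a ^ b for a, b in zip(share_a, share_b))


api_key = b"sk-example-not-a-real-key"  # placeholder, not a real credential
# Hypothetical placement: one share kept by the client, one by a separate service.
share_a, share_b = split_key(api_key)
recovered = combine(share_a, share_b)
```

Combining the shares still produces plaintext wherever `combine` runs. A practical design therefore also has to control where and when recombination happens, which is the runtime gap the story is about.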

Cars Were Already Robots. Now Tesla's Building Real Ones.

Modern cars are adopting robot architecture (steer-by-wire, 48V zonal architecture, centralized compute, sensor fusion), foreshadowing how such systems will spread to industries that move physical things. Tesla is converting Model S/X production to manufacture Optimus humanoid robots at 1M units/year starting 2027. Automotive suppliers like Hyundai Mobis and Schaeffler are entering the robotics actuator market, with implications for construction, logistics, defense, and agriculture industries.

Hotz Bets He'll Own a Zettaflop Before He Dies

George Hotz lays out his vision for a personal zettaflop-scale supercomputer (1e21 FLOPS), with detailed calculations on power consumption, solar infrastructure, and a $30M price tag.
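The power side of a zettaflop estimate comes down to one division: sustained FLOPS divided by energy efficiency in FLOPS per watt. The efficiency values below are assumed round numbers for illustration, not the figures Hotz uses.

```python
ZETTAFLOP = 1e21  # FLOPS, the target from the post


def power_watts(flops: float, flops_per_watt: float) -> float:
    """Electrical power needed to sustain `flops` at a given efficiency."""
    return flops / flops_per_watt


# Assumed efficiencies in FLOPS per watt: round numbers, not figures from the post.
for efficiency in (1e12, 1e13, 1e14):
    megawatts = power_watts(ZETTAFLOP, efficiency) / 1e6
    print(f"{efficiency:.0e} FLOPS/W -> {megawatts:,.0f} MW")
```

Each tenfold gain in efficiency cuts the required power, and so the solar capacity needed to supply it, by a factor of ten. The efficiency assumption dominates this kind of estimate.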

QVAC SDK Brings AI Inference to JavaScript, No API Keys Required

QVAC SDK runs AI models directly in JavaScript environments, no cloud required. The Hacker News launch signals growing demand for local inference tools among web developers.

Training Order Matters More Than You Think

The order in which you feed training examples to a model can change where training ends up. Experiments on an MXResNet trained on CelebA use Lie brackets to show that swapping two examples produces measurable parameter differences, and the technique catches real failures such as a model predicting impossible attribute combinations.
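A second-order expansion of two SGD steps shows why order matters. For per-example gradients g_A and g_B and learning rate η, taking step A then step B ends up about η² · (J_B g_A − J_A g_B) away from taking B then A. Here J is the Jacobian of a gradient (the Hessian of that example's loss), and the bracketed term is the Lie bracket of the two gradient fields. The Python sketch below checks this on two made-up quadratic losses, where the expansion happens to be exact. It illustrates the general idea and is not the article's experimental code.

```python
def matvec(m, v):
    return [m[0][0] * v[0] + m[0][1] * v[1], m[1][0] * v[0] + m[1][1] * v[1]]


def sub(u, v):
    return [a - b for a, b in zip(u, v)]


def scale(c, v):
    return [c * a for a in v]


# Two made-up quadratic per-example losses L(θ) = ½ θᵀHθ − bᵀθ, so ∇L(θ) = Hθ − b.
H_A, b_A = [[2.0, 0.5], [0.5, 1.0]], [1.0, 0.0]
H_B, b_B = [[1.0, -0.3], [-0.3, 3.0]], [0.0, 2.0]


def grad(H, b, theta):
    return sub(matvec(H, theta), b)


def sgd_step(H, b, theta, lr):
    return sub(theta, scale(lr, grad(H, b, theta)))


theta0, lr = [0.4, -0.7], 0.1

ab = sgd_step(H_B, b_B, sgd_step(H_A, b_A, theta0, lr), lr)  # example A first
ba = sgd_step(H_A, b_A, sgd_step(H_B, b_B, theta0, lr), lr)  # example B first
order_gap = sub(ab, ba)

# Lie bracket of the gradient fields at theta0: J_B g_A − J_A g_B (J is the Hessian here).
g_A, g_B = grad(H_A, b_A, theta0), grad(H_B, b_B, theta0)
bracket = sub(matvec(H_B, g_A), matvec(H_A, g_B))
predicted_gap = scale(lr ** 2, bracket)

print(order_gap, predicted_gap)  # equal up to rounding for quadratic losses
```

The gap vanishes when the two gradient fields commute. For real networks the bracket is only the leading term, but it gives a way to measure how much a particular swap of examples matters.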

Five Pure-Java Projects Running Transformer Models on CPUs and GPUs

Five open-source projects now enable transformer model inference entirely in Java, no Python or C++ required. Llama3.java, Gemma4.java, Jlama, GPULlama3.java, and Qxotic leverage modern JDK features like the Vector API, Panama FFI, and GraalVM Native Image to run models from Llama 3 to Gemma 4 on CPUs and GPUs.

OpenAI Tests Ads in ChatGPT. Your Chats Power Them.

OpenAI has begun testing advertisements in ChatGPT for users on Free and Go plans in the US. Ads appear below responses and are clearly labeled, and OpenAI says they do not influence ChatGPT's answers. Users can control ad personalization, and paid tiers (Plus, Pro, Business, Enterprise, Edu) remain ad-free. An ad-free option is also available to Free plan users, in exchange for lower usage limits and reduced feature access.

D&D's Combat Nightmare Gets Formal Verification

A technical deep-dive into using formal modeling and model-based testing (MBT) with Quint and XState to capture the complex combat rules of Dungeons & Dragons. The author wrote a formal specification covering all character classes, conditions, counterspell chains, and interrupt mechanics. MBT caught numerous bugs, including argument swaps, state sync issues, and design flaws. The author also used LLM-assisted translation to turn 12,700 community Q&A entries into Quint assertions.

Zoneless: Open-source Stripe Connect clone with $0.002 fees using USDC

Zoneless is an open-source drop-in replacement for Stripe Connect's payout functionality, enabling global marketplace payments using USDC on Solana with ~$0.002 fees. It offers a Stripe-compatible API, instant payouts, self-hosting capabilities, and is designed for AI agent economies and microtransaction marketplaces. The tool is already production-tested at PromptBase, an AI marketplace with 450,000+ users.

OpenAI Lobbies for Immunity on AI-Linked Mass Casualties

OpenAI is supporting an Illinois state bill (SB 3444) that would shield AI labs from liability in cases where AI models cause serious societal harms, including mass casualties or at least $1 billion in property damage. The bill defines frontier models as those trained using more than $100 million in computational costs, potentially affecting major AI labs like OpenAI, Google, xAI, Anthropic, and Meta.

ChatGPT's Racial Slur Traced to Metal Lyrics Jailbreak

A Hacker News thread links to a shared ChatGPT conversation in which the model allegedly used racial slurs. The link opened only the ChatGPT login page, not the conversation itself. Commenters mention a search for metal song lyrics but offer little substantive context for the headline's claim.

Scientists invented a fake disease. AI diagnosed people with it.

Researchers led by Almira Osmanovic Thunström created a fake skin condition called 'bixonimania' and uploaded fraudulent academic papers, complete with acknowledgements thanking Starfleet Academy, to test whether LLMs would propagate misinformation. Within weeks, major AI systems including ChatGPT, Google Gemini, Microsoft Copilot, and Perplexity began repeating the invented condition as real medical advice. The fake papers were even cited in peer-reviewed literature before being retracted.

Mythos Found Zero-Days. So Did a $0.11 Model.

AISLE found that a 3.6B-parameter model ($0.11 per million tokens) can detect the same FreeBSD zero-day that made Anthropic's Mythos famous. Its analysis of eight open-weight models shows that AI cybersecurity capability is 'jagged' and doesn't scale smoothly with model size, challenging the assumption that bigger models are the competitive moat.

OpenAI Pushes Bill: Our AI Could Cause Mass Death, Just Don't Sue

OpenAI backs Illinois bill SB 3444, which would shield AI labs from liability for critical harms caused by frontier models if they publish safety reports. The bill defines 'critical harms' as 100+ deaths or $1B+ in damage.

Tesla revives cheap EV after Musk's Robotaxi bet flops

Tesla is reportedly developing a new compact SUV priced below $34,000, reversing Elon Musk's 2024 decision to kill the affordable EV program in favor of Robotaxi. The new vehicle, to be produced in Shanghai, acknowledges that fully autonomous driving hasn't materialized as promised. Tesla's Robotaxi service operates only about 8 unsupervised vehicles in Austin, while Chinese competitors like BYD and Xiaomi push affordable EVs at prices Tesla can't match.

GPT-5.4 Still Falls for Prompt Injection in OpenClaw

GPT-5.4 remains vulnerable to prompt injection in OpenClaw. A security researcher demonstrated attacks in web fetch and email scenarios where the model executes untrusted code via multi-step exploits using encoded strings and tool call chains, ignoring security notices. The findings coincide with HackMyClaw, a $1,000 challenge testing similar techniques.

OpenAI Wants Immunity If Its AI Helps Kill a Hundred People

OpenAI is backing Illinois bill SB 3444, which would shield AI developers from lawsuits when their models cause mass death of 100+ people or at least $1 billion in property damage. Developers get protection as long as they didn't intentionally cause harm and published safety reports.

40% Unemployment and 3-Day Work Weeks Are Mathematically Identical

A 40% unemployment rate and a 3-day work week are the same thing, mathematically. Economist Alex Tabarrok argues that AI's impact on work is a policy choice, not destiny. Between 1870 and today, US work hours fell 40% without unemployment spikes. We absorbed that shift through longer childhoods and retirements. AI could follow the same path with the right policy levers.
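The equivalence concerns total hours worked. Cutting a 5-day week to 3 days removes 40% of each worker's hours. That leaves the same total labor as keeping a 5-day week and idling 40% of workers. A quick check, assuming a hypothetical workforce of 100 people:

```python
def total_worker_days(workers: int, days_per_week: int) -> int:
    """Labor supplied per week, measured in worker-days."""
    return workers * days_per_week


WORKFORCE = 100  # hypothetical workforce size, chosen for round numbers

full_time = total_worker_days(WORKFORCE, 5)        # everyone works 5 days
three_day_week = total_worker_days(WORKFORCE, 3)   # everyone works 3 days
forty_pct_unemployed = total_worker_days(60, 5)    # 40 of 100 idle, 60 work 5 days

removed = (full_time - three_day_week) / full_time
print(three_day_week, forty_pct_unemployed, removed)  # 300 300 0.4
```

The totals match. The difference lies in how the reduction is distributed across people, and that distribution is where policy comes in.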

Messy code costs more when AI agents do the reading

The codebases that work best for AI agents probably won't look like what we'd write for humans. Flat hierarchies beat abstractions, and your CLAUDE.md file matters more than your linter.

Locked out by age bias, skilled workers now train AI for $21/hr

Skilled workers over 50, locked out of their fields by age discrimination, are turning to AI data annotation as a last resort. The Guardian profiles professionals including a former IT manager, ER physician, and academic who now label data for OpenAI, Google, and Meta through contracting firms. While top experts can earn $180/hour, most make $20-40/hour with no benefits. These 'bridge jobs' keep workers afloat while they train the AI that might replace them.

OpenAI shelves £31bn UK deal that was mostly hot air

OpenAI pulled the plug on its £31bn Stargate UK project, blaming energy costs and regulations. A Guardian investigation had already exposed the deal as mostly empty promises. The Essex 'supercomputer' site was still scaffolding in March. OpenAI's actual commitment was vague: 'exploring the offtake' of 8,000 Nvidia GPUs at datacenters built by Nscale, a startup with zero completed projects.

Gen Z Turns Against AI as Entry-Level Jobs Vanish

A Gallup study of how young adults (Gen Z) feel about AI finds declining hope and rising anger. HN commenters attribute the shift to job displacement concerns, as organizations cut junior and intern hiring in favor of AI adoption.

Whack-a-Mole: Finetuning Reactivates Copyrighted Text in LLMs

A new paper shows finetuning bypasses safety alignment in LLMs, causing GPT-4o, Gemini-2.5-Pro, and DeepSeek-V3.1 to reproduce 85-90% of held-out copyrighted books from semantic descriptions alone. The effect generalizes across authors and reveals an industry-wide vulnerability, undercutting legal defenses that rely on the strength of copyright protection measures.

Lafargue Saw Your AI Anxiety Coming in 1883

Historian Robert Zaretsky connects Paul Lafargue's 1883 pamphlet 'The Right to Be Lazy' to modern fears about AI displacing workers, arguing that idleness as emancipation offers a useful lens for thinking about automation.

Nile Local turns your laptop into a data lake

An AI-powered data IDE called Nile Local runs entirely on your laptop, aiming to replace cloud data tools for privacy-conscious teams. Unlike general chatbots, it provides a structured environment for data workflows. But sparse documentation makes it hard to evaluate whether it delivers on that promise.

Anthropic Ships Managed Agents: No More DIY Infrastructure

Claude Managed Agents is a pre-built, configurable agent framework that runs in managed infrastructure, designed for long-running tasks and asynchronous work. It provides a fully managed environment where Claude can read files, run commands, browse the web, and execute code securely, with built-in prompt caching, compaction, and performance optimizations. Currently in beta with rate limits of 60 create requests and 600 read requests per minute.
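A client-side rate limiter makes the beta limits of 60 create and 600 read requests per minute easier to respect. Below is a generic token-bucket sketch in Python. It does not use Anthropic's SDK, and the class name, the injectable clock, and the bucket sizes are assumptions for illustration.

```python
import time


class TokenBucket:
    """Allows bursts up to `capacity`, refilling at `rate_per_minute` tokens per minute."""

    def __init__(self, rate_per_minute, capacity=None, clock=time.monotonic):
        self.rate = rate_per_minute / 60.0  # tokens per second
        self.capacity = capacity if capacity is not None else rate_per_minute
        self.tokens = float(self.capacity)  # start full
        self.clock = clock                  # injectable so tests can fake time
        self.last = clock()

    def try_acquire(self):
        """Take one token if available; return False if the caller should wait."""
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# Hypothetical setup: one bucket per published limit.
create_limiter = TokenBucket(rate_per_minute=60)
read_limiter = TokenBucket(rate_per_minute=600)
```

A bucket that starts full allows a burst of 60 creates at once. If the server counts requests differently (for example, in fixed one-minute windows), a smaller `capacity` is the safer choice.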

Databricks co-founder wins ACM Prize, claims AGI is already here

Databricks co-founder and CTO Matei Zaharia, creator of Apache Spark, wins the 2026 ACM Prize in Computing with a $250,000 award. In an interview, he states that 'AGI is here already, it's just not in a form that we appreciate,' arguing that society should stop applying human standards to AI models. He warns about security risks with AI agents like OpenClaw and expresses excitement about AI for automating research in biology and engineering.

Court won't block Pentagon blacklisting Anthropic over AI ethics

Anthropic just lost a key round against the Pentagon. A federal appeals court won't stop the military from blacklisting the AI company over its refusal to let Claude power surveillance or autonomous weapons. The stakes are billions in government contracts.

AI Did It in 12 Minutes. It Took Me 10 Hours to Fix It

Ibrahim Diallo used GLM-5 from z.ai to generate roughly 5,000 lines of PHP for a media manager project in 12 minutes, then spent 10 hours refactoring it into 1,254 lines of maintainable code. The experience reinforced his belief that developers need a mental model of their application to fix AI-generated code when it breaks. Hacker News commenters largely agreed, warning against deploying "vibecoded" applications.

Waymo's Robot Cars Run Out of Road in NYC

Waymo's permits for testing autonomous vehicles in New York City expired on March 31, ending testing operations that had been running in Brooklyn and Manhattan since last summer. The company had eight robot cars operating with safety drivers and reported zero collisions. Waymo, a subsidiary of Alphabet, operates driverless vehicles in 10 other U.S. cities and hopes the state DMV permit will be renewed through upcoming budget negotiations.

Relvy Automates On-Call Runbooks Because Nobody Updates Your Wiki

Relvy, a Y Combinator Winter 2024 startup, wants to kill the runbooks sitting forgotten in your Notion workspace. Built by two former Samsara engineers, the platform handles routine incidents automatically while escalating the tricky ones to humans. Hacker News users confirmed the problem is real, but questioned whether specialized tools can hold off general AI agents.

Mass Effect Artist: DLSS 5 Risks Erasing Game Art's Soul

Veteran game artist Mark Linington (Mass Effect, Halo, Overwatch 2) says Nvidia's DLSS 5 has crossed from enhancement into reinterpretation, risking the 'soul' of game art. He wants hands-on artist control with reference images and lighting direction, not just sliders. Most major studios already use AI in production, but Linington draws a line between AI as a careless shortcut versus a genuine production partner.

Vercel Claude Code plugin wants to read your prompt

An investigation into privacy concerns with the Vercel plugin for Claude Code, which collects telemetry data including full bash command strings and prompts across all projects. The consent mechanism uses prompt injection rather than proper UI elements, and data collection occurs even on non-Vercel projects without clear disclosure.