Aishwarya Goel, an AI industry professional who uses Claude and ChatGPT daily, published an essay on March 15, 2026, arguing that heavy reliance on AI tools may be quietly eroding a critical phase of human thought. The piece, titled "Delay the Inference," does not reject AI assistance outright. Goel is explicit that tools like Claude have unlocked capabilities she previously found out of reach, particularly in writing and brainstorming. Her concern is more specific: that the reflex to open a chat window before a thought has fully formed may be short-circuiting the internal, generative stage where original ideas take shape.

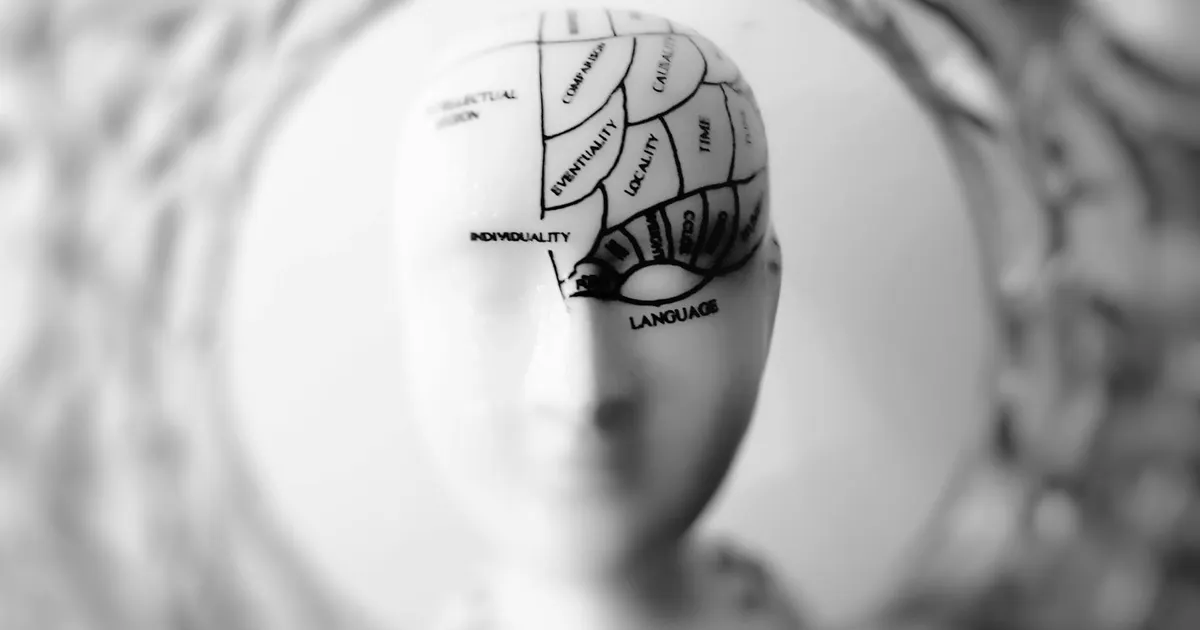

The essay's central concept draws on a deliberate double meaning. In machine learning, inference is the step where a model converts a prompt into output. In human cognition, inference is the process of arriving at a conclusion. Goel argues that both processes are degraded when triggered prematurely. She references neuroscience research on the default mode network — the brain system associated with mind-wandering, memory consolidation, and associative thinking — to suggest that the value lost to early prompting is not raw intelligence, but the unstructured background processing that precedes genuinely original thought.

Goel offers her own workflow as a practical framework: collect raw notes in Apple Notes over weeks, draft a rough version independently, do her own research, and only then bring AI in for brainstorming and refinement. Think first, prompt later. The sharpest observation in the essay is behavioral: reaching for a chat window when a thought goes quiet is structurally the same as checking a phone when a conversation lulls. Both are reflexes triggered by cognitive discomfort, not by a genuine need. The discipline she proposes is less about AI skepticism than about recognizing that reflex for what it is.