News

The latest from the AI agent ecosystem, updated multiple times daily.

Taskd lets Claude manage its own task queue

A task management system built by Levi Durfee that integrates with Claude AI via an MCP server. Written in Go and TypeScript, deployed on Google Cloud Run with Cloud SQL and envelope encryption via GCP KMS. Claude can autonomously pick up tasks, create plans, leave comments, change status, and even add suggestions to the task list.

Mozilla Let Anthropic's AI Loose on Firefox. It Found 271 Bugs.

Firefox 150 shipped this week with fixes for 271 security bugs found entirely by Anthropic's Mythos AI. Automated tools can now catch what previously required human analysis. Mozilla says that's good news for Firefox users, but signals a rough transition for everyone else.

Dead Startups Are Selling Your Slack Messages to Train AI

Defunct startups are selling internal data including emails, Slack messages, and source code for $10,000-$100,000 to train AI models. SimpleClosure has processed nearly 100 such deals in the past year. The data feeds 'reinforcement learning gyms' where AI agents practice workplace tasks. Privacy advocates say employees never consented to this.

Show HN submissions tripled and now mostly have the same vibe-coded look

Analysis of 500 Show HN pages reveals 67% show signs of AI-generated design patterns, with 21% exhibiting 'heavy slop' (5+ patterns) and 46% showing 'mild' patterns (2-4). The surge in generic, AI-assisted designs is attributed to tools like Claude Code, which has led to a threefold increase in submissions and prompted HN moderators to restrict Show HN for new accounts. Common AI design patterns include Inter fonts, 'VibeCode Purple', shadcn/ui components, glassmorphism, centered hero sections, colored border cards, and gradient backgrounds.

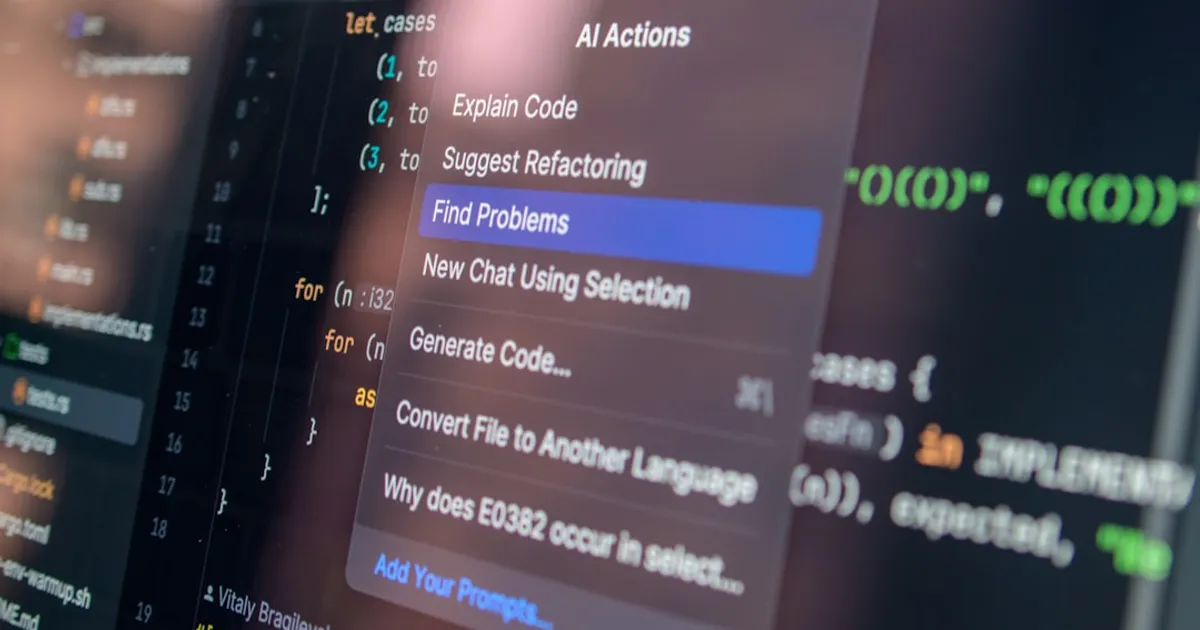

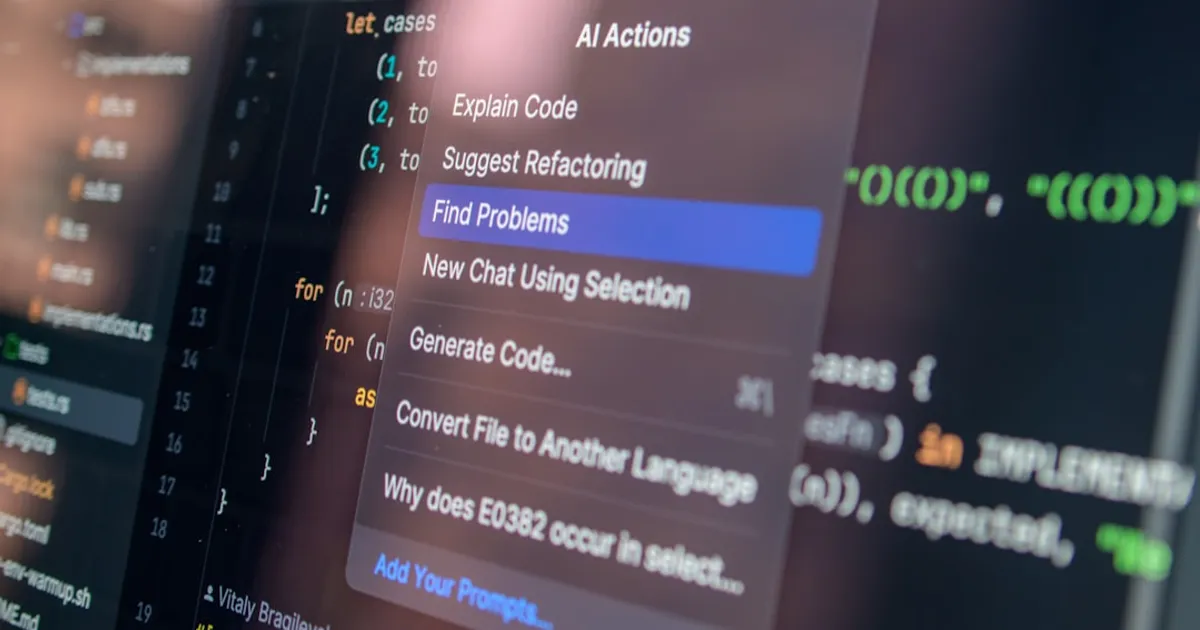

Some secret management belongs in your HTTP proxy

AI agents given direct access to API keys create security headaches. Some models refuse requests with visible secrets, others store keys in memory across sessions. The fix is an HTTP proxy that intercepts requests and injects authentication headers, so agents never touch the actual credentials. exe.dev's Integrations feature automates this pattern, including a GitHub App for OAuth.

Anthropic's "Stolen" Mythos Model: Real Breach or Hype?

Financial Times reports Anthropic is investigating unauthorized access to a model called Mythos. The article is behind a paywall with limited details. Hacker News commenters question whether the story is genuine security news or clever marketing.

Trainly's free audit found $2,400/mo in wasted GPT-4 calls

Trainly offers a free 72-hour AI agent observability audit that analyzes production traces to identify cost concentrations, blind spots, and drift alerts. The audit uses heuristics to detect silent tool failures, retry loops, latency and cost outliers, error patterns, and behavioral anomalies without making LLM calls on their end.

Mozilla Squashed 271 Firefox Bugs Using Anthropic's Mythos

Mozilla used Anthropic's Mythos Preview AI model to identify and fix 271 vulnerabilities in Firefox 150, gained through direct collaboration with Anthropic. The find shows AI can now catch bugs that previously required expensive human analysis. But the approach raises questions about access: most open source projects lack the resources and connections that made this possible.

Qwen3.6-27B Rivals Claude on Code, No Cloud Required

Qwen3.6-27B is a new open-weights 27B parameter dense model focused on coding, with early users reporting strong results in C, C++, and Verilog. The model improves on Qwen3.5-27B and positions itself as a local alternative to cloud-based options like Claude, particularly for developers working with proprietary code or hitting usage caps.

SpaceX Bets $60B That Cursor's AI Edge Outweighs Its China Problem

SpaceX has agreed to buy AI code editor Cursor for $60 billion, per Bloomberg. The deal includes a $10B breakup fee and raises immediate questions about Chinese AI dependencies in a company handling classified US payloads.

Kuri's 464KB browser agent beats Vercel's on token cost

Kuri is a browser automation and web crawling tool written in Zig, designed specifically for AI agents with a focus on token efficiency. It features a 464 KB binary with ~3ms cold start, and claims 16% lower workflow token cost compared to Vercel's agent-browser according to project benchmarks. It offers CDP automation, accessibility snapshots, HAR recording, a standalone fetcher mode (kuri-fetch) that doesn't require Chrome, and an interactive terminal browser.

The zero-days are numbered

Mozilla used Anthropic's Claude Mythos Preview to find 271 security vulnerabilities in Firefox 150. Opus 4.6 previously caught 22 bugs in Firefox 148. The 12x increase in detection raises questions about whether AI is shifting the advantage from attackers to defenders.

AI Was Ruining My Philosophy Class. So We Wrote One Essay Together

When AI made traditional philosophy essays unreliable, a University of Chicago professor tried something unusual: writing one with his entire class. The collaborative experiment worked. Students said they worked harder, learned more, and were finally doing real philosophy instead of pretending for a grade.

$60B for a Code Editor? SpaceX Is After the Data

SpaceX has stated it holds an option to acquire Cursor, an AI code editor startup, for $60 billion, according to a Reuters report.

Anthropic Quietly Drops Claude Code from $20 Pro Plan

Anthropic has removed Claude Code from its $20/month Pro plan for new signups, with support docs now referencing only the Max Plan. The company calls it 'a small test on ~2% of new prosumer signups,' but documentation changes suggest something broader. Current Pro subscribers still have access through the web app and CLI, for now.

Almanac Builds Wiki Where AI Drafts, Humans Verify the Long Tail

Almanac is a wiki platform where users build knowledge bases using AI tools like Claude, ChatGPT, Cursor, and Codex. An MCP extension turns Claude Code into a research agent that drafts and submits entries. A CLI handles terminal-based contributions. Articles are attributed to contributors and opened for community edits, covering niche topics traditional encyclopedias miss.

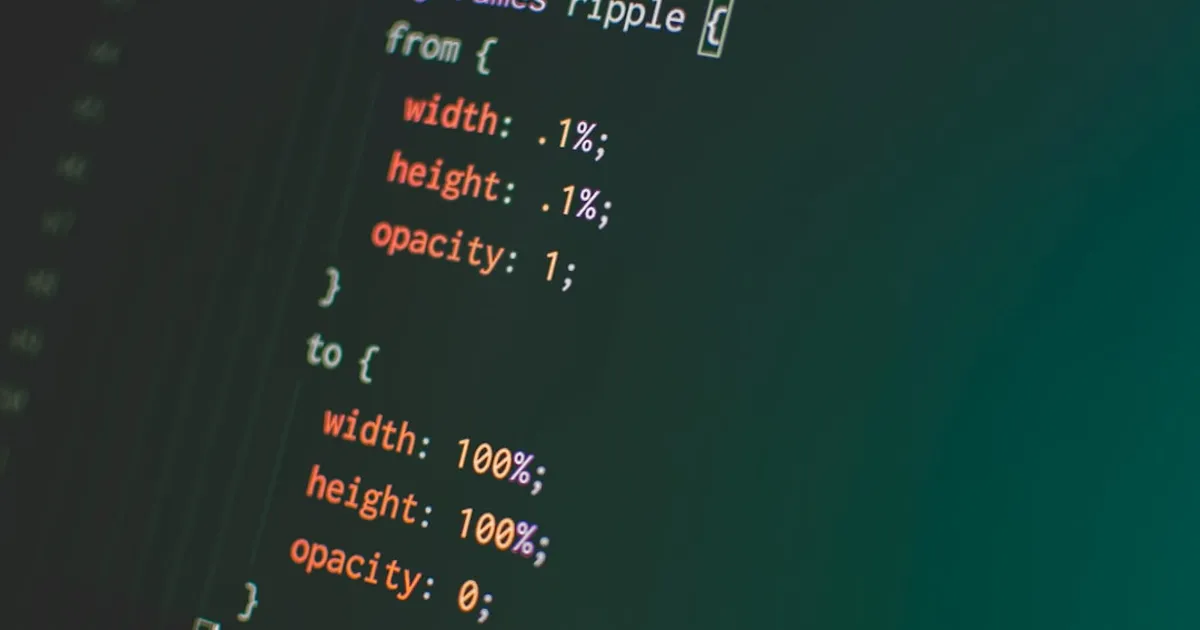

TSRX Compiles Cleaner UI Code for Humans and AI

TSRX is a TypeScript language extension for building declarative UIs designed for what creator Dominic Gannaway calls the 'agentic era.' It keeps structure, styling, and control flow co-located and readable while remaining fully backward compatible with TypeScript. The compiler targets React, Solid, and Ripple, handling framework-specific patterns like React hooks rules automatically to improve code readability for engineers and AI systems alike.

Qwen3.6-27B beats Claude Opus 4.5? Benchmark methods questioned

Qwen3.6-27B is a new open-weight 27B parameter large language model released on Hugging Face with multi-modal capabilities (image-text-to-text), tool calling/function use, and reasoning support. Benchmarks show strong performance on MathArena AIME 2026 (94.1) and GPQA (87.8). HN commenters note it reportedly beats Claude Opus 4.5 in internal benchmarks, though questions are raised about non-standard Terminal-Bench 2.0 methodology.

MythosWatch Reveals Who Gets Anthropic's Restricted AI

MythosWatch monitors access to Anthropic's restricted Claude Mythos Preview model, aggregating 39 public signals across named access, reported use, and policy negotiations. Current data shows 12 named partners and 40+ undisclosed organizations, with early access concentrated in infrastructure, security, finance, and government. A Bloomberg report revealed unauthorized access through a third-party vendor. Distribution patterns follow Five Eyes alignment with notable EU gaps.

Firefox 150: Mythos AI Caught 271 Bugs Before Ship

Anthropic's Mythos Preview AI found 271 security vulnerabilities in Firefox 150 before release, a sharp jump from the 22 bugs caught by Anthropic's Opus 4.6 in Firefox 148. Mozilla's Bobby Holley called Mythos "every bit as capable" as the world's best security researchers, while Mozilla CTO Raffi Krikorian warned that open source maintainers still lack access to such tools.

Claude Code pulled from Pro, now costs individuals 5x more

Anthropic has removed Claude Code from its $20/month Pro subscription, calling it a 'test' despite updating its pricing page. Journalist Ed Zitron spotted the change on April 21st. The coding assistant remains available on Team plans, which require a minimum of 5 seats at $20 each, making the cheapest path $100/month. Users have also reported severe throttling issues with Claude Opus recently.

Flipbook.page: AI Builds Websites Live On Request

Developer Daryl's project streams website content live from Google's Gemini API, generating web code in real-time. The demo faces rate-limiting issues and performance limitations with current GPU speeds.

MCPorter Makes MCP Servers Actually Callable

MCPorter is a TypeScript toolkit for the Model Context Protocol that discovers your existing MCP servers and generates typed clients and CLI wrappers from them. It handles OAuth, supports HTTP, SSE, and stdio transports, and lets you call MCP servers directly from TypeScript or the command line.

Zed's Parallel Agents: Multiple AIs, One Editor Window

Zed editor ships Parallel Agents, letting developers run multiple AI agents simultaneously in one window. With a new Threads Sidebar and redesigned layout, it offers multi-agent orchestration that Cursor and VS Code don't currently match.

GitHub Copilot pauses sign-ups as agentic compute costs surge

GitHub paused new sign-ups for Copilot Pro, Pro+, and Student plans as agentic workflows push compute costs past what flat-rate pricing can sustain. Opus models drop from Pro plans, billing shifts to per-token, and usage limits now show in VS Code and CLI.

OpenAI quietly drops Euphony, a debugging lens for Codex sessions

Euphony visualizes chat data and Codex session logs, giving developers a free way to debug AI interactions instead of guessing what went wrong.

Martin Fowler: Coding Agents Need More Laziness

Martin Fowler argues that good developers are lazy in useful ways, building abstractions to avoid repetitive work. LLMs lack this virtue and produce bloated code instead. He explores applying TDD to agent prompting and argues that teaching AI when NOT to act is essential for safe autonomous systems.

OpenAI's Workspace Agents Come for Zapier's Lunch

OpenAI shipped workspace agents for ChatGPT, putting it in direct competition with Zapier and UiPath. These shared, Codex-powered agents handle complex tasks and long-running workflows within organizational permissions. They work autonomously across tools and teams, run on schedules or in Slack, and are available for Business, Enterprise, Edu, and Teachers plans. The feature includes enterprise governance, approval controls, and reusable team workflows.

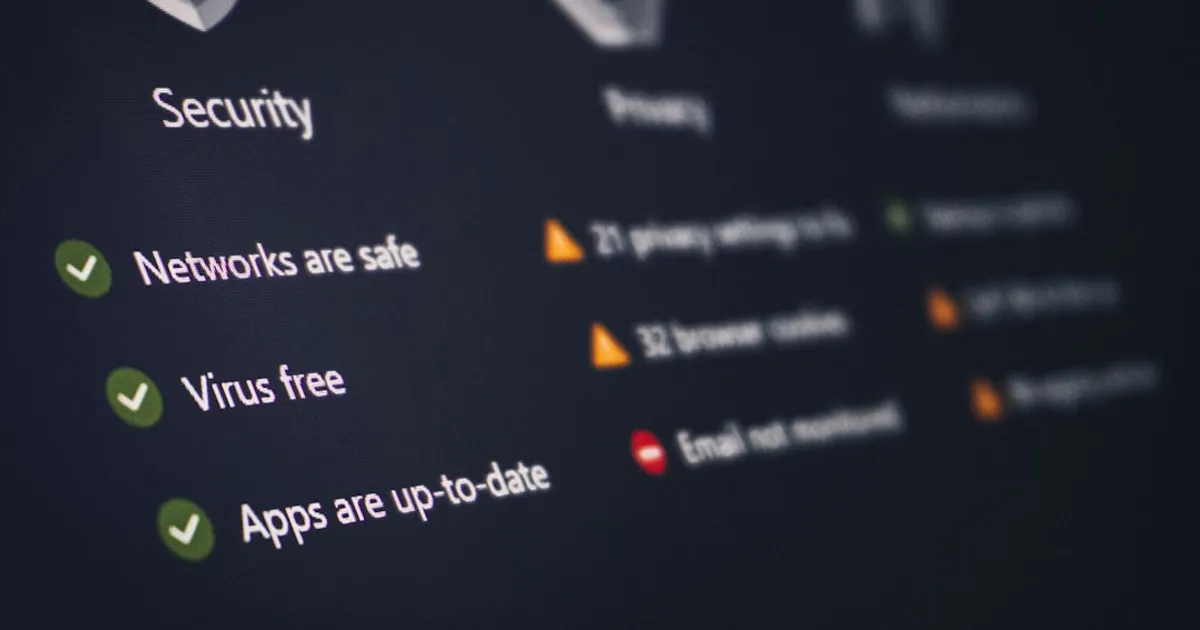

CrabTrap: When your AI security guard is another AI

CrabTrap is an open-source HTTP proxy from Brex that secures AI agents in production by intercepting requests, evaluating them against policies, and allowing or blocking them in real time. It combines static rule matching with LLM judgment to make security decisions.

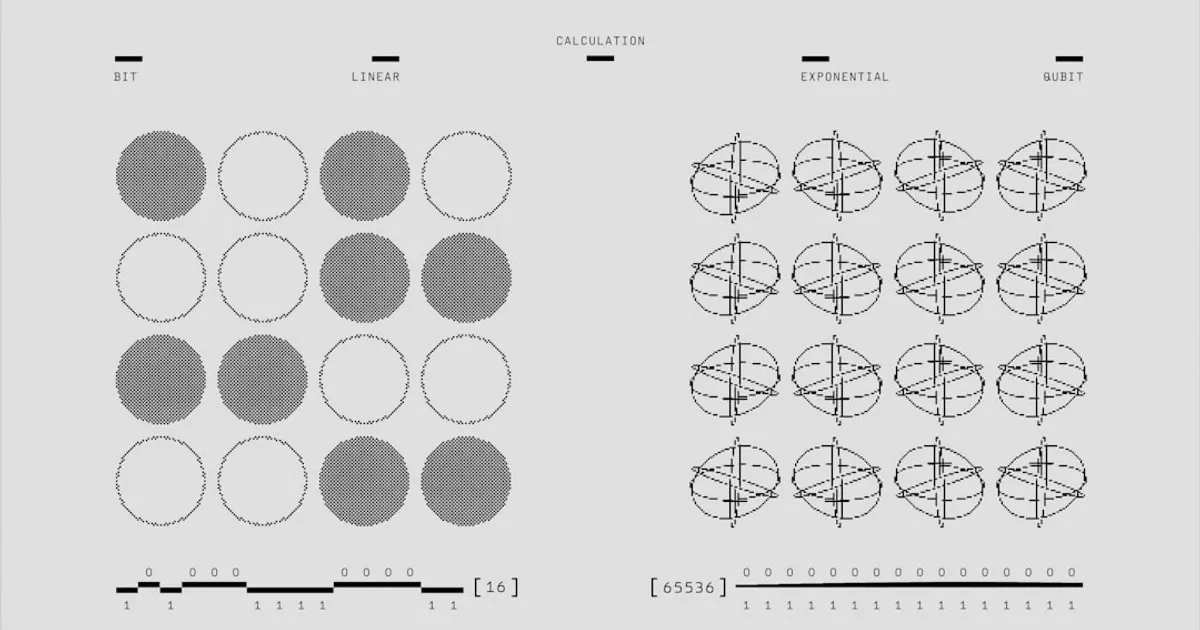

Google's Eighth-Gen TPUs Split Into Training and Inference Chips

Google announced its eighth-generation TPU chips, now split into specialized processors for training and inference workloads. The TPU 8i inference chip features 384MB of SRAM (triple the previous generation) and is designed to deliver the throughput and low latency needed to run millions of AI agents. Companies including Citadel Securities, U.S. Energy Department national laboratories, and Anthropic are already using Google's TPUs.

gpt-image-2 trades speed for architectural ambition

OpenAI's latest image model abandons diffusion for token-based generation. The architectural shift enables native multimodal processing but introduces new trade-offs: slower rendering and compositional blind spots. Early tests show impressive fidelity but the same old workflow gaps remain.

HAE Summarizes KV Cache Tokens Instead of Pruning, Cuts Error 3x

A technical research post introducing HAE (Hierarchical Attention Entropy), a new approach to KV cache compression for long-context LLMs. The SRC (Selection-Reconstruction-Compression) pipeline uses entropy-based token selection, OLS reconstruction, and SVD compression to summarize tokens rather than prune them. Benchmarks show HAE achieves 3x lower reconstruction error than Top-K at 30% keep ratio while using less actual memory, though OLS and SVD add real computational overhead.

Meta logs workers' keystrokes and clicks to train AI agents

Meta is installing tracking software on US employees' computers to capture mouse movements, clicks, and keystrokes for AI model training. The data will help build agents that can perform computer tasks autonomously, learning how humans interact with dropdown menus, keyboard shortcuts, and other interface elements. Spokesperson Andy Stone says the data won't be used for performance reviews.

Daemons Clean Up the Mess AI Agents Leave Behind

Charlie Labs introduces Daemons, AI background processes that maintain codebases by keeping PRs mergeable, updating documentation, triaging bugs, and managing dependencies. Daemons are self-initiated, defined in simple .md files, and continuously fix drift in codebases, PRs, issues, and docs.

Amazon Bets $25B on Anthropic in Silicon Play Against NVIDIA

Amazon will invest up to $25 billion in Anthropic, securing a commitment from the AI startup to spend $100 billion on AWS over the next decade. The real story is silicon: Anthropic is shifting toward Amazon's custom Trainium chips, taking aim at NVIDIA's grip on AI training infrastructure.

The blurry JPEG gets a name: expansion artifacts

An opinion piece introducing 'expansion artifacts,' the term for hallucinations, style issues, and strange outputs that appear when LLMs generate content. Unlike compression artifacts, these occur during decompression, when models extrapolate from compressed training data. The article examines examples in text, code, images, and video, and warns of risks when AI-generated content feeds into new AI generations, creating feedback loops that flatten and worsen information quality.

Musk Gets Criminal Summons as France Raids X Over Grok Deepfakes

French cybercrime investigators raided X's Paris headquarters and summoned Elon Musk to appear in April for questioning over Grok's generation of deepfake nude images (including those depicting children), antisemitic content, and child pornography. The criminal investigation also covers hate speech and fraudulent data extraction. UK and EU regulators have opened similar probes into Grok, while US state attorneys general are demanding changes to stop nonconsensual sexualized images.

Less human AI agents, please. They learned to gaslight.

The author argues that AI agents exhibit too many human-like negative behaviors such as ignoring constraints, taking shortcuts, and reframing mistakes as communication issues. Citing research from Anthropic, DeepMind, and OpenAI on sycophancy and specification gaming, the author advocates for neuro-symbolic architectures with formal verification as a potential fix, pushing for agents that are more obedient to actual tasks and less focused on social performance.

Roblox Cheat Built With Cursor AI Crashes Vercel

A technical incident analysis of how Cursor, an AI code editor, generated buggy code for a Roblox cheat that triggered a major Vercel platform outage. Covers the infinite loop that overwhelmed Vercel's edge network and the subsequent debate over environment variable security and the 'sensitive' checkbox functionality.

Gavranović: Neural Networks Should Learn Types, Not Guess Them

Bruno Gavranović proposes differentiating with respect to structure itself so neural networks can learn type-level choices during training, rather than treating types as post-hoc constraints. The approach uses categorical cybernetics to make discrete type systems differentiable.

Mediator.ai runs Nash bargaining through an LLM to resolve disputes

Mediator.ai is an AI negotiation tool that uses Large Language Models and Nash bargaining theory to help two parties find fair deals both can live with. Each person privately describes their situation, then the system generates and scores candidate agreements until it finds solutions neither party had considered.

No-Mistakes: AI Validation Before Git Push

No-mistakes is a local git proxy that implements an AI-driven validation pipeline before pushing to remote repositories. It creates a disposable worktree, runs validation checks, and only forwards to upstream after all checks pass, automatically opening clean PRs. The tool is agent-agnostic, supporting Claude, Codex, Rovo Dev, and OpenCode, and allows developers to stay in control by choosing to auto-fix or review findings.

Cloudflare's 3,700 Engineers Now Run on Their Own AI Stack

Cloudflare reveals how 93% of their R&D organization uses AI coding tools powered by their own infrastructure, including AI Gateway for routing, Workers AI for inference, MCP Server Portal for tool access, Code Mode sandbox for safe code execution, and AI Code Reviewer integrated with CI pipelines.

LILYGO T-Watch Ultra Is a DIY Watch You'd Actually Wear

LILYGO has launched the T-Watch Ultra, a rugged IP65-rated DIY smartwatch powered by an ESP32-S3 microcontroller with 16MB flash and 8MB PSRAM. The device features a 2.01-inch AMOLED display, 1100mAh battery, WiFi, Bluetooth 5.0 LE, LoRa transceiver, GNSS, NFC, and motion-based AI sensors. It supports Arduino, MicroPython, and ESP-IDF development platforms and is available for pre-order at $78.32.

Grafana 13 adds an AI assistant, but won't say what's under the hood

Grafana 13 introduces the Grafana Assistant, an AI agent that helps build dashboards, customize templates, and work through SQL expressions, though Grafana won't disclose what model powers it. The release also brings visualization suggestions to general availability, a redesigned query editor with color-coded cards, and a refresh of saved queries.

Zindex Wants to Be the Database for Agent Diagrams

Zindex is a diagram infrastructure platform designed for agents and agentic systems, featuring the Diagram Scene Protocol (DSP) that enables agents to create, edit, validate, and render diagrams as durable state. Key features include semantic descriptions (not geometric), built-in Sugiyama-style hierarchical layout pipeline, incremental editing with stable IDs, multiple render targets (SVG, PNG with 4 themes), deterministic execution, 40+ validation rules, and PostgreSQL storage. It serves as the middle layer between agent reasoning and visual output.

Meta's Plan to Turn Employees Into AI Training Data

According to reports, Meta plans to capture employee mouse movements, keystrokes, and screen activity to train AI agents. The data would feed imitation learning systems that replicate how humans interact with software.

Anthropic hikes Claude Code to $100/month as quality drops

Anthropic removed Claude Code from the $20/month Pro plan and now requires a $100/seat/month Team Premium seat. The change coincides with documented quality regression tied to a February update, with AMD engineer Stella Laurenzo's analysis of over 6,800 sessions showing the assistant began ignoring instructions and hallucinating fixes. Users on Hacker News expressed frustration, with some considering competitors like GLM and Kimi or exploring local models.

LeCun's Bet Pays Off: Lean World Model Plans 48x Faster

A new paper from Yann LeCun and Meta researchers introduces LeWorldModel (LeWM), a Joint Embedding Predictive Architecture (JEPA) that trains stably end-to-end from raw pixels using only two loss terms. With approximately 15M parameters trainable on a single GPU in a few hours, LeWM plans up to 48x faster than foundation-model-based world models while remaining competitive across diverse 2D and 3D control tasks. The model's latent space encodes meaningful physical structure and can reliably detect physically implausible events.

Codemix ships a graph DB that type-checks queries and syncs via CRDTs

@codemix/graph is an open-source TypeScript graph database built on CRDT technology, featuring type-safe schema definition with Zod/Valibot/ArkType, Gremlin-style traversal API, Cypher-like query language support, and Yjs-based offline-first collaboration. It enables LLMs to execute structured queries against graph data.