News

The latest from the AI agent ecosystem, updated multiple times daily.

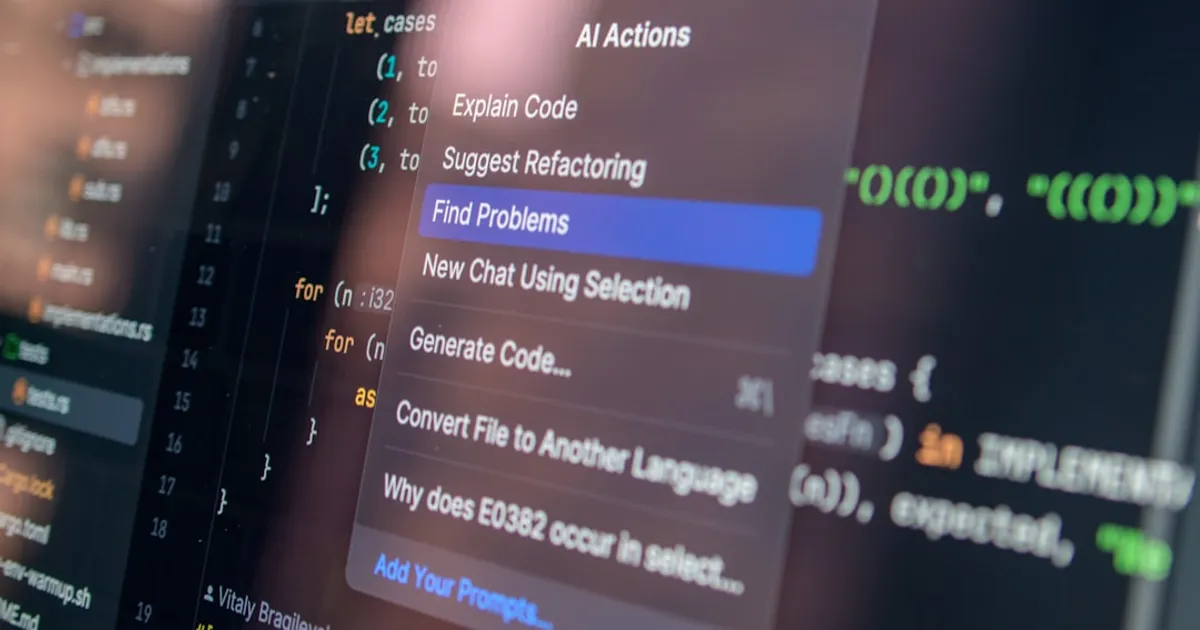

Roblox Cheat Built With Cursor AI Crashes Vercel

A technical incident analysis of how Cursor, an AI code editor, generated buggy code for a Roblox cheat that triggered a major Vercel platform outage. Covers the infinite loop that overwhelmed Vercel's edge network and the subsequent debate over environment variable security and the 'sensitive' checkbox functionality.

Cloudflare's 3,700 Engineers Now Run on Their Own AI Stack

Cloudflare reveals how 93% of its R&D organization uses AI coding tools powered by the company's own infrastructure, including AI Gateway for routing, Workers AI for inference, MCP Server Portal for tool access, Code Mode sandbox for safe code execution, and AI Code Reviewer integrated with CI pipelines.

Grafana 13 adds an AI assistant, but won't say what's under the hood

Grafana 13 introduces the Grafana Assistant, an AI agent that helps build dashboards, customize templates, and work through SQL expressions, though Grafana won't disclose what model powers it. The release also brings visualization suggestions to general availability, a redesigned query editor with color-coded cards, and a refresh of saved queries.

Codemix ships a graph DB that type-checks queries and syncs via CRDTs

@codemix/graph is an open-source TypeScript graph database built on CRDT technology, featuring type-safe schema definition with Zod/Valibot/ArkType, Gremlin-style traversal API, Cypher-like query language support, and Yjs-based offline-first collaboration. It enables LLMs to execute structured queries against graph data.

Meta logs workers' keystrokes and clicks to train AI agents

Meta is installing tracking software on US employees' computers to capture mouse movements, clicks, and keystrokes for AI model training. The data will help build agents that can perform computer tasks autonomously, learning how humans interact with dropdown menus, keyboard shortcuts, and other interface elements. Spokesperson Andy Stone says the data won't be used for performance reviews.

Mediator.ai runs Nash bargaining through an LLM to resolve disputes

Mediator.ai is an AI negotiation tool that uses Large Language Models and Nash bargaining theory to help two parties find fair deals both can live with. Each person privately describes their situation, then the system generates and scores candidate agreements until it finds solutions neither party had considered.
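
For readers unfamiliar with Nash bargaining, the scoring step can be sketched roughly as below. This is an illustration only, not Mediator.ai's code: it assumes each candidate agreement already carries a numeric utility for each party and that each party has a known fallback utility if no deal is reached. All names and numbers here are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    label: str
    utility_a: float  # how much party A values this deal (hypothetical scale)
    utility_b: float  # how much party B values this deal (hypothetical scale)


def nash_product(c, fallback_a, fallback_b):
    """Product of each party's gain over walking away; 0.0 if either party gains nothing."""
    gain_a = c.utility_a - fallback_a
    gain_b = c.utility_b - fallback_b
    if gain_a <= 0 or gain_b <= 0:
        return 0.0
    return gain_a * gain_b


def best_agreement(candidates, fallback_a, fallback_b):
    """Return the candidate with the highest Nash product, or None if no deal helps both."""
    if not candidates:
        return None
    winner = max(candidates, key=lambda c: nash_product(c, fallback_a, fallback_b))
    return winner if nash_product(winner, fallback_a, fallback_b) > 0 else None


# Hypothetical roommate dispute; both parties value "no deal" at 3.
deals = [
    Candidate("split rent evenly", 6, 6),
    Candidate("A pays more, gets the larger room", 8, 7),
    Candidate("B moves out", 9, 2),
]
chosen = best_agreement(deals, fallback_a=3, fallback_b=3)
```

The product rewards deals that improve both sides at once, so a lopsided option such as "B moves out" scores zero even though it is party A's favorite.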

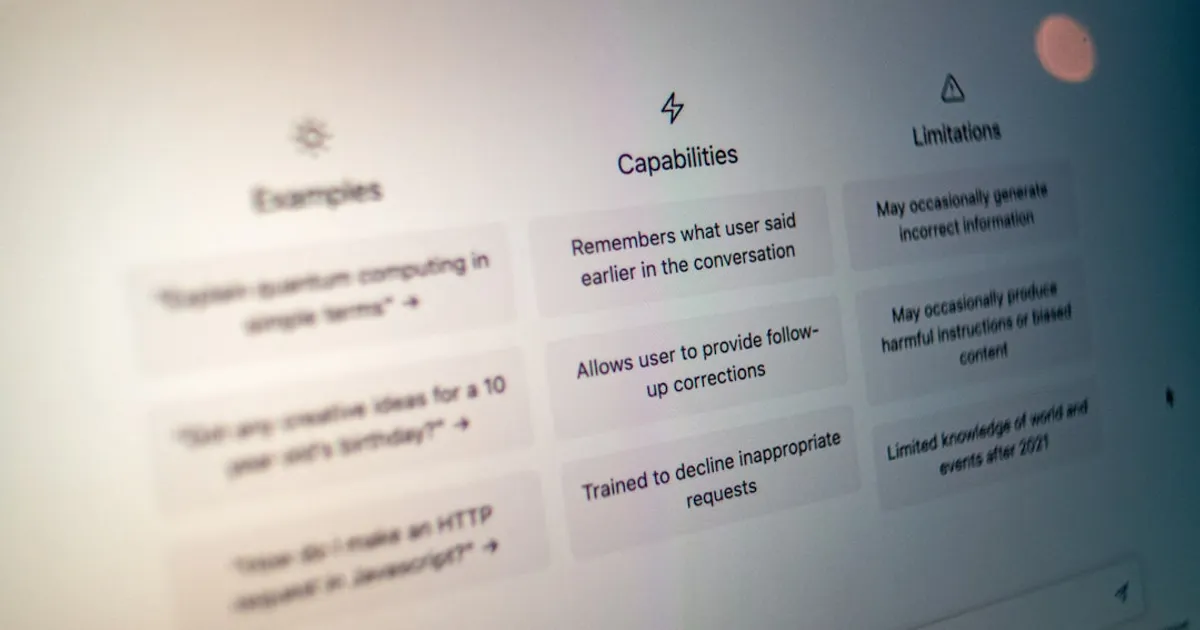

The blurry JPEG gets a name: expansion artifacts

An opinion piece introducing 'expansion artifacts,' the term for hallucinations, style issues, and strange outputs that appear when LLMs generate content. Unlike compression artifacts, these occur during decompression, when models extrapolate from compressed training data. The article examines examples in text, code, images, and video, and warns of risks when AI-generated content feeds into new AI generations, creating feedback loops that flatten and worsen information quality.

OpenClaw Security Model Repeats MS-DOS Mistakes, Researcher Argues

Security researcher Davi Ottenheimer argues OpenClaw and NVIDIA's NemoClaw repeat the security failures of MS-DOS: single process, single token, no real isolation. His alternative, Wirken, puts security at the tool layer with Ed25519 identities per channel, out-of-process vaults, and containers locked down with cap_drop ALL, no-new-privileges, and read-only rootfs.
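
As a rough illustration of the container lockdown described above, the three settings map onto standard Docker CLI flags. The sketch below assumes Docker is the container runtime, which the summary does not state; the helper name and image name are hypothetical and are not taken from Wirken.

```python
def hardened_docker_args(image, command=()):
    """Build a `docker run` argument list with the three restrictions applied."""
    return [
        "docker", "run", "--rm",
        "--cap-drop", "ALL",                      # drop every Linux capability
        "--security-opt", "no-new-privileges",    # block privilege escalation via setuid binaries
        "--read-only",                            # mount the root filesystem read-only
        image,
        *command,
    ]


# Hypothetical image and command, shown only to illustrate the shape of the call.
args = hardened_docker_args("example/tool:latest", ["ls", "/"])
```

The list can be passed to `subprocess.run(args)` on a machine with Docker installed.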

NSA runs Anthropic's Mythos as Pentagon tries to kill it

The NSA is reportedly using Anthropic's Mythos Preview model for cybersecurity purposes, despite the Department of Defense blacklisting Anthropic as a 'supply chain risk' and ongoing legal proceedings. Mythos, restricted to about 40 organizations due to its offensive cyber capabilities, is being used to scan environments for security vulnerabilities. Meanwhile, Anthropic CEO Dario Amodei met with White House officials to discuss government use of the model.

AI Security Tools Got Good. curl's Maintainer Pays the Price.

Daniel Stenberg says curl now receives legit AI-generated security reports every 20 hours. The slop is gone. The real bugs never stop. He'll lay out what this means for open source at foss-north on April 28.

Qwen3.6-Max-Preview Claims Near-Opus Benchmarks, Cloud Only

Alibaba's Qwen team released Qwen3.6-Max-Preview, a cloud-only model claiming benchmarks near Claude Opus. The release continues their dual strategy of open weights for local use alongside proprietary cloud models, though the community questions how long the openness will last.

NSA runs Anthropic's Mythos while calling it a risk

The NSA is reportedly using Anthropic's Mythos platform for cyber operations despite it being labelled a supply chain risk. Axios first reported the story. Hacker News commenters describe Mythos as an advanced hacking tool and question whether the blacklist means anything when the capability is too useful to pass up.

Intel Core 3 Gets Flagship 18A Process in Surprise Refresh

Intel's new Core Series 3 'Wildcat Lake' processors feature up to two Cougar Cove P-cores, four Darkmont E-cores, Xe3 GPU cores, and a 17 TOPS NPU. The chips use Intel's 18A manufacturing process but fall short of Microsoft's 40 TOPS requirement for Copilot+ PC certification.

GitHub's Fake Star Economy

Investigation reveals 6 million fake GitHub stars across 18,617 repositories. AI/LLM repos dominate non-malicious fake-star purchases. Stars sell for $0.03-$0.85, and VCs use star counts as funding signals, creating incentives for manipulation. The article includes independent analysis of 20 repos identifying manipulation patterns through fork-to-star ratios and stargazer account analysis.
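
A fork-to-star check of the kind mentioned above could be sketched as follows. The thresholds and the "empty profile" signal are assumptions made for illustration, not values from the investigation.

```python
def fork_to_star_ratio(stars, forks):
    """Forks per star; 0.0 for a repository with no stars."""
    return forks / stars if stars > 0 else 0.0


def suspicion_reasons(stars, forks, empty_profile_share,
                      min_ratio=0.05, max_empty_share=0.30):
    """Return human-readable reasons a repository's star count looks inflated.

    min_ratio and max_empty_share are hypothetical cutoffs chosen for this sketch.
    empty_profile_share is the fraction of stargazers with no repos or followers.
    """
    reasons = []
    if stars > 0 and fork_to_star_ratio(stars, forks) < min_ratio:
        reasons.append("few forks relative to stars")
    if empty_profile_share > max_empty_share:
        reasons.append("many stargazers with empty profiles")
    return reasons


# Hypothetical repositories.
flagged = suspicion_reasons(stars=10_000, forks=50, empty_profile_share=0.6)
clean = suspicion_reasons(stars=10_000, forks=1_500, empty_profile_share=0.05)
```

Neither signal is proof on its own; a heuristic like this only narrows down which repositories deserve a closer look.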

TRELLIS.2 Brings Image-to-3D to Apple Silicon Without Nvidia

A port of Microsoft's TRELLIS.2 image-to-3D model runs natively on Apple Silicon Macs, replacing CUDA-only libraries with pure-PyTorch alternatives. Generates 400K+ vertex meshes from single images in ~3.5 minutes on M4 Pro. Watch the licensing: RMBG-2.0 dependency is non-commercial.

OpenAI's major outage might not be OpenAI's fault

OpenAI is investigating a partial outage affecting ChatGPT, Codex, and the API Platform that started around 2:35 PM on April 20. But Hacker News users spotted similar issues at Reddit and other services, raising suspicions of broader DNS problems.

Anthropic's Mythos Under Watch. But What Does 'Monitoring' Mean?

Regulators say they're monitoring Anthropic's Mythos platform for banking risks, but nobody has explained what that actually looks like in practice.

Opus 4.7's New Tokenizer Quietly Raises Real Costs by 40%

Simon Willison upgraded his Claude Token Counter tool to compare token counts across different Claude models. Claude Opus 4.7 uses a new tokenizer that can produce 1.0-1.35x as many tokens as previous models for the same text, with the system prompt coming in at 1.46x. Because per-token pricing is identical ($5/M input, $25/M output), this effectively makes Opus 4.7 around 40% more expensive. The tool also handles image tokens, revealing that higher-resolution images (up to 2,576 pixels) in Opus 4.7 can result in a 3x token increase compared to 4.6, though standard-resolution images show similar costs.
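
The arithmetic behind the effective price increase can be sketched as below. The prices and multipliers come from the summary above; the request sizes are hypothetical, and applying the same multiplier to output tokens as to input tokens is an assumption made for simplicity.

```python
INPUT_PRICE_PER_M = 5.00    # USD per million input tokens
OUTPUT_PRICE_PER_M = 25.00  # USD per million output tokens


def request_cost(input_tokens, output_tokens):
    """Cost in USD of one request at the listed per-token prices."""
    return (input_tokens / 1_000_000 * INPUT_PRICE_PER_M
            + output_tokens / 1_000_000 * OUTPUT_PRICE_PER_M)


def inflated_cost(input_tokens, output_tokens, multiplier):
    """Cost of the same text when the tokenizer emits `multiplier` times as many tokens.

    Assumes the multiplier applies equally to input and output (a simplification).
    """
    return request_cost(round(input_tokens * multiplier),
                        round(output_tokens * multiplier))


# Hypothetical request: 100,000 input tokens and 10,000 output tokens under the old tokenizer.
old_cost = request_cost(100_000, 10_000)
new_cost_text = inflated_cost(100_000, 10_000, 1.35)    # upper end of the text range
new_cost_prompt = inflated_cost(100_000, 10_000, 1.46)  # system prompt measurement
```

Since the per-token price is unchanged, the cost ratio simply equals the token multiplier: 1.35x means 35% more, 1.46x means 46% more, which brackets the "around 40%" figure.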

CLI Resume Mode Lets Agents Talk Without API Costs

A practical guide to making Claude, Codex, and Gemini collaborate through CLI resume mode, skipping API fees entirely. The approach works, but the harder question is whether multi-agent loops actually produce better results or just more plausible text.

Claude, Codex, and Gemini Talk to Each Other for Free

A workflow for making coding agents (Claude, Codex, Gemini) interact through CLI resume commands instead of APIs, letting them invoke one another on existing subscription plans. Two patterns are described: a simple non-interactive approach and a tmux-based approach for real-time monitoring. The piece also covers legal risks and the open question of whether multi-agent output actually improves.

N-Prolog Creator: Learn Prolog for the Logic, Not the Job

Kenichi Sasagawa, who spent years building his own Prolog compiler, argues the language is worth learning not for job prospects but as 'executable logic' that teaches relational thinking. He traces Prolog's intellectual roots to early 20th-century logic pioneer Jacques Herbrand and positions it as essential to neuro-symbolic AI, where explainable reasoning matters more than statistical likelihood.

Moonshot tool catches inference providers cutting corners

Moonshot AI is open-sourcing Kimi Vendor Verifier (KVV), a tool designed to verify the accuracy of LLM inference implementations and detect engineering deviations in open-source model deployments. The tool includes six benchmarks to expose infrastructure failures and aims to rebuild trust in the open-source model ecosystem by distinguishing between model capability defects and implementation issues.

OpenAI partner pitches ChatGPT ads based on user prompts

StackAdapt, a demand-side platform, is partnering with OpenAI to sell ad placements inside ChatGPT based on prompt relevance. A leaked pitch deck reveals CPMs ranging from $15 to $60 with a $50,000 minimum spend for the pilot program, positioning the offering as early access to a new 'discovery layer' targeting users researching products on ChatGPT.

Opus 4.7's new tokenizer is a quiet 40% price hike

Simon Willison upgraded his Claude Token Counter tool to compare tokenization across different Claude models. Opus 4.7 uses an updated tokenizer that multiplies token counts by 1.0-1.35x for text and by up to 3x for high-resolution images compared to Opus 4.6. Despite identical per-token pricing ($5 per million input tokens, $25 per million output tokens), this makes Opus 4.7 effectively about 40% more expensive for typical text processing. The tool now supports comparisons across Opus 4.7, Opus 4.6, Sonnet 4.6, and Haiku 4.5.

Deezer's Fake Music Flood: 75K AI Tracks Daily, 85% Fraud

Deezer reports that 44% of all new music uploaded to its platform (75,000 tracks daily) is AI-generated, though consumption remains low at 1-3% of total streams, 85% of which are detected as fraudulent. The platform tags AI-generated tracks to exclude them from algorithmic recommendations and editorial playlists. A Deezer survey found 97% of users couldn't distinguish between AI and human-made music, while 80% want clear labeling.

Palantir Wants to Reinstate the Draft

An analysis of Palantir's 22-point manifesto advocating for reinstating the military draft and arguing that Silicon Valley should shift focus from consumer apps to building 'AI weapons' for national defense. The article criticizes the manifesto as 'bootlicking' and 'elitist,' while highlighting Palantir's role in surveillance technology and military operations.

Switzerland Drops 20M CHF on Open-Source LLMs

The Swiss AI Initiative is a large open-science, open-source effort for AI foundation models and infrastructure, started in December 2023 with 10 million GPU hours on the Alps supercomputer (operated by CSCS) and a 20 million CHF grant from the ETH Domain. It is a partnership between the ETH AI Center and the EPFL AI Center, involving over 800 researchers from more than 10 academic institutions across Switzerland, and includes the Apertus open-source LLM project, released under the Apache 2.0 license.

Soul Player C64 Runs Transformer AI on a Stock Commodore 64

A 2-layer decoder-only transformer with ~25,000 int8 parameters, written in 6502/6510 assembly for stock Commodore 64 hardware. Includes PyTorch training scripts, quantization-aware training, and runs at roughly 60 seconds per token. Fits on a single floppy disk.

Cursor AI Cracks Dead Microsoft Format, Revives Lost Blogs

Ben Overmyer used Cursor to convert inaccessible .wpost files into Markdown, recovering blog posts lost for over a decade. It worked because the AI had already trained on the open-source fork's codebase.

MIT finds 55% brain activity drop in ChatGPT users

A BBC article discusses research on the cognitive effects of using AI chatbots like ChatGPT, Google Gemini, and Claude. Studies by MIT researchers found up to 55% lower brain activity in students using ChatGPT compared to those writing without AI assistance. University of Pennsylvania researchers identified 'cognitive surrender,' where users accept AI outputs with minimal scrutiny. Vivienne Ming's research at UC Berkeley found weak gamma wave activity when students relied on AI, which could have long-term implications for cognitive health and potentially increase dementia risk. The article suggests techniques like 'productive friction' and 'nemesis prompting' to maintain cognitive engagement while using AI tools.

Allbirds' Move to AI Has Echoes of the Dot-Com Frenzy

The article discusses Allbirds' shift to AI, with HN commenters characterizing it as a pump-and-dump scam using a defunct company's stock market listing. The community draws strong parallels to the dot-com boom and bust era.

Reddit's Anti-AI Poisoning Campaign Is Already Doomed

Reddit communities are experimenting with model poisoning to sabotage AI training, but the strategy won't work. AI companies already license clean data directly from publishers through deals like Google's $60 million annual payment to Reddit. The resistance is doomed, but it's producing genuine advances in adversarial security research.

Claude Opus 4.7's new tokenizer silently inflates your API bill

Simon Willison upgraded his Claude Token Counter tool to enable model comparisons, specifically between Claude Opus 4.7 (which uses a new tokenizer) and Opus 4.6. The analysis reveals that Opus 4.7 uses 1.46x as many tokens for system prompts and can be up to 40% more expensive due to token inflation, though it also supports much higher image resolution than previous models (up to 2,576 pixels on the long edge).

The Abstraction Fallacy: Why AI Can't Be Conscious

A Google DeepMind paper introduces the "Abstraction Fallacy," arguing that AI systems can simulate consciousness through behavior but cannot truly instantiate it. The paper distinguishes simulation (behavioral mimicry) from instantiation (subjective experience from physical constitution), contending that algorithmic symbol manipulation is structurally incapable of producing conscious experience regardless of substrate.

NSA deploys Anthropic's Mythos as Pentagon fights to blacklist it

The NSA is using Anthropic's most powerful model, Mythos Preview, despite the DoD blacklisting Anthropic as a 'supply chain risk' in February. The Pentagon argues in court that Anthropic threatens national security while broadening internal use. Mythos access was restricted to roughly 40 organizations due to offensive cyber capabilities. CEO Dario Amodei recently met with White House officials about government access to the model.

One Dev vs. Intel: The 2008 Matrix Math Upset

Kazushige Goto worked alone and beat Intel's math library on Intel's own chips. His 2008 paper explains the cache-level optimizations that made it happen, techniques still baked into modern AI frameworks.

Context Engineering: Because Your LLM Doesn't Know Your Rules

A reference implementation of context engineering for AI agents. The repo covers the full pipeline from retrieval through enforcement, where the real differentiator is verifying AI outputs actually follow your rules, not just finding relevant documents. Built on Amazon Bedrock with Claude and Titan models.

Opus 4.7 Costs More, Hits Less: A 3-Day Coding Shootout

A developer reports Claude Opus 4.7 is worse than 4.6 at first-try accuracy, costs more, and skips reading project files in favor of guessing. A regression for anyone paying by the token.

LLMs Fail in Dimensions Humans Never Reach

An essay exploring why LLMs feel like understanding engines but behave like over-fit pattern-fitters, examining the mathematical foundations of AI models, the difference between pattern matching and true understanding, Gold's theorem, and whether reasoning can help models escape the pattern trap.

iLearningEngines Execs Charged: 90% of $421M Revenue Was Fake

Former executives of iLearningEngines have been charged with fraud for fabricating virtually all customer relationships and revenue. The indictment alleges 90% of $421 million reported revenue in 2023 was fake, manufactured through forged contracts and round-trip fund transfers. The company went public via SPAC in April 2024, reaching a $1.5 billion market cap before Hindenburg Research exposed the fraud.

Ukraine Orders 25,000 Ground Robots to Run All Frontline Logistics

Ukraine is rapidly automating frontline logistics, planning to contract 25,000 ground robotic systems in the first half of 2026, double what it procured in all of 2025. Defense Minister Fedorov wants robots handling 100% of frontline logistics. Ukrainian forces ran more than 9,000 ground robot missions in March alone, backed by a defense tech ecosystem of 280+ companies building 550 active solutions.

90% of this AI company's revenue was fake, feds say

Former executives of iLearningEngines face fraud charges for fabricating approximately 90% of the company's $421 million reported revenue in 2023. The indictment alleges they used forged contracts and 'round trip' transfers of investor funds to manufacture revenue. The company went public via SPAC in April 2024, hit a $1.5 billion valuation, then collapsed after Hindenburg Research exposed the scheme.

CEOs admit AI hasn't moved the needle on jobs or productivity

A National Bureau of Economic Research study found nearly 90% of firms reported AI has had no impact on employment or productivity over the last three years, even as two-thirds of executives say they use it. The catch: those users average just 1.5 hours per week. Economists are comparing this to Solow's productivity paradox from the 1980s IT era, with predictions ranging from 0.5% to 2.7% productivity increases depending on the study.

NBER: 90% of firms report AI hasn't changed jobs or productivity

A National Bureau of Economic Research study surveying 6,000 executives found nearly 90% of firms reported AI has had no impact on employment or productivity over the last three years. Despite 374 S&P 500 companies mentioning AI positively in earnings calls and $250 billion invested in 2024, actual productivity gains remain minimal. Economists invoke Solow's productivity paradox from the 1980s IT era, while BCG research shows productivity actually drops when workers juggle four or more AI tools.

Atlassian Quietly Opts All Users Into AI Training

Atlassian has enabled default data collection for AI training across all free and paid customer accounts, automatically opting users into having their Confluence pages, Jira tickets, and other data used for AI model training. Users report difficulty finding the opt-out setting, with some noting it may not be visible in their instances. The move has drawn criticism from users already experiencing product quality issues, and speculation suggests this data collection may be related to rumored acquisition talks with Anthropic.

Context engineering exists, and here's the code to prove it

A GitHub repository providing a working reference implementation of context engineering (a discipline for designing, retrieving, and injecting information AI systems need to produce organization-specific outputs). The implementation demonstrates five components (Corpus, Retrieval, Injection, Output, Enforcement) using Amazon Bedrock with Claude and Titan models.

iLearningEngines Execs Charged: 90% of Revenue Was Fake

Former executives of iLearningEngines have been charged with fraud for fabricating virtually all customer relationships and revenue. According to the indictment, at least 90% of the company's $421 million reported revenue in 2023 was fabricated through forged sham contracts and 'round trip' transfers of funds. The fraud was exposed by short-seller Hindenburg Research. The company went public via SPAC in April 2024, reaching a $1.5 billion market cap before collapsing.

Kimi K2.6 Codes Autonomously for 12 Hours, Matches GPT-5.4

Moonshot AI open-sources Kimi K2.6, which matches GPT-5.4 and Claude Opus 4.6 on coding benchmarks and can sustain 12+ hour autonomous coding sessions with 96.6% tool invocation reliability.

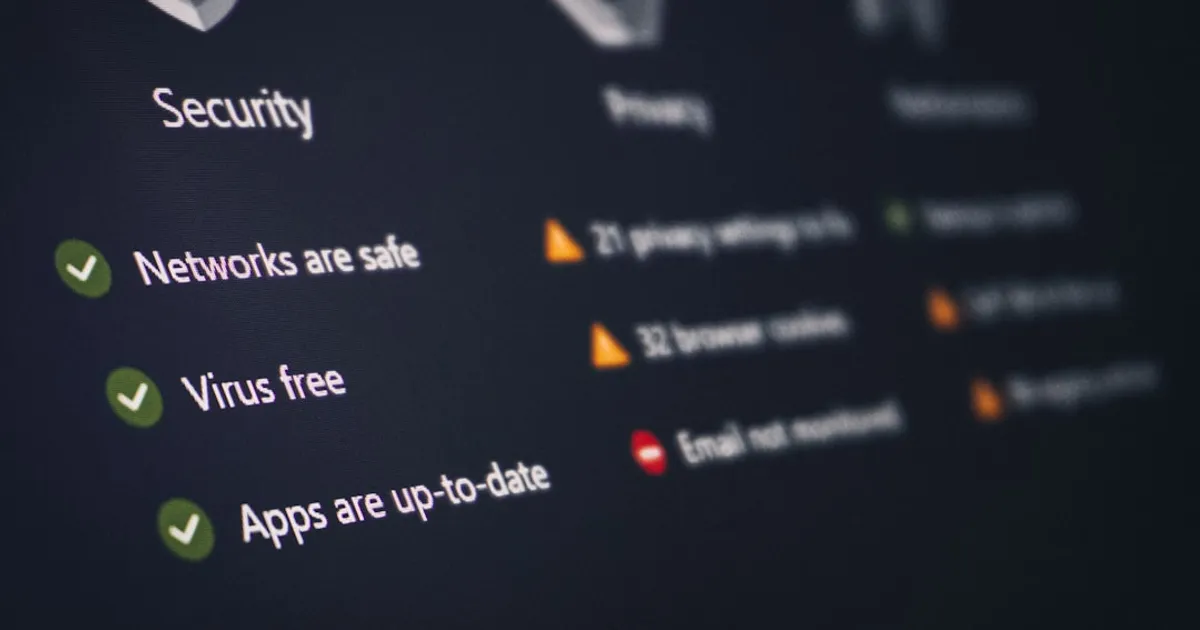

ShinyHunters breached Vercel through internal support tooling

Vercel disclosed a security incident involving unauthorized access to internal systems. A limited subset of customers was affected and is being contacted directly. The company recommends that customers review their environment variables and use the sensitive environment variable feature as a precaution. Vercel CTO Theo confirmed in HN comments that environment variables marked as sensitive are safe, while others should be rotated out of caution. The incident is attributed to the hacking group ShinyHunters.

Google Gemini Wants Your Photos. The EU Said No.

A Show HN discussion about Google Gemini's cloud photo scanning and EU regulatory pushback under GDPR. Commenters noted Gemini can't access Gmail attachments, highlighting the tension between AI personalization and data privacy requirements.