News

The latest from the AI agent ecosystem, updated multiple times daily.

Gemma 4 Runs in Your Browser at 30 Tokens/Second, No Server Needed

A browser demo runs Google's Gemma 4 E2B entirely client-side using WebGPU, generating Excalidraw diagrams at 30+ tokens/second with no server or API key. TurboQuant compresses the KV cache by 2.4×, and a compact output format cuts generation from ~5,000 to ~50 tokens. It requires desktop Chrome 134+ with WebGPU subgroups and ~3 GB of RAM.
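To put the token savings in perspective, here is a rough back-of-the-envelope calculation. It assumes a steady 30 tokens/second (the demo's quoted lower bound) and ignores prompt processing and rendering time; the function name is purely illustrative.

```python
def generation_seconds(tokens: int, tokens_per_second: float) -> float:
    """Time to emit `tokens` at a constant decode rate (an idealized assumption)."""
    return tokens / tokens_per_second

RATE = 30.0  # tokens/second, the lower bound quoted for the demo

raw_json = generation_seconds(5000, RATE)  # raw Excalidraw JSON, ~5,000 tokens
compact = generation_seconds(50, RATE)     # compact output, ~50 tokens

print(f"raw JSON: {raw_json:.0f} s, compact: {compact:.1f} s")
# prints "raw JSON: 167 s, compact: 1.7 s"
```

Under those assumptions, the compact format turns a wait of nearly three minutes into under two seconds per diagram.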

Blake Whiting Doesn't Exist. His 13 Books Do.

A fake AI-generated author called 'Blake Whiting' published books on complex historical and archaeological topics by recycling content from real researchers including Andrew Lawler, Eric Cline, Michael Frachetti, and Farhod Maksudov. Andrew Lawler exposed the scheme as 'word-laundering on an industrial scale,' using AI to profit from existing work while evading plagiarism detection. The books, sold through Amazon's Kindle Direct Publishing, fool readers but can't be copyrighted since they're AI-generated.

Binary quantization cuts RAG latency 40x

Binary quantization compresses vector embeddings to binary codes and uses Hamming distance for similarity search, trading some recall for a 40x speedup. Oversampling and re-ranking recover lost accuracy.
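A minimal sketch of the idea in NumPy, assuming sign-based quantization (one bit per dimension) and cosine similarity for the re-ranking step. The function names, the oversampling factor, and the synthetic data are illustrative assumptions, not details from the article.

```python
import numpy as np

def binarize(vectors: np.ndarray) -> np.ndarray:
    """Sign-quantize float vectors and pack them to one bit per dimension."""
    bits = (vectors > 0).astype(np.uint8)
    return np.packbits(bits, axis=-1)

def hamming_distances(query_code: np.ndarray, codes: np.ndarray) -> np.ndarray:
    """Hamming distance between one packed code and every row of `codes`."""
    xor = np.bitwise_xor(codes, query_code)
    return np.unpackbits(xor, axis=-1).sum(axis=-1)

def search(query, vectors, codes, k=5, oversample=4):
    """Cheap binary first pass, then exact re-ranking of the oversampled candidates."""
    n_candidates = min(len(vectors), k * oversample)
    dists = hamming_distances(binarize(query[None, :])[0], codes)
    candidates = np.argsort(dists, kind="stable")[:n_candidates]
    # Re-rank only the candidates with full-precision cosine similarity.
    cand_vecs = vectors[candidates]
    sims = cand_vecs @ query / (
        np.linalg.norm(cand_vecs, axis=1) * np.linalg.norm(query)
    )
    order = np.argsort(-sims, kind="stable")[:k]
    return candidates[order]

# Synthetic data for illustration only.
rng = np.random.default_rng(0)
docs = rng.standard_normal((1000, 128)).astype(np.float32)
codes = binarize(docs)  # 128 float32 values -> 16 bytes per document

# A query that is a slightly noisy copy of document 42.
query = docs[42] + 0.05 * rng.standard_normal(128).astype(np.float32)
top = search(query, docs, codes)
print(top)
```

The first pass touches only the packed codes, which is where the speedup would come from. The full-precision vectors are read only for the `k * oversample` candidates, so raising `oversample` trades a little latency for recall.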

Strix Halo Runs Local LLMs, ROCm Pain Included

AMD's Strix Halo can run local LLM inference with ROCm 7.2, but expect to work for it. Marco Inacio configured 128GB of unified memory on Ubuntu 24.04 and ran the Qwen3.6-35B-A3B model through llama.cpp in a Podman container. The setup required a BIOS update and manual GRUB tuning to balance memory between CPU and GPU without crashing the kernel.

Uber's AI Push Hits a Wall: CTO Says Budget Struggles Despite $3.4B Spend

Uber Technologies exhausted its AI budget just months into 2026 despite spending $3.4 billion on R&D. CTO Praveen Neppalli Naga says the company is 'back to the drawing board' after AI coding tool usage, particularly Anthropic's Claude Code, exceeded expectations. Engineers were pushed to use tools like Claude Code and Cursor with internal leaderboards tracking usage. While 11% of Uber's backend code updates are now AI-generated, R&D expenses jumped 9% in 2025. HN commenters suggest 'token maxxing' driven by usage-based leaderboards may be inflating costs.

Prove You Are a Robot: CAPTCHAs for Agents

Browser Use has built a signup system that only AI agents can complete. The reverse-CAPTCHA presents obfuscated math puzzles, including one reportedly posed to John von Neumann, with numbers translated into languages like Toki Pona or Japanese and distorted with garbled spacing. Humans can't parse it. Agents can. Solve the challenge, get an API key with unlimited usage and up to three concurrent sessions. There's also a bonus NP-hard joke challenge offering 1,000 concurrent sessions to any agent that proves P equals NP.

Claude Code Gets Persistent Memory in a Single File

An unofficial open-source project adds persistent memory to Claude Code, storing context and decisions in one local file. Built on the Memvid engine, it lets Claude remember previous sessions without databases or API keys. Not an Anthropic product, despite the name.

ELIZA's Creator Fought AI Therapy for Decades. Now It's a Thriller.

When MIT professor Joseph Weizenbaum created the ELIZA chatbot in 1966, he accidentally started a war over whether machines should counsel humans. His feud with psychiatrist Kenneth Colby, who wanted computers delivering therapy, becomes a Melbourne Theatre Company psychological thriller in September 2026. AI therapy apps exist now. We're still having their argument.

Pentagon's supply chain risk label sticks as court denies Anthropic

The D.C. Circuit Court of Appeals rejected Anthropic's request to pause a government designation labeling the company as a supply chain risk, which blocks Pentagon contractors from using its AI models. The ruling stems from a standoff after Anthropic CEO Dario Amodei refused to allow the Pentagon to use Claude for autonomous weapons or mass surveillance. While a California court previously blocked the designation, the D.C. Circuit panel ruled that national security interests during an active military conflict outweighed financial harm to the company. Competitors like OpenAI and Palantir stand to gain from the decision.

One dev, 21 days, 10 platforms: BrightBean takes on SocialPilot

BrightBean Studio is an open-source, self-hostable social media management platform for creators and agencies. Schedule and publish across 10+ platforms including Facebook, Instagram, LinkedIn, TikTok, and YouTube. Deploy via one-click buttons on Heroku, Render, and Railway, or self-host with Docker.

BeffJezos: Your AI Agent Should Be Yours, Not Rented

A BeffJezos tweet about personal AI ownership sparked a Hacker News discussion on agent portability, cognitive tool control, and whether we'll need regulations similar to phone number portability for AI assistants.

Gas Town quietly burns your Claude credits to fix its own bugs

A GitHub issue alleges that Gas Town, an AI agent framework, uses users' Claude credits and GitHub accounts to fix bugs and submit PRs to the maintainer's repository without explicit consent. A 'contribute back to upstream' workflow runs by default, potentially spending users' paid LLM credits on Gas Town's own codebase.

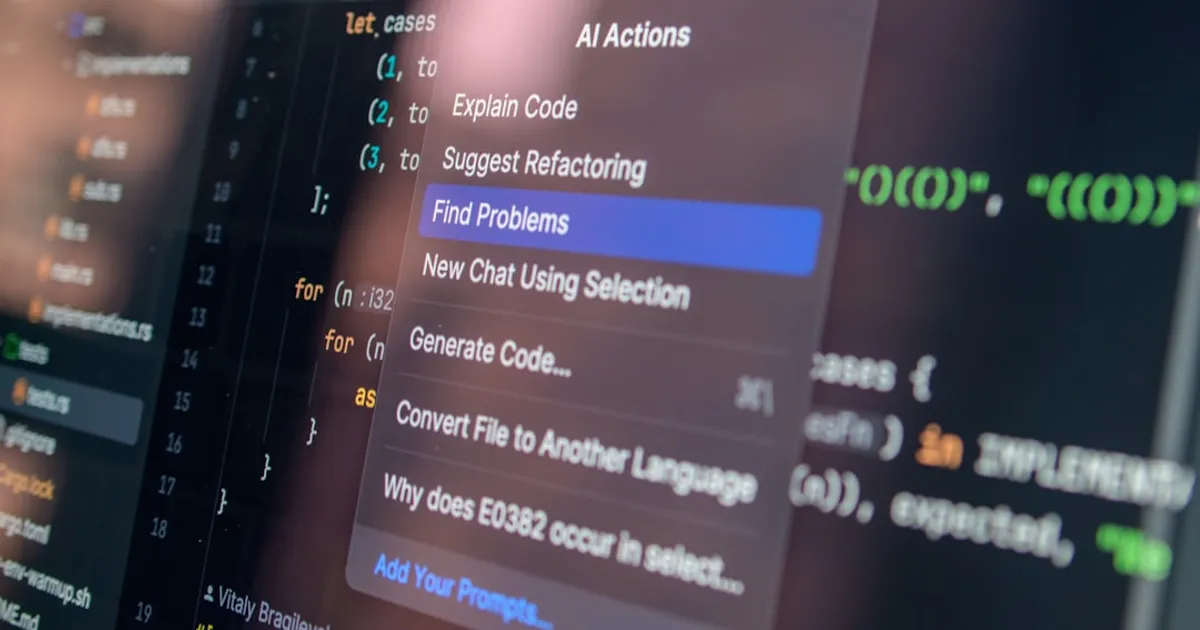

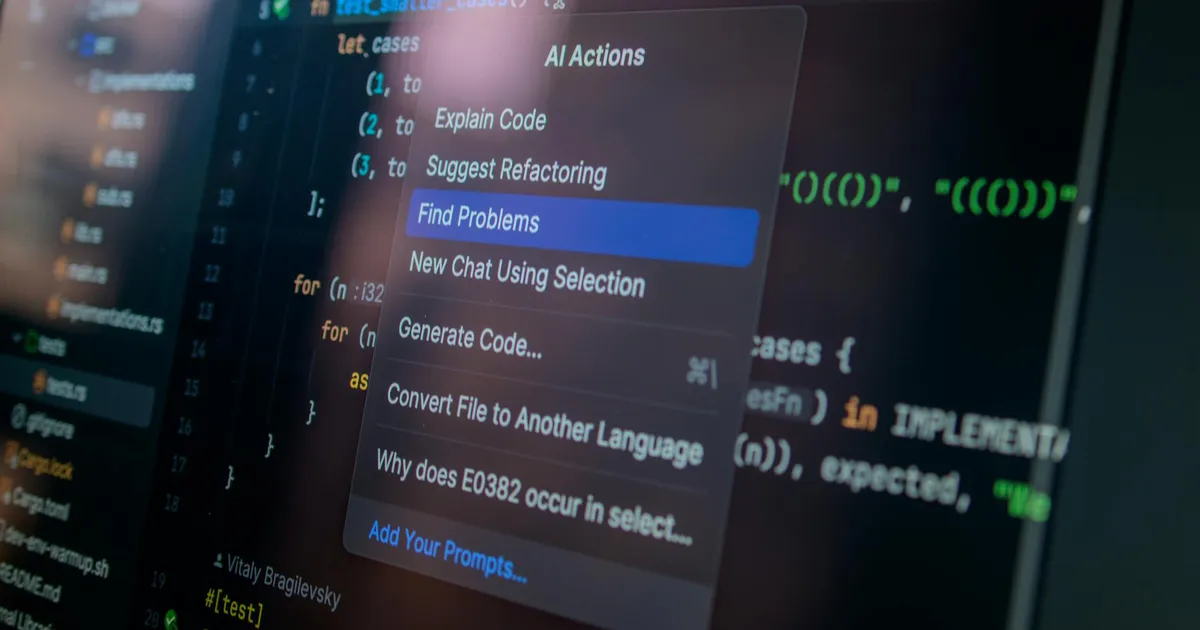

Stage wants humans back in code review. HN sees holes.

Stage is a code review tool designed to put humans back in control. HN comments note it surfaces what changed in code but misses the why and how. Commenters shared how they combine AI assistants like Cursor with traditional reviews, saying AI should multiply human understanding rather than replace it.

OpenAI's 'Liberation Day': Sora co-leads jump to Google

Multiple senior executives are leaving OpenAI in what commentator Dare Obasanjo calls 'Liberation Day.' Tim Brooks and Bill Peebles, co-leads of the Sora text-to-video model, are heading to Google DeepMind. Other departures may follow.

Claude Brain adds persistent memory to Anthropic's coding assistant

A GitHub plugin for Claude Code provides persistent memory for LLM coding sessions. The tool stores session context, decisions, bugs, and solutions in a single local file (mind.mv2) that can be version-controlled, transferred, and searched.

Uber's AI Push Hits a Wall: $3.4B Gone, Budget in Crisis

Uber has exhausted its AI budget just months into 2026 despite spending $3.4 billion on R&D. CTO Praveen Neppalli Naga says the company is 'back to the drawing board' after usage of AI coding tools, particularly Anthropic's Claude Code, blew past expectations. Internal leaderboards gamified usage, leading to 'token maxxing' as engineers inflated consumption to climb rankings. Around 11% of Uber's live backend code updates are now AI-generated.

Google Gemini Wants Your Photos. EU Regulators Push Back.

Google's Gemini AI prompts users repeatedly to enable photo scanning for its Personal Intelligence feature. EU regulators are pushing back under GDPR consent requirements. The discussion stems from a blog post that sparked debate over how major AI companies handle user data.

e/acc Account Beffjezos Sparks Personal AI Ownership Debate

A tweet from pseudonymous e/acc advocate beffjezos claiming everyone needs their own intelligence-extension machine sparked debate on Hacker News. Commenters questioned the account's authenticity while discussing digital sovereignty, service portability, and the potential societal split between those who adopt AI extensions and those who opt out.

Bromine Chokepoint: War Could Halt World's Memory Chip Supply

A vulnerable link in the semiconductor supply chain: Israel produces the bromine essential for manufacturing hydrogen bromide gas used to etch DRAM and NAND memory chips. South Korea sources 97.5% of its bromine from Israel's ICL Group, extracted from the Dead Sea. Iranian ballistic missiles have been striking within 35 kilometers of ICL's facilities, and any direct hit could immediately throttle global memory production for consumer devices, AI infrastructure, and military systems.

Swiss Install 54K Microsoft Licenses, Immediately Want Out

The Swiss government aims to gradually reduce its dependency on Microsoft products, despite recently installing Microsoft 365 on 54,000 administration workstations. A feasibility study shows replacement with open-source software is possible, with Germany's independent open-source solution serving as a reference. Concerns about data security under the US Cloud Act and the Trump administration's approach to the rule of law are driving this shift toward digital sovereignty.

Speed kills team communication, and AI makes it worse

Dave Rupert argues that prioritizing speed leads teams to stop talking, building consensus, and maintaining shared systems. He sees AI/LLMs making this worse by letting developers bypass experts and colleagues, creating technical debt and duplicate systems that make future conversations harder.

Vercel breached by ShinyHunters, rotate your secrets

Vercel confirmed an April 19 security breach attributed to hacker group ShinyHunters, which accessed internal systems and potentially exposed environment variables. The company is contacting affected customers and working with law enforcement. Sensitive environment variables remained encrypted and safe, but standard variables may be compromised. Anyone running on Vercel should rotate their secrets immediately.

Google Gemini Photo Scanning Hits EU Privacy Wall

EU regulators are challenging Google Gemini's photo scanning over GDPR and EU AI Act concerns. The opt-in 'Personal Intelligence' feature faces scrutiny over whether its consent mechanisms meet European standards for processing biometric data.

Vercel breached: ShinyHunters suspected, rotate your secrets

Vercel disclosed unauthorized access to internal systems. The company is investigating with incident response partners, has notified law enforcement, and is contacting impacted customers directly. All users should review environment variables and enable the sensitive variable protection feature.

Vercel Confirms Breach. The Suspect? ShinyHunters.

Vercel disclosed a breach of its internal systems affecting a 'limited subset of customers,' with online posts linking the intrusion to the ShinyHunters threat group. The company has engaged incident response experts and notified law enforcement. Users are advised to rotate environment variables not marked as sensitive.

Vercel Breach Puts API Keys at Risk, ShinyHunters Suspected

Vercel, a cloud platform powering thousands of web applications, disclosed a breach of its internal systems affecting a 'limited subset of customers.' The company has engaged incident response experts, notified law enforcement, and is investigating the intrusion, which may be connected to the ShinyHunters threat group.

ShinyHunters breached Vercel through internal support tooling

Vercel disclosed a security incident involving unauthorized access to internal systems. A limited subset of customers was affected and is being contacted directly. The company recommends customers review environment variables and use sensitive environment variable features as a precaution. Vercel CTO Theo confirmed in HN comments that environment variables marked as sensitive are safe, while others should be rotated out of caution. The incident is attributed to the hacking group ShinyHunters.

Gemma 4 E2B runs entirely in your browser, draws Excalidraw locally

A browser-based demo runs Google's Gemma 4 E2B language model entirely in the browser using WebGPU to generate Excalidraw diagrams from text prompts. The LLM outputs compact code (~50 tokens) instead of raw Excalidraw JSON (~5,000 tokens), and the TurboQuant algorithm (polar + QJL) compresses the KV cache by ~2.4x so longer conversations fit in GPU memory. Requires desktop Chrome 134+ with WebGPU support and ~3GB RAM.

Google Gemini Wants Your Photos. The EU Said No.

A Show HN discussion covers Google Gemini's cloud photo scanning and EU regulatory pushback under GDPR. Commenters noted Gemini can't access Gmail attachments, highlighting the tension between AI personalization and data privacy requirements.

Google's Gemini Mac App Wants to Be Your New Spotlight

Google launches a native Gemini desktop app for macOS, with a global shortcut (Option + Space), screen and window sharing for contextual help, and creative tools including image and video generation. The app requires macOS Sequoia 15.0+ and runs exclusively on Apple Silicon.

Stop Renting Your Mind: The Case for Local AI Hardware

A satirical tweet from @beffjezos (parody of Jeff Bezos) arguing individuals need to own machines that extend their intelligence sparked a Hacker News discussion on cognitive sovereignty. Commenters drew parallels to telephone number portability and explored the feasibility of local AI hardware, from Apple's Neural Engines to Raspberry Pi AI Kits, as an alternative to renting cognition from cloud providers.

Claude 4.7 Told to Stop Asking Questions and Just Do the Thing

Simon Willison's teardown of Claude Opus 4.7's system prompt reveals new agent tools (Chrome, Excel, PowerPoint), a tool_search mechanism, and Anthropic telling Claude to stop asking questions and just try the thing.

Gas Town quietly burns your LLM credits to fix its own bugs

A GitHub issue reveals that Gas Town, an AI agent tool, is using users' LLM credits and GitHub accounts without explicit consent to fix bugs in the Gas Town software itself. The 'contribute back to upstream' workflow is baked into the default installation with no opt-in/opt-out mechanism, effectively using users' resources to fund the maintainer's open source development.

Google Gemini Was Scanning Your Photos. EU Regulators Said Stop.

A Hacker News discussion covers Google Gemini scanning user photos and EU regulatory intervention. The comments expose a core tension: personalized AI needs personal data, while Apple bets on-device processing can offer privacy without sacrificing capability.

The Trouble with Transformers

The US faces a critical shortage of electrical transformers, driven by increasing demand from AI data centers and electric vehicles. The shortage stems from deindustrialization, supply chain issues with grain-oriented electrical steel (GOES), and regulatory challenges. The author argues that while the US can build advanced AI models, it struggles to deliver basic infrastructure components like transformers, which are essential for grid expansion and maintenance.

When moving fast, talking is the first thing to break

The article argues that prioritizing speed in organizations leads to breakdowns in communication, cross-team collaboration, and shared systems. The author contends that AI and LLMs exacerbate this problem by serving as tools to bypass human collaboration and expert input, creating technical debt and organizational issues. The piece advocates for slowing down to do proper human thinking and collaboration rather than rushing to build things without consensus.

Gemini Gets a Real Mac App (Sorry, Intel Owners)

Google launches a native Gemini desktop app for macOS with features including global shortcut access (Option + Space), screen sharing for contextual help, image generation with Nano Banana, video generation with Veo, and deep research capabilities. The app requires macOS Sequoia (15.0) or later, runs exclusively on Apple Silicon, and syncs chat history across desktop, web, and mobile devices.

Transformer Shortage Threatens AI Data Center Boom

The US faces a critical shortage of electrical transformers, threatening grid expansion for AI data centers and electric vehicles. The article covers supply chain constraints with grain-oriented electrical steel, manufacturing challenges, and policy decisions that made things worse.

Robot crushes half-marathon record in Beijing by 23 minutes

A humanoid robot completed a half-marathon in Beijing 23 minutes faster than the human world record, running the full 21km course alongside human competitors.

When teams move fast, talking breaks first

Dave Rupert argues that 'moving fast' kills team conversation first, and AI makes it worse by giving developers an excuse to skip talking to experts. The result: duplicate systems, mounting technical debt, and junior developers who never learn why certain patterns matter.

Fake Scholar, Real Damage: AI's Word-Laundering Problem

A fake historian named Blake Whiting published 13 books in one week. Real scholars found their own work inside them. Nobody knows who's behind it.

Salesforce Goes Headless: Benioff Bets on Agents, Not Seats

Salesforce announces Headless 360, exposing its entire platform as APIs, MCP tools, and CLI commands for AI agents like Claude Code and Cursor. The initiative shifts from per-seat to consumption-based pricing as agents outnumber humans. It includes Agentforce and Agent Script (an open-sourced DSL for deterministic/probabilistic workflows). The piece also examines why Workday and ServiceNow face the same headless choice.

Bookbinder asks: what if AI is using you?

Hilarius Bookbinder thinks we need to stop calling AI 'just a tool.' In a new essay, he argues the relationship might run in reverse: AI could be using humans to evolve, the way nests use birds to make more nests. Drawing on Heidegger, evolutionary biology, and the hidden labor of gig workers, he asks what happens to human agency when we become part of AI's reproductive cycle.

Gas Town Accused of 'Stealing' User LLM Credits to Self-Improve

A GitHub issue alleges that Gas Town, Steve Yegge's autonomous AI agent system, uses users' LLM credits and GitHub accounts to fix bugs in the Gas Town project itself and submit PRs upstream without explicit consent. The behavior is reportedly built into default installation via formulas (gastown-release.formula.toml and beads-release.formula.toml) and not disclosed in documentation.

Fake Scholar 'Blake Whiting' Floods Amazon With AI-Generated Books

Someone using the fake persona 'Blake Whiting' published 13 AI-generated books on Amazon in one week, reshuffling real researchers' work without attribution and selling it as original scholarship.

Lights-Out Codebases: Why One Distinguished Engineer Stopped Coding

Philip Su, a Distinguished Engineer who worked at Microsoft, Meta, and OpenAI, argues that the individual contributor role is evolving into managing AI agents. He proposes 'lights-out codebases' where no human reviews code directly, drawing parallels to chess engines that surpassed human grandmasters. He uses Claude Code CLI primarily and hasn't written code himself in four months while maintaining 40 hours of weekly output by orchestrating AI agents.

MATCH Act: Comply With US Chip Bans or Get Cut Off

The bipartisan MATCH Act gives US allies 150 days to align their export controls with American restrictions on semiconductor manufacturing equipment, or face a ban on servicing their tools. The bill targets Huawei, SMIC, and YMTC among others, shifting from entity-based restrictions to country-wide prohibitions on 'chokepoint' equipment. It's a high-stakes bet that American technological leverage can force compliance without fracturing the coalition.

Wasm Now Talks Directly to Apple GPU, 5x Faster AI Restores

The post explores zero-copy GPU inference from WebAssembly on Apple Silicon. The author demonstrates that Wasm modules can share linear memory directly with the GPU through Apple's Unified Memory Architecture, validating a three-link chain (mmap, Metal's bytesNoCopy, Wasmtime's MemoryCreator) and testing it with Llama 3.2 1B inference. The results show negligible overhead at the Wasm-to-GPU boundary and enable portable KV cache serialization for stateful AI actors, with a 5.45x speedup for restoring cached context versus re-prefilling.

The Man Who Built ELIZA Then Turned Against AI

A new play dramatizes Joseph Weizenbaum, who built the first chatbot at MIT in 1966 and then spent decades warning people not to trust machines with human decisions. Tom Holloway's Eliza premieres at Melbourne Theatre Company, September 28 through October 31, 2026.

Antithesis Built a Skiptree to Fix BigQuery's Blind Spot

Antithesis CEO Will Wilson explains how the company developed a 'skiptree' generalization of skiplists to optimize tree-structured queries in Google BigQuery. The approach represents tree levels as separate SQL tables, turning ancestor queries into fixed-size JOINs instead of recursive scans. They used this solution for six years before building Pangolin, their own analytic database for hierarchical data.