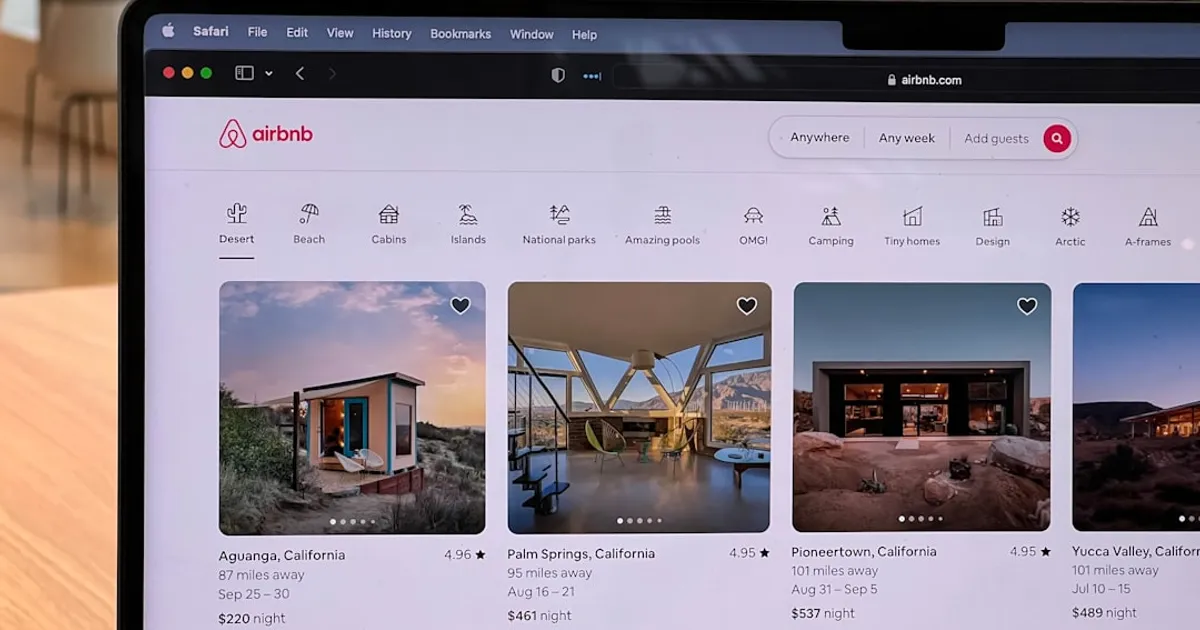

Burla wants you to know it can process a lot of data fast. The cloud startup just published a demo that analyzes 1.7 million Airbnb photos across 119 cities, using CLIP to score images and Claude Haiku Vision to validate findings like opium-den vibes, messy kitchens, and TVs mounted way too high. It also pushed 50 million reviews through a three-tier funnel that ended with Haiku analyzing the weirdest 12,000. The whole thing ran on a single Burla cluster that peaked at roughly 1,700 CPU workers and 20 A100 GPUs. Infrastructure is usually where jobs of this size stall; by Burla's account, this one ran smoothly from start to finish.

Here's how it works. CLIP (ViT-B-32 via OpenCLIP) encoded every photo as a vector and shortlisted the candidates that best matched text prompts. Those candidates went to Claude Haiku for visual confirmation. Reviews were regex-filtered first, then SBERT embeddings clustered the top 200,000, and Haiku analyzed the final 12,000. Cheap filters first, expensive models last.
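The "cheap filters first, expensive models last" idea is easy to see in miniature. The sketch below is not Burla's code: `review_funnel` is a hypothetical helper, the `cheap_score` callable stands in for the SBERT step (it ranks rather than clusters, which is a simplification), and `expensive_label` stands in for a Claude Haiku call. The point it shows is that the costly function runs only on whatever survives the first two tiers.

```python
import re
from typing import Callable, List, Tuple


def review_funnel(
    reviews: List[str],
    pattern: str,
    cheap_score: Callable[[str], float],
    keep: int,
    expensive_label: Callable[[str], str],
) -> List[Tuple[str, str]]:
    """Hypothetical three-tier funnel: regex -> cheap ranking -> expensive model."""
    # Tier 1: a case-insensitive regex pass is nearly free and drops most reviews.
    rx = re.compile(pattern, re.IGNORECASE)
    tier1 = [r for r in reviews if rx.search(r)]

    # Tier 2: rank the survivors with a cheap scorer (stand-in for an
    # embedding model) and keep only the top `keep`.
    tier2 = sorted(tier1, key=cheap_score, reverse=True)[:keep]

    # Tier 3: the expensive call (stand-in for an LLM) sees only the shortlist.
    return [(r, expensive_label(r)) for r in tier2]


# Toy usage: made-up reviews, string length as a dummy scorer,
# and a lambda in place of a real model call.
sample = [
    "Great host.",
    "A goat lived in the bathroom.",
    "TV bolted to the ceiling.",
    "Quiet street.",
]
print(review_funnel(sample, r"goat|ceiling", cheap_score=len, keep=1,
                    expensive_label=lambda r: "flag"))
# → [('A goat lived in the bathroom.', 'flag')]
```

In a real pipeline each tier would shrink the input by orders of magnitude (50 million, then 200,000, then 12,000 in Burla's run), which is what keeps the per-item cost of the last tier affordable.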

But let's be honest. The GitHub repo lives in `burla-cloud/examples`. The demo page says "Built on Burla" at the bottom. Hacker News commenters immediately flagged it as advertising. That's fine. It's a good ad. The technical writeup is detailed, the code samples are real, and the findings are actually entertaining.

Burla raised $10 million in seed funding led by Alliance of Capital. The startup pitches itself as an open-source alternative to proprietary cloud providers for AI workloads. The programming model is simple: you write a Python function, call `remote_parallel_map`, and it runs across a cluster. No Docker, no Kubernetes. The demo proves the tool works at scale. Whether the market needs another cloud abstraction layer is a different question.
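The article sums up the programming model in one line: write a function, call `remote_parallel_map`, and it fans out across a cluster. The sketch below mimics that call shape on a single machine with a thread pool. It is an assumption that the real function takes a function plus a list of inputs and returns results in input order; `local_parallel_map` and `score_city` are hypothetical names, and none of this is Burla's implementation.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Iterable, List, Tuple, TypeVar

T = TypeVar("T")
R = TypeVar("R")


def local_parallel_map(fn: Callable[[T], R], inputs: Iterable[T],
                       max_workers: int = 4) -> List[R]:
    """Hypothetical local stand-in: apply fn to every input concurrently.

    Threads keep the sketch simple; a cluster service would instead run
    each call on a separate worker machine.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map yields results in the same order as the inputs.
        return list(pool.map(fn, inputs))


def score_city(city: str) -> Tuple[str, int]:
    # Hypothetical per-input work; a real job might load and score photos here.
    return city, len(city)


print(local_parallel_map(score_city, ["Paris", "Tokyo", "Austin"]))
# → [('Paris', 5), ('Tokyo', 5), ('Austin', 6)]
```

The appeal of this shape is that the function you test locally is the same one you hand to the cluster; under the stated assumption, moving to Burla would mean swapping `local_parallel_map` for `remote_parallel_map` and leaving `score_city` untouched.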