The idea is sound: keep Claude Desktop for thinking work, route terminal tasks through free local models via Ollama. Your Claude quota doesn't get eaten by lints and batch refactors. The execution here is messier.

Coherence Daddy published a polished 21-slide tutorial promising roughly 90% cost reduction. What the GitHub repo actually contains is a copy-paste prompt, some AI-generated slides, and zero cost calculations to back up that headline number, so the 90% figure is aspirational at best. More concerning, Hacker News commenters quickly identified that the entire setup wraps @musistudio/claude-code-router, an npm package, without crediting its author. The repo carries an MIT license stating "no attribution required," which is legally fine for MIT code, but it feels off when your main contribution is someone else's tool wrapped in a presentation deck and branded content.

There's also the ToS question nobody in the repo addresses. Anthropic's terms prohibit circumventing usage limits and accessing the service through unauthorized methods. Whether routing requests through a local proxy that swaps in alternative models mid-conversation counts as circumvention is untested. Nobody's reporting bans yet, but anyone considering this should actually read Anthropic's terms before committing their workflow to it.

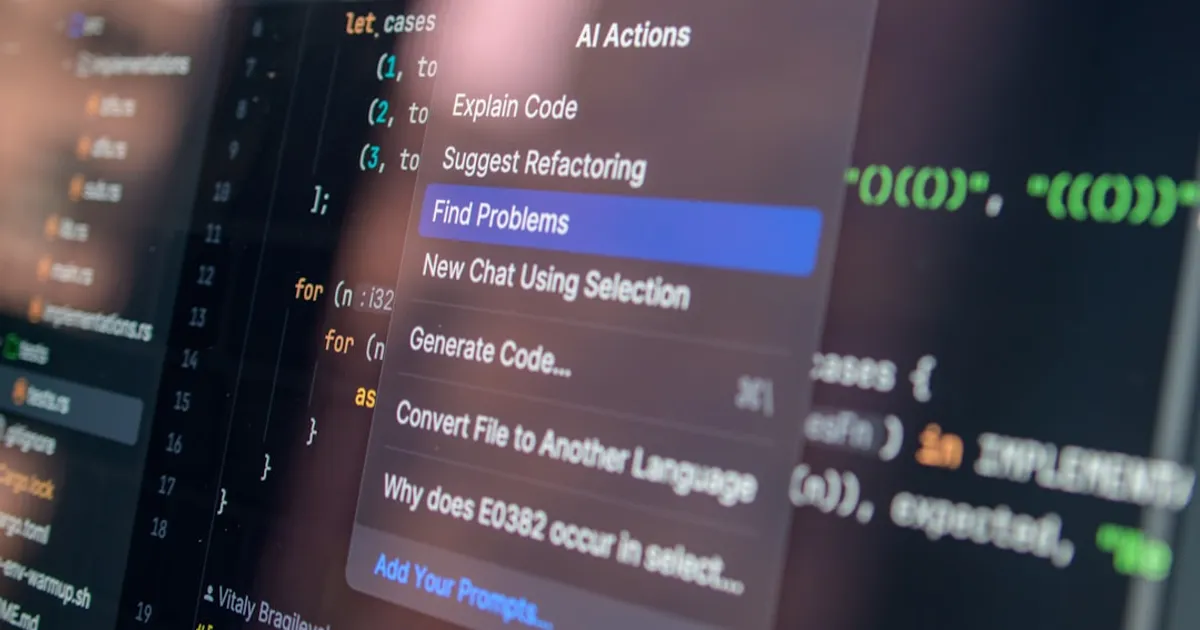

Splitting strategic and mechanical work across different engines is a useful pattern for anyone running agents regularly. The router itself intercepts outgoing requests from Claude Code, sends some to Anthropic's API and others to a local Ollama instance running models like Gemma or Qwen, then passes responses back through the same interface. It's middleware, not magic. Just grab claude-code-router from musistudio directly and skip the influencer packaging.
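To make the "middleware, not magic" point concrete, here is a minimal sketch of the routing decision in Python. It is not claude-code-router's actual code or configuration format. The task categories, the `Backend` type, the `choose_backend` function, and the placeholder model labels are all hypothetical. The only external details assumed are Anthropic's public API host and Ollama's default local address (`http://localhost:11434`).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    name: str
    base_url: str
    model: str  # placeholder label, not a real model identifier


# Assumed endpoints: Anthropic's hosted API and Ollama's default local port.
ANTHROPIC = Backend("anthropic", "https://api.anthropic.com", "claude-placeholder")
OLLAMA = Backend("ollama", "http://localhost:11434", "qwen-placeholder")

# Hypothetical set of "mechanical" task kinds that are cheap to run locally.
MECHANICAL_TASKS = {"lint", "format", "batch_refactor"}


def choose_backend(task_kind: str) -> Backend:
    """Return the local backend for mechanical tasks, the hosted one otherwise."""
    if task_kind.lower() in MECHANICAL_TASKS:
        return OLLAMA
    return ANTHROPIC


if __name__ == "__main__":
    for kind in ("lint", "architecture_review"):
        backend = choose_backend(kind)
        print(f"{kind} -> {backend.name} ({backend.model})")
```

A real router also has to translate request and response formats between the two APIs. This sketch only covers the decision of where a request goes, which is the part that determines how much of your Claude quota gets spent.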