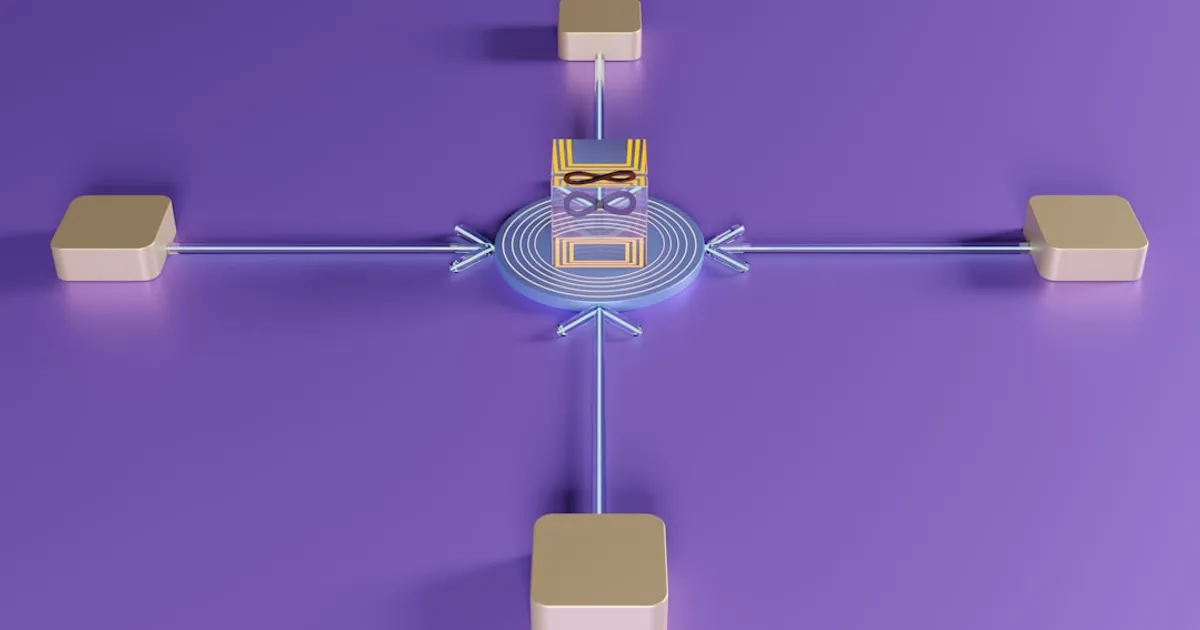

GlassFlow just open-sourced a stream processing engine that moves data from Kafka to ClickHouse at 500,000 events per second. The tool sits between your event stream and your database as a middleware layer, much as OpenRouter sits between applications and LLM providers, letting you transform, filter, and deduplicate data before it hits storage. That pre-storage step is a gap a lot of data teams fill with custom code or workarounds inside ClickHouse itself.

GlassFlow handles both stateless transformations (removing nulls, replacing timestamps) and stateful operations like deduplication and temporal joins over configurable time windows. Processing data upstream means you can avoid resource-heavy ClickHouse patterns like ReplacingMergeTree, which only collapses duplicates during background merges, and FINAL queries, which force that merge at read time. It also gives you exactly-once delivery guarantees and handles late-arriving events, which are the details that break production pipelines.
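To make the stateless versus stateful distinction concrete, here is a minimal Python sketch of both kinds of step. It is not GlassFlow's SDK or its implementation: `strip_nulls`, `WindowedDeduplicator`, `process`, and the `event_id` and `ts` field names are hypothetical, and the sketch assumes timestamps arrive in non-decreasing order, so it does not deal with late events.

```python
from collections import OrderedDict


def strip_nulls(event: dict) -> dict:
    """Stateless step: drop fields whose value is None."""
    return {k: v for k, v in event.items() if v is not None}


class WindowedDeduplicator:
    """Stateful step: reject a key already seen within the last `window_seconds`.

    Assumes timestamps are non-decreasing (illustrative simplification).
    """

    def __init__(self, window_seconds: float):
        self.window_seconds = window_seconds
        # key -> timestamp of the accepted event, oldest entry first
        self._seen = OrderedDict()

    def _evict(self, now: float) -> None:
        # Forget keys whose window has fully elapsed.
        while self._seen:
            _, ts = next(iter(self._seen.items()))
            if now - ts < self.window_seconds:
                break
            self._seen.popitem(last=False)

    def accept(self, key, timestamp: float) -> bool:
        self._evict(timestamp)
        if key in self._seen:
            return False  # duplicate inside the window
        self._seen[key] = timestamp
        return True


def process(events, window_seconds: float):
    """Deduplicate by `event_id`, then clean each surviving event."""
    dedup = WindowedDeduplicator(window_seconds)
    out = []
    for event in events:
        if dedup.accept(event["event_id"], event["ts"]):
            out.append(strip_nulls(event))
    return out
```

The stateless function needs nothing but the event in front of it, while the deduplicator has to remember keys for the length of the window. That retained state is the part an engine has to persist and scale.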

The existing alternatives tell you something about the problem. Flink is powerful but complex to operate. Kafka Streams locks you into the JVM. Materialize takes a different approach entirely, exposing stream processing as incrementally maintained SQL views. GlassFlow bets on Kubernetes-native deployment with a Python SDK, which is a reasonable trade-off if your team already lives in that ecosystem.

GlassFlow is Apache 2.0 licensed, with a live demo at demo.glassflow.dev. The architecture scales horizontally via Helm on major cloud providers, and there's a Web UI for building pipelines visually. But the dead-letter queue is the quiet feature worth paying attention to. It catches malformed events before they poison your ClickHouse tables, which is the kind of thing you don't appreciate until 3am when the pager goes off.
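The dead-letter idea is simple enough to sketch in a few lines of Python. This is an illustration of the general pattern, not GlassFlow's code: the `route` function, the `REQUIRED_FIELDS` schema, and the field names are all hypothetical, and a real pipeline would publish the rejects to a separate topic or table instead of a list.

```python
import json

# Hypothetical schema: field name -> accepted Python type(s).
REQUIRED_FIELDS = {"event_id": str, "ts": (int, float)}


def route(raw_messages):
    """Split raw JSON strings into valid events and dead letters.

    A message is dead-lettered if it is not valid JSON, is not a JSON
    object, or is missing or mistyping a required field.
    """
    valid, dead_letters = [], []
    for raw in raw_messages:
        try:
            event = json.loads(raw)
            if not isinstance(event, dict):
                raise ValueError("payload is not a JSON object")
            for field, expected in REQUIRED_FIELDS.items():
                if field not in event:
                    raise ValueError(f"missing field: {field}")
                if not isinstance(event[field], expected):
                    raise ValueError(f"bad type for field: {field}")
            valid.append(event)
        except ValueError as exc:  # json.JSONDecodeError is a ValueError
            # Keep the original payload and the reason for later inspection.
            dead_letters.append({"raw": raw, "error": str(exc)})
    return valid, dead_letters
```

Keeping the raw payload alongside the error is what makes the queue useful: the bad event never reaches the table, but it can still be inspected and replayed once the producer is fixed.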