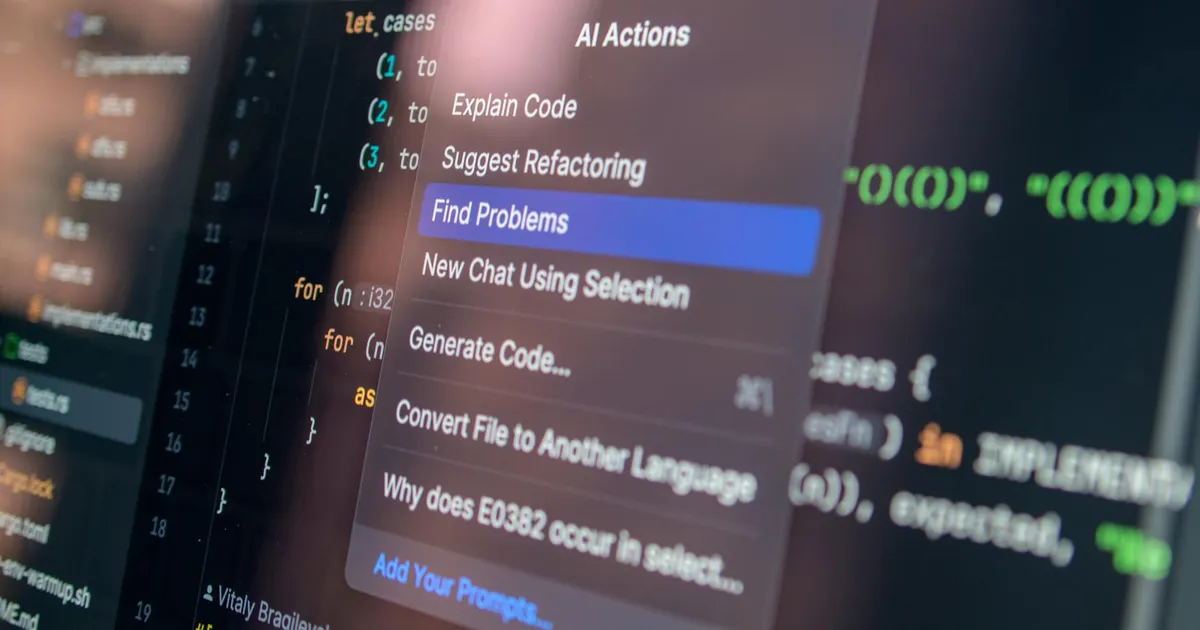

Cybeetle is an early-stage AI security platform targeting software developers with automated code vulnerability scanning and threat intelligence. The product combines static analysis with LLM reasoning to identify vulnerabilities, rank them by risk, generate plain-language explanations, suggest patches, and produce structured threat reports. The goal is a full remediation pipeline — not just a flag-and-report scanner. Its primary interface, Cybeetle Chat, makes the LLM-native design explicit.

The founder, who goes by angeltimilsina on Hacker News, shared that Cybeetle was rejected by Y Combinator when the product was scoped narrowly as a "security LLM reasoning layer." Since then, the team has expanded into code analysis and remediation, and the founder is now reapplying to YC. Publicly listed personal email addresses and the founder's candor on HN point to a pre-seed company, likely a one- or two-person operation.

Cybeetle walks into a crowded room. Snyk, GitHub Advanced Security via CodeQL, Semgrep, Socket.dev, and Apiiro are all established here. The closest analog is Snyk's DeepCode AI integration, which uses AI to explain vulnerabilities to developers and guide fixes rather than just surfacing them as raw findings. Competing on explanation and remediation is a more defensible position than competing on detection breadth alone, where the incumbents have years of rule sets and data behind them.

The YC rejection was probably the right call at the time. A reasoning layer without a clear workflow home is a hard pitch. The rebuilt product at least answers the "so what do I do with this?" question — scan, understand, fix. Whether the LLM-generated explanations and patches are materially better than what Snyk or Semgrep already offer is the real question, and Cybeetle hasn't published benchmarks. That's the gap between an interesting demo and a fundable company. The next YC cycle will tell us which one this is.