Invite-only communities with traceable membership trees may be the most practical structural defense against AI-generated content pollution — and lobste.rs has been running that experiment for years.

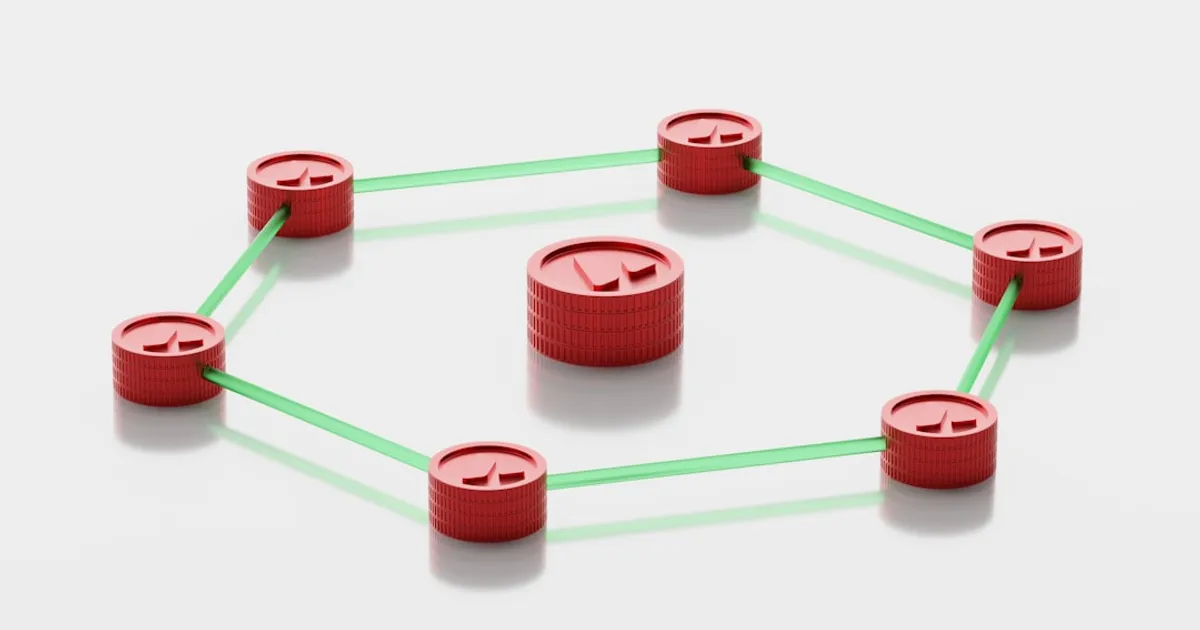

A writer identified as "jes" argues on abyss.fish that lobste.rs's model works because every account can be traced through its invite chain. New members must wait 70 days before they can invite anyone else. When moderators spot an AI slopbot, they pull the thread backward and prune the entire branch of accounts stemming from the same compromised inviter — a moderation action that is structurally impossible on platforms where anyone can register freely.
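The trace-and-prune idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not lobste.rs's actual code or schema: the `Account` and `InviteTree` names, the in-memory dictionaries, and the sample usernames are all hypothetical, and the choice to ban the inviter along with everyone downstream is an assumption about how a moderator might apply the model.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Dict, List, Optional

INVITE_WAIT = timedelta(days=70)  # waiting period cited in the post


@dataclass
class Account:
    name: str
    joined: date
    invited_by: Optional[str] = None  # None marks a founding (root) account
    banned: bool = False


class InviteTree:
    """Hypothetical in-memory invite tree; not lobste.rs's real schema."""

    def __init__(self) -> None:
        self.accounts: Dict[str, Account] = {}
        self.children: Dict[str, List[str]] = {}

    def add_root(self, name: str, joined: date) -> None:
        self.accounts[name] = Account(name, joined)
        self.children[name] = []

    def invite(self, inviter: str, name: str, today: date) -> None:
        sponsor = self.accounts[inviter]
        if sponsor.banned:
            raise PermissionError(f"{inviter} is banned")
        # New members must wait before they can invite anyone else.
        if today - sponsor.joined < INVITE_WAIT:
            raise PermissionError(f"{inviter} has not waited {INVITE_WAIT.days} days")
        self.accounts[name] = Account(name, today, invited_by=inviter)
        self.children[name] = []
        self.children[inviter].append(name)

    def chain(self, name: str) -> List[str]:
        """Walk from an account back to its root inviter."""
        path = []
        current: Optional[str] = name
        while current is not None:
            path.append(current)
            current = self.accounts[current].invited_by
        return path

    def prune(self, inviter: str) -> List[str]:
        """Ban an inviter and every account downstream of it (assumed policy)."""
        removed = []
        stack = [inviter]
        while stack:
            current = stack.pop()
            self.accounts[current].banned = True
            removed.append(current)
            stack.extend(self.children[current])
        return removed


tree = InviteTree()
tree.add_root("alice", date(2024, 1, 1))
tree.invite("alice", "bob", today=date(2024, 4, 1))  # alice is past the wait
tree.invite("bob", "slopbot1", today=date(2024, 7, 1))
tree.invite("bob", "slopbot2", today=date(2024, 7, 2))

trail = tree.chain("slopbot1")  # walk back from the flagged account
removed = tree.prune("bob")     # ban bob and everything bob invited
```

Here `chain` is the "pull the thread backward" step and `prune` removes the branch, leaving `alice` and any unrelated branches untouched. A platform with open registration has no `invited_by` link to follow, which is why the same operation is not available there.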

The cost to bad actors is the key mechanism. Running a coordinated AI content campaign requires compromising real, tenured members of the invite chain. That is a structural barrier, not a policy one.

The jazzband shutdown sharpens the stakes. The collaborative open-source organization on GitHub folded in part because it was overwhelmed by AI-generated pull requests, a concrete example of what happens when a community has no friction at the door. Mainstream platforms such as Facebook, X, and Reddit are further along: jes characterizes them as having already tipped past the point of feeling predominantly human.

The open question is whether the model scales. Lobste.rs works partly because it has never tried to grow fast. For developer forums or academic platforms that need critical mass to function, a 70-day waiting period before new members can invite anyone could strangle growth before trust networks have a chance to form. The Hacker News discussion of the post surfaced exactly this tension without resolving it, which is probably the right place to leave it.